AI data center power requirements are reshaping global energy infrastructure, with demand projected to increase 165% by 2030.

Key factors driving this transformation include:

- Power density rising from 8 kW per rack to current averages of 17–30 kW

- Cooling systems now consuming 38–40% of total energy, more than double the cooling power required in traditional facilities

- Geographic concentration creating unprecedented strain on regional power grids and transmission infrastructure

- Renewable energy integration becoming essential as hyperscalers commit to carbon-neutral operations

Organizations must develop comprehensive power strategies now to keep infrastructure constraints from limiting their AI deployments.

The artificial intelligence revolution has created an energy challenge unlike anything the technology industry has faced before. Data centers supporting AI workloads now require unprecedented amounts of continuous power, changing how organizations must approach infrastructure planning. The International Energy Agency projects that global data center electricity consumption will more than double by 2030 to reach 945 terawatt-hours.

AI data centers power modern computing through specialized hardware that operates at densities and utilization rates that legacy infrastructure was never designed to handle. Understanding these requirements has become vital for any organization planning to deploy AI at scale, whether for training large language models, running real-time inference, or supporting the next generation of intelligent applications.

Goldman Sachs Research estimates that AI electricity demand could increase by 50% by 2027. Organizations that fail to secure adequate power infrastructure today risk finding themselves unable to compete in an AI-driven marketplace.

Why Are AI Data Center Power Requirements Fundamentally Different?

Traditional data centers were designed around predictable workloads with relatively modest power requirements. A typical enterprise server rack might draw 5–10 kilowatts of power, with usage patterns that peak during business hours and drop significantly overnight. AI workloads have shattered these assumptions completely.

AI Power Densities Vs Traditional Computing

Modern AI training facilities require power densities of 40–100+ kilowatts per rack, representing a 4–10x increase from conventional requirements. These systems run at maximum capacity around the clock, often for weeks at a time during model training sessions. The continuous nature of AI workloads means there are no off-peak periods to provide relief for power systems or cooling infrastructure.
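
To make the density gap concrete, the short sketch below counts how many racks a fixed IT power budget can feed at each density. The 10 MW budget and the function name are illustrative assumptions; the per-rack figures come from the ranges quoted above, and cooling and distribution losses are ignored.

```python
def racks_supported(it_power_mw: float, kw_per_rack: float) -> int:
    """Whole racks that fit within an IT power budget (cooling and losses ignored)."""
    return int(it_power_mw * 1000 // kw_per_rack)

IT_BUDGET_MW = 10  # hypothetical IT power budget, chosen only for illustration

for label, kw in [("traditional, 10 kW", 10), ("AI, 40 kW", 40), ("AI, 100 kW", 100)]:
    print(f"{label}: {racks_supported(IT_BUDGET_MW, kw)} racks")
```

Under these assumptions, the same electrical feed that once served a full hall of enterprise racks supports only a fraction as many AI racks, which is why power, not floor space, tends to become the binding constraint.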

Power-Intensive AI Hardware

The computational architecture driving these requirements centers on Graphics Processing Units (GPUs) and specialized AI accelerators. Unlike traditional CPUs, which are optimized for sequential processing, AI chips perform thousands of parallel operations simultaneously. This parallel processing capability is essential for training neural networks, but it comes with enormous power requirements. A single high-performance GPU cluster can consume as much electricity as hundreds of traditional servers.

Cooling Requirements Compared to Overall Power Consumption

Heat generation becomes another challenge when AI data centers power these intensive workloads. Cooling systems now account for 38–40% of total data center power consumption. Because every kilowatt delivered to the hardware must also be removed as heat, this cooling overhead substantially enlarges the infrastructure footprint of AI workloads.

Hyperscalers like Microsoft, Google, and Amazon are collectively spending $230 billion annually on AI infrastructure, with projections exceeding $320 billion by 2025. These figures represent the largest infrastructure investment wave in modern computing history.

How Does AI Electricity Demand Vary Across Different Workloads?

AI electricity demand varies depending on the specific workload and deployment model. These differences directly affect capacity planning and cost forecasting.

Power Requirements for AI Training vs. Inference

Training workloads are the most energy-intensive category of AI operations. Large language models like GPT-4 or multimodal systems require massive computational resources during their training phase. Training a single large language model can consume over 50 megawatt-hours of electricity over several weeks or months.

Inference workloads, while less intensive per query, create different challenges due to their scale and variability. A single ChatGPT query requires approximately 2.9 watt-hours of electricity compared to 0.3 watt-hours for a traditional Google search. While the absolute difference seems modest, it is nearly a tenfold increase per query and becomes significant when multiplied across billions of daily queries. The unpredictable nature of inference demand also complicates power planning, as usage can spike based on user behavior or application popularity.
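
The per-query figures are easier to judge once they are scaled up. The sketch below multiplies them by a daily query volume; the one-billion-queries figure is a hypothetical round number for illustration, not a measured value, and the helper name is our own.

```python
CHATGPT_WH_PER_QUERY = 2.9  # per-query figure quoted above
SEARCH_WH_PER_QUERY = 0.3   # per-query figure quoted above

def daily_energy_mwh(queries_per_day: float, wh_per_query: float) -> float:
    """Daily energy in megawatt-hours (1 MWh = 1,000,000 Wh)."""
    return queries_per_day * wh_per_query / 1_000_000

QUERIES_PER_DAY = 1_000_000_000  # hypothetical volume, for illustration only

ai = daily_energy_mwh(QUERIES_PER_DAY, CHATGPT_WH_PER_QUERY)
search = daily_energy_mwh(QUERIES_PER_DAY, SEARCH_WH_PER_QUERY)
print(f"AI queries:     {ai:,.0f} MWh/day")
print(f"Search queries: {search:,.0f} MWh/day")
print(f"Ratio: {ai / search:.1f}x")
```

At that assumed volume, the gap is roughly 2,900 MWh versus 300 MWh per day, which is the kind of difference that shows up in capacity plans rather than rounding errors.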

Specialized AI Applications Impacting Power Planning

Specialized AI applications introduce additional complexity. Computer vision systems processing real-time video streams require continuous high-performance computing. Natural language processing for customer service applications must maintain low latency while handling variable query volumes. Generative AI applications for content creation combine the computational intensity of training with the scale challenges of inference.

Geographic Patterns Driving AI Electricity Demand

The geographic distribution of AI infrastructure adds another layer of complexity. The United States, Europe, and China account for nearly 85% of global data center electricity consumption. This concentration creates intense pressure on regional power grids and transmission infrastructure.

Understanding these demand patterns enables more strategic infrastructure planning. Organizations can optimize their power procurement strategies based on their specific AI workload mix, potentially reducing costs while ensuring adequate capacity for future growth.

What Is the Scale Challenge: From Megawatts to Gigawatts?

The scale of power requirements for modern AI infrastructure has pushed beyond the capacity of traditional data center planning. Where organizations once thought in terms of megawatts, leading AI deployments now require gigawatt-scale power planning. This increase in scale creates new challenges for infrastructure developers and the organizations deploying AI systems.

Individual AI Facilities Compared to Cities in Power Consumption

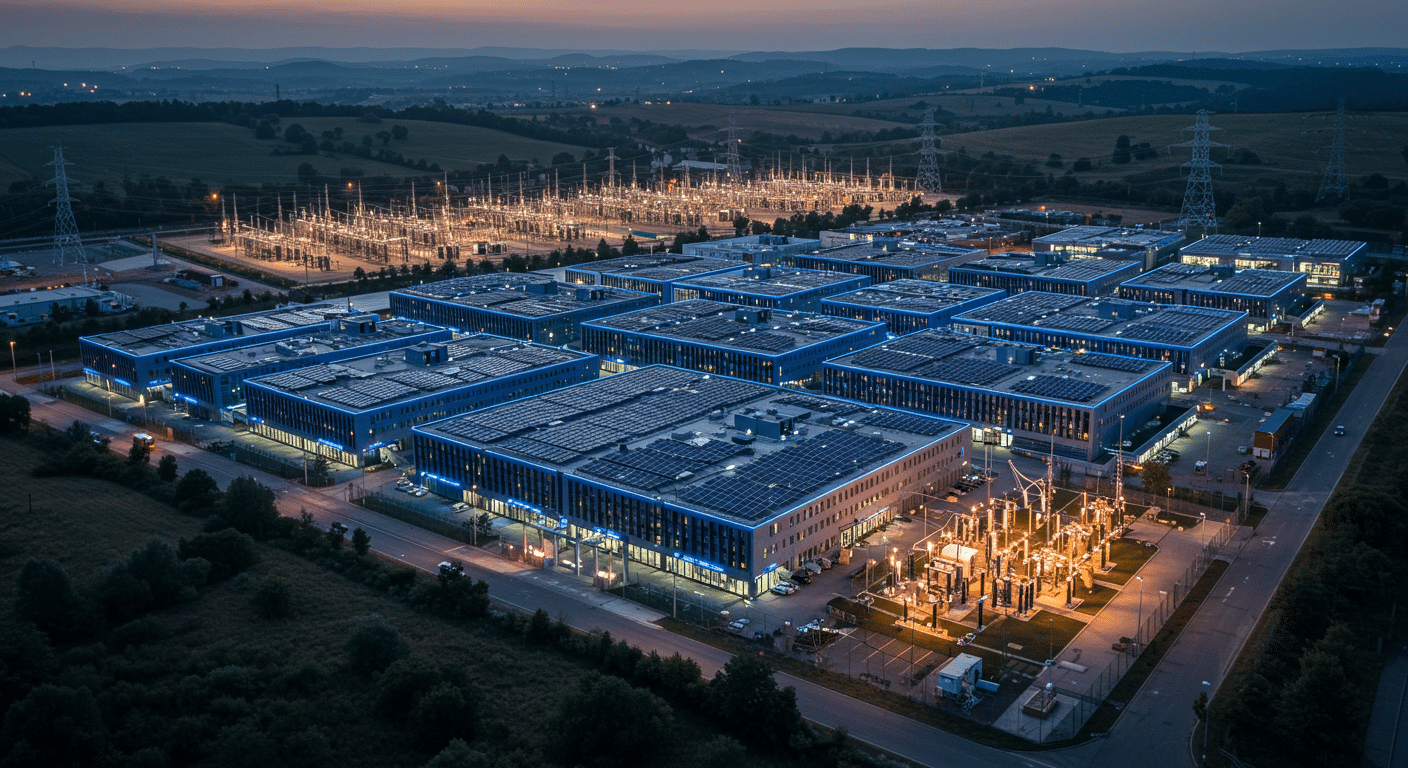

Individual AI data center campuses now routinely require 200–500 megawatts of continuous power, with some hyperscaler facilities planned for over 1 gigawatt. To put this in perspective, a gigawatt data center consumes as much electricity as a major metropolitan area.
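
For a sense of scale, continuous power can be converted to annual energy. The sketch below assumes each campus runs at its full rated load all year, which is a simplification, and compares the result with the 945 TWh global projection for 2030 cited earlier in this article.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours, ignoring leap years

def annual_twh(continuous_mw: float) -> float:
    """Annual energy in TWh for a load running continuously at the given MW."""
    return continuous_mw * HOURS_PER_YEAR / 1_000_000  # MWh -> TWh

IEA_2030_TWH = 945  # global data center projection cited earlier in this article

for mw in (200, 500, 1000):
    twh = annual_twh(mw)
    print(f"{mw} MW -> {twh:.2f} TWh/yr ({twh / IEA_2030_TWH:.2%} of 945 TWh)")
```

Under that full-load assumption, a single 1 GW campus works out to about 8.76 TWh per year, or a little under 1% of the projected global total.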

Infrastructure Challenges of Gigawatt-Scale Power

Electrical transmission infrastructure must be upgraded or built from scratch to deliver such massive amounts of power. Utility companies must plan generation capacity years in advance, often requiring new power plants or renewable energy installations. The lead times for major electrical infrastructure can extend 5–7 years, making early planning essential.

Geographic Concentration Impacts Grid Stability

The concentration of AI workloads exacerbates these challenges. Rather than distributing power demand across many locations, AI deployments tend to cluster in specific regions with favorable conditions. Northern Virginia, Silicon Valley, and major metropolitan areas in Texas have become focal points for AI infrastructure investment, creating strain on local power grids.

Grid stability becomes a critical consideration at these scales. Traditional power systems were designed to handle gradual changes in demand throughout the day, with residential and commercial usage following predictable patterns. AI workloads create sustained, high-density demand that can destabilize regional grids without careful planning and integration.

The economic implications of gigawatt-scale power planning are equally significant. Global capital investments of $5.2 trillion may be required just to support projected AI data center growth through 2030. These investments must be coordinated between private sector AI developers, utility companies, and regional planning authorities.

What Are the Best Power Optimization Strategies for AI Workloads?

Successfully powering AI workloads at scale requires optimization strategies that address both efficiency and reliability. Organizations can’t simply scale up traditional approaches; they need new methodologies designed for the unique characteristics of AI electricity demand.

Advanced Power Management Systems that Reduce Energy Waste

Modern AI facilities employ intelligent power management systems that dynamically allocate electricity based on real-time workload requirements. These systems can shift processing tasks to optimize power usage throughout the facility, reducing peak demand and improving overall efficiency. Smart power distribution systems monitor individual rack consumption and automatically balance loads.
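
As a rough illustration of load balancing, the sketch below places jobs on racks with a simple greedy rule: largest job first, onto the least-loaded rack, deferring anything that would exceed the rack's power cap. This is one possible heuristic, not a description of any particular vendor's system, and the job sizes, cap, and function name are hypothetical.

```python
from typing import List, Tuple

def place_jobs(jobs_kw: List[float], rack_cap_kw: float,
               n_racks: int) -> Tuple[List[float], List[float]]:
    """Greedy placement: largest job first, onto the least-loaded rack.

    Returns (per-rack load in kW, jobs deferred because no rack had room).
    """
    loads = [0.0] * n_racks
    deferred = []
    for job in sorted(jobs_kw, reverse=True):
        target = min(range(n_racks), key=lambda r: loads[r])
        # If the least-loaded rack cannot take the job, no rack can.
        if loads[target] + job <= rack_cap_kw:
            loads[target] += job
        else:
            deferred.append(job)
    return loads, deferred

# Hypothetical job power draws (kW) and a 40 kW per-rack cap.
loads, deferred = place_jobs([30, 25, 20, 20, 15, 10], rack_cap_kw=40, n_racks=3)
print("Rack loads (kW):", loads)
print("Deferred jobs (kW):", deferred)
```

A production system would also react to live telemetry and thermal limits; the point here is only that balancing keeps any single rack from becoming the peak that sizes the whole feed.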

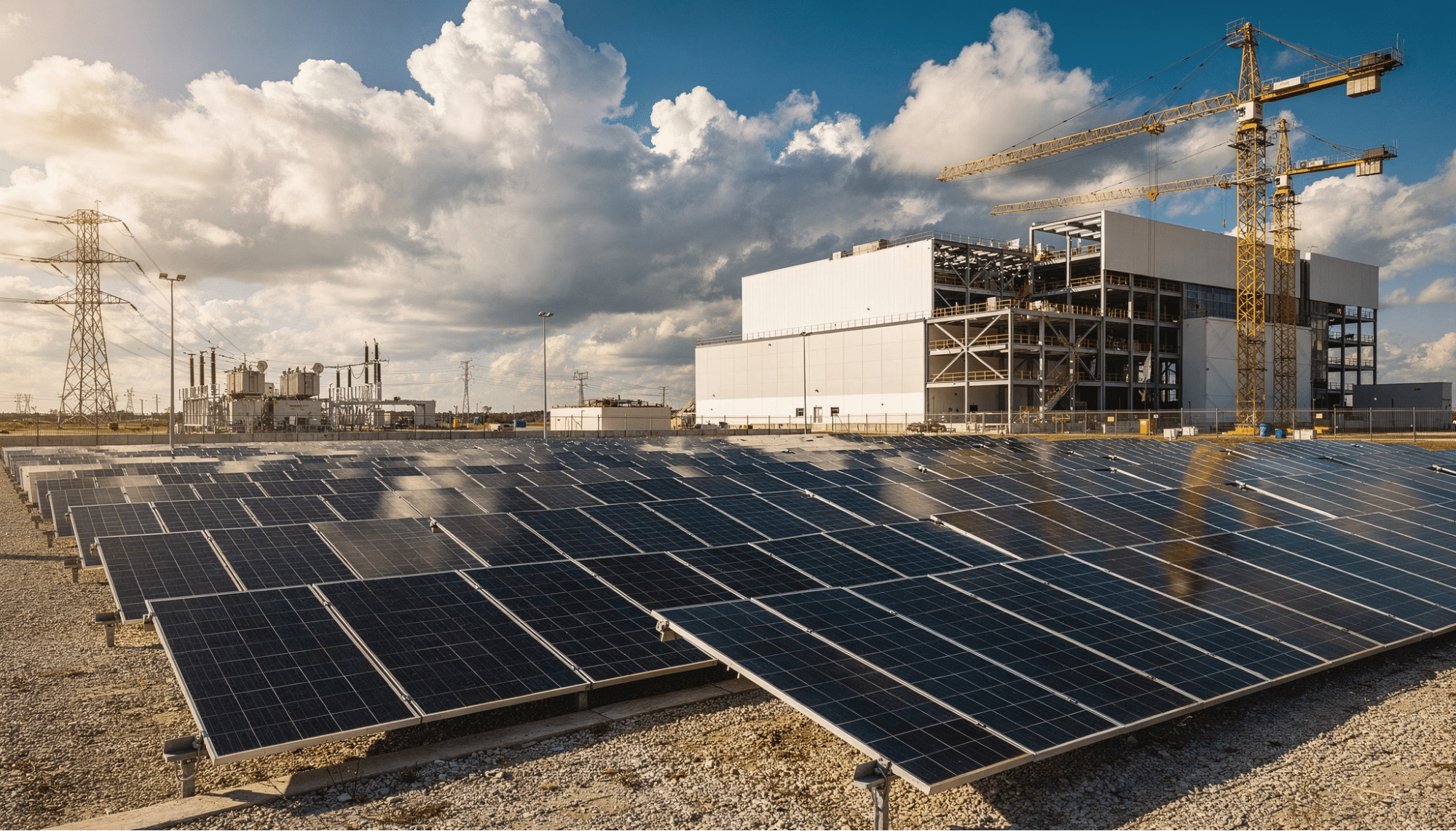

Modular Infrastructure Design for Scalable AI Energy

Scalable AI energy infrastructure benefits from modular design principles that enable rapid deployment and flexible scaling. Rather than building massive facilities upfront, organizations can deploy standardized power modules that can be connected and activated as demand grows. This approach reduces initial capital requirements while maintaining the flexibility to expand quickly when needed.

Workload Scheduling in Power Optimization

Strategic scheduling of AI training workloads can reduce peak power demand and associated costs. By analyzing electricity pricing patterns and grid demand forecasts, organizations can time intensive training sessions during off-peak hours, when power is cheaper and often less carbon-intensive. Advanced scheduling systems can automatically pause and resume training based on power availability and cost thresholds.
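
A minimal sketch of price-aware scheduling follows. It picks the cheapest hours from a day-ahead forecast and applies a simple threshold rule for pausing and resuming. The prices are made-up values rather than market data, the function names are our own, and a real scheduler would also need checkpointing so that paused training can resume without losing work.

```python
from typing import List

def cheapest_hours(prices: List[float], hours_needed: int) -> List[int]:
    """Return the indices of the cheapest hours, in chronological order."""
    if hours_needed > len(prices):
        raise ValueError("not enough hours in the forecast")
    ranked = sorted(range(len(prices)), key=lambda h: prices[h])
    return sorted(ranked[:hours_needed])

def should_run(price: float, threshold: float) -> bool:
    """Pause/resume rule: run only while the price is at or below the threshold."""
    return price <= threshold

# Hypothetical day-ahead prices in $/MWh for hours 0-23 (not real market data).
forecast = [32, 30, 28, 27, 29, 35, 48, 62, 70, 66, 58, 52,
            50, 49, 51, 57, 68, 85, 90, 78, 60, 47, 40, 35]

run_hours = cheapest_hours(forecast, hours_needed=8)
print("Run training during hours:", run_hours)
print("Run at $62/MWh with a $50 cap?", should_run(62, threshold=50))
```

With this sample forecast, the selected window falls overnight, which matches the off-peak pattern described above.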

Innovative Cooling Technologies to Reduce Power Overhead

Innovative cooling technologies can reduce the power overhead associated with thermal management. Liquid cooling systems, while requiring higher initial investment, can reduce cooling-related power consumption. Some facilities are experimenting with immersion cooling, where servers operate in specialized non-conductive fluids, eliminating the need for fans and reducing overall consumption.

Benefits of Renewable Energy Integration

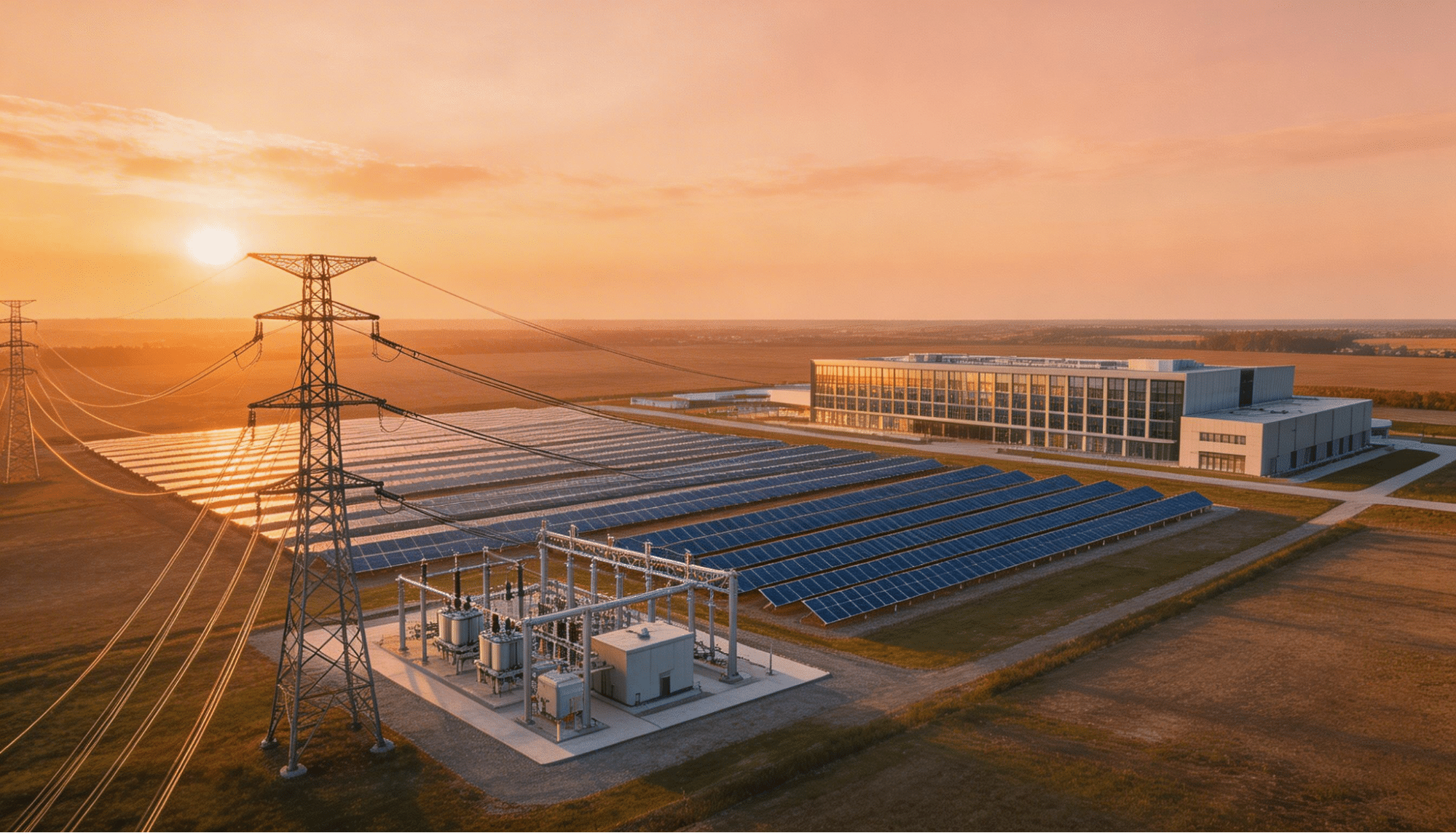

Direct integration of renewable energy sources offers both cost and sustainability advantages for AI workloads. On-site solar installations and battery storage systems can provide predictable, clean power while reducing dependence on strained electrical grids. Organizations are pursuing power purchase agreements (PPAs) that guarantee renewable energy access at fixed prices over multi-year periods.

These optimization strategies work best when implemented as part of a comprehensive power planning approach rather than as isolated solutions. The most successful AI infrastructure projects integrate multiple techniques while maintaining the flexibility to adapt.

How Do You Build Scalable AI Energy Infrastructure?

Creating infrastructure that can adapt to the exponential growth patterns of AI requires forward-thinking approaches to energy system design. Traditional infrastructure planning based on gradual, predictable growth patterns proves inadequate for AI workloads that can scale from proof-of-concept to production deployment in months rather than years.

Site Selection Strategies for AI Power Requirements

The foundation of scalable AI energy infrastructure lies in comprehensive planning that addresses immediate deployment needs and long-term expansion.

Leading organizations are choosing data center locations based primarily on access to abundant, reliable electricity rather than proximity to population centers or existing technology hubs. This power-first approach enables faster deployment while reducing long-term operational costs.

Transmission Infrastructure Planning

Transmission infrastructure planning becomes critical at the scales required for AI deployment. Organizations must evaluate both current power availability and the capacity of electrical transmission systems to deliver additional power as requirements grow. This evaluation includes understanding regional transmission planning processes, identifying potential bottlenecks, and ensuring adequate capacity for multi-gigawatt-scale operations.

Energy Source Diversification

Energy source diversification provides reliability and cost advantages for scalable AI systems. Rather than relying entirely on grid power, successful deployments incorporate multiple energy sources, including on-site renewable generation, battery storage systems, and backup power capabilities. This diversified approach reduces vulnerability to grid outages while providing options for cost optimization based on real-time conditions.

Regulatory Coordination

Large power consumers must navigate complex approval processes for electrical interconnections, environmental reviews, and local zoning requirements. Early engagement with regulatory authorities can prevent delays that might constrain AI deployment timelines.

The most successful scalable AI energy implementations incorporate flexibility mechanisms that enable rapid expansion without major infrastructure redesign. Consider designing electrical systems with expansion capacity built in from the initial deployment, establishing relationships with multiple power suppliers, and maintaining optionality for additional renewable energy procurement as requirements grow.

Financial planning must account for the long-term nature of power procurement while maintaining flexibility for changing requirements. Power purchase agreements, infrastructure investment partnerships, and flexible capacity arrangements provide different mechanisms for balancing cost predictability with operational flexibility.

What Are the Geographic Characteristics to Consider for AI Power Planning?

The geographic concentration of AI workloads creates unique challenges and opportunities for power planning. Unlike traditional computing workloads that can be distributed globally, AI training and many inference applications require concentrated, high-performance infrastructure that intensifies regional power demands.

Regional Power Grid Characteristics

Regional power grid characteristics impact AI deployment strategies. States like Texas and Wyoming offer abundant renewable energy resources and favorable regulatory environments for large power consumers. The Pacific Northwest provides access to hydroelectric power and established technology infrastructure. Each region presents different advantages and constraints for AI electricity demand.

Grid Interconnection Timelines

Grid interconnection availability has become a primary constraint in established technology markets. Northern Virginia, despite its established data center ecosystem, now faces utility connection wait times exceeding three years for new large loads. Silicon Valley encounters similar constraints, forcing organizations to consider alternative locations or accept significant deployment delays.

Climate and Environmental Factors

Climate considerations affect cooling requirements and renewable energy potential. Cooler climates reduce the energy overhead for cooling systems, while regions with high solar or wind resources provide opportunities for cost-effective renewable energy integration.

Regulatory Environments Across Jurisdictions

Regulatory environments vary across different jurisdictions, affecting development timelines and operational costs. Some states offer tax incentives for data center investment, while others impose restrictions on large electricity consumers. Understanding these regulatory differences enables more strategic site selection decisions.

International Considerations for Global AI Deployments

International considerations add further complexity for global AI deployments. European markets face different regulatory requirements around data sovereignty and environmental impact. Asian markets offer different cost structures and infrastructure capabilities. Multinational organizations must balance these geographic factors with technical requirements and business objectives.

The emergence of secondary markets for AI infrastructure reflects these geographic pressures. Regions like Ohio, Indiana, and North Carolina are attracting AI infrastructure investment due to their combination of available power, favorable costs, and supportive regulatory environments. These markets often provide faster deployment timelines than established technology hubs.

How Can You Future-Proof Your AI Power Strategy?

AI technology requires power strategies that can adapt to changing requirements while maintaining cost-effectiveness and reliability. Organizations must balance current needs with anticipated future developments in AI capabilities and infrastructure requirements.

Technology roadmaps suggest that AI power requirements will continue growing as model sizes and capabilities expand. Current large language models with hundreds of billions of parameters may be succeeded by models with trillions of parameters, each requiring proportionally more computational resources. Planning for these scaling trajectories ensures that power infrastructure investments remain relevant as AI capabilities advance.

How Do Hardware Efficiency Improvements Impact Power Planning?

Efficiency improvements in AI hardware provide some offset to growing computational requirements. New generations of AI accelerators deliver better performance per watt, while software optimizations can reduce the power required for specific tasks. However, these efficiency gains are typically overwhelmed by the growth in AI application scope and scale.
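
The interplay between efficiency and demand growth can be sketched with simple compounding. In the example below, compute demand and performance per watt each grow by a fixed factor per year, so power scales with their ratio. Every number here is a hypothetical input chosen to illustrate the effect, not a forecast.

```python
def projected_power_mw(current_mw: float, compute_growth: float,
                       perf_per_watt_gain: float, years: int) -> float:
    """Facility power after `years` if both factors compound once per year."""
    net_annual_factor = compute_growth / perf_per_watt_gain
    return current_mw * net_annual_factor ** years

# All inputs below are hypothetical and chosen only to illustrate the effect.
START_MW = 100
for gain in (1.0, 1.3, 2.0):
    mw = projected_power_mw(START_MW, compute_growth=2.0,
                            perf_per_watt_gain=gain, years=3)
    print(f"perf/watt x{gain}/yr -> {mw:.0f} MW after 3 years")
```

Power stays flat only when efficiency improves as fast as compute demand grows; any shortfall compounds year over year.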

Renewable Energy Technology Evolution

Evolving renewable energy technology creates opportunities for more sustainable and cost-effective AI power strategies. Advances in battery storage, solar panel efficiency, and grid integration make renewable-powered AI infrastructure increasingly viable. Organizations that incorporate these technologies early can achieve cost and sustainability advantages.

How Do Grid Modernization Trends Affect Power Strategies?

Grid modernization trends affect the viability of different power strategies. Smart grid technologies enable more dynamic power management and potentially lower costs for flexible loads. Vehicle-to-grid integration and distributed energy resources create new options for power procurement and management.

What Regulatory Changes Should Organizations Anticipate?

Regulatory trends toward sustainability and carbon reduction will likely impose additional requirements on large electricity consumers. Organizations with proactive sustainability strategies may gain competitive advantages as these requirements tighten. Early investment in renewable energy and efficient infrastructure positions organizations favorably for future regulatory developments.

New AI deployment models may change power requirements in unexpected ways. Edge AI implementations could distribute some computational load away from centralized data centers. Federated learning approaches might reduce the power concentration at individual facilities. However, these distributed approaches often increase overall power requirements even as they change geographic distribution patterns.

Financial strategies must balance the capital intensity of power infrastructure with the evolution of AI technology. Flexible procurement approaches, partnership models, and infrastructure-as-a-service options provide alternatives to large upfront capital investments while maintaining access to cutting-edge power capabilities.

Take Action on Your AI Infrastructure Power Needs

The window for strategic AI power planning is narrowing as infrastructure constraints tighten and competition intensifies for available capacity. Organizations that delay power strategy development risk finding themselves unable to deploy AI capabilities when business needs demand them.

What Should Your Initial Power Assessment Include?

The first step involves conducting a comprehensive assessment of both current and projected AI power requirements. This assessment should consider planned AI initiatives, anticipated scaling requirements, and the power implications of different deployment models. Understanding the full scope of AI electricity demand enables more accurate capacity planning and cost forecasting.

How Do You Approach Strategic Site Selection?

Site selection decisions must prioritize power availability and grid capacity over traditional location factors. Organizations should evaluate multiple potential locations based on electrical infrastructure, renewable energy access, regulatory environment, and expansion potential. This evaluation often reveals opportunities in emerging markets that offer better conditions than established technology hubs.

What Power Procurement Strategies Work Best?

Power procurement strategies require long-term thinking and sophisticated contract structures. Direct power purchase agreements can provide cost predictability while supporting renewable energy development. Utility partnerships can accelerate grid connection timelines while ensuring adequate capacity for future expansion. Multiple procurement approaches provide risk mitigation and optimization opportunities.

When Should You Consider Infrastructure Partnerships?

Infrastructure partnerships offer alternatives to developing power capabilities internally. Experienced energy developers bring expertise in grid interconnection, regulatory navigation, and sustainable energy integration. These partnerships can accelerate deployment timelines while reducing the complexity and risk associated with large-scale power infrastructure development.

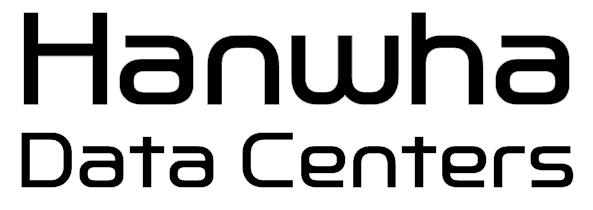

Hanwha Data Centers specializes in developing comprehensive energy solutions for power-intensive digital infrastructure, combining renewable generation with advanced storage systems to deliver reliable, sustainable power for next-generation AI workloads. Our integrated approach addresses the full spectrum of AI power requirements, from site identification and power procurement to comprehensive energy campus development at gigawatt scale. Contact our team to explore how we can support your organization’s AI infrastructure power needs with customized solutions designed for the scale and reliability that modern AI demands.