Key Takeaways

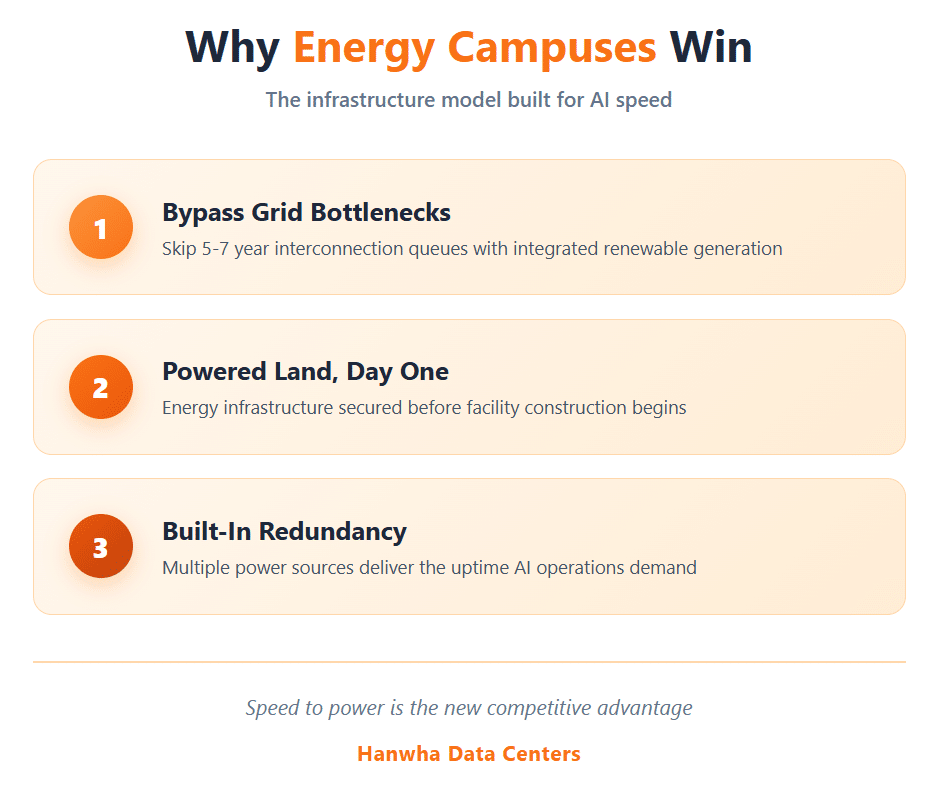

Energy campuses represent the infrastructure model built to deliver AI data center energy at the scale and speed hyperscalers require.

- Grid interconnection delays now stretch 5-7 years in major markets, making traditional power procurement strategies obsolete for AI deployment timelines

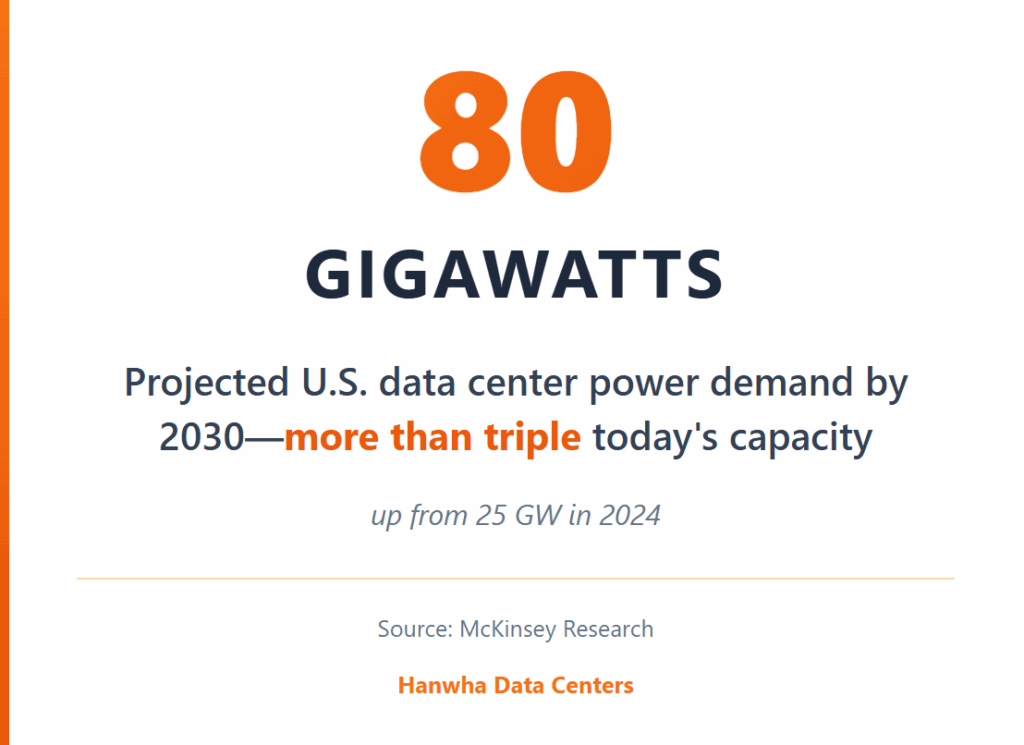

- U.S. data center power demand is projected to exceed 80 GW by 2030, with AI workloads driving the majority of this growth

- Energy campus development combines powered land, renewable generation, and strategic grid connections to bypass traditional infrastructure bottlenecks

- Organizations that secure integrated energy infrastructure gain decisive competitive advantages in AI deployment speed and operational resilience

The companies winning the AI infrastructure race are those solving power delivery first, not facility construction.

Why Is AI Data Center Energy Driving a Complete Infrastructure Rethink?

The compute demands of artificial intelligence have broken traditional approaches to powering data center facilities. In many markets, the power AI data centers require now exceeds what existing grids can deliver, and a single large training cluster can draw as much electricity as a small city.

According to Deloitte’s 2025 AI Infrastructure Survey, power demand from AI data centers in the United States could grow more than thirtyfold by 2035, reaching 123 gigawatts compared to just 4 gigawatts in 2024. AI workloads consume significantly more energy per square foot than traditional computing operations. Modern GPU racks routinely draw 40-80 kilowatts compared to 5-15 kilowatts for conventional server configurations, with next-generation systems pushing toward 130 kilowatts or higher.
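To make the density gap concrete, the rack figures above can be turned into a rack count per megawatt. This is a rough sketch only: the function names and the 100 MW example load are illustrative assumptions, and the arithmetic ignores cooling and distribution overhead, so real facilities would fit fewer racks than it suggests.

```python
import math

# Rack power draw in kW, using the ranges quoted in the paragraph above.
RACK_KW = {
    "conventional server rack": (5, 15),
    "current GPU rack": (40, 80),
    "next-generation GPU rack": (130, 130),  # the text says "130 kilowatts or higher"
}


def racks_per_megawatt(rack_kw: float) -> float:
    """How many racks one megawatt of IT load can feed at a given draw per rack."""
    return 1000.0 / rack_kw


def racks_supported(it_load_mw: float, rack_kw: float) -> int:
    """Whole racks that fit inside a fixed IT power budget (overhead ignored)."""
    return math.floor(it_load_mw * 1000.0 / rack_kw)


# The 100 MW budget is a hypothetical example, not a figure from the article.
for label, (low_kw, high_kw) in RACK_KW.items():
    fewest = racks_supported(100, high_kw)
    most = racks_supported(100, low_kw)
    print(f"{label}: {fewest} to {most} racks in 100 MW")
```

The same power budget that feeds thousands of conventional racks supports only hundreds of next-generation GPU racks, which is why site planning starts from megawatts rather than floor area.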

The challenge extends beyond raw megawatt requirements. These AI data center energy demands concentrate in geographic clusters where land, fiber, and workforce availability intersect, and those locations rarely align with existing transmission capacity.

What Makes Grid Interconnection the Primary Bottleneck for AI Infrastructure?

Power availability has replaced land acquisition as the determining factor in AI data center site selection. While suitable acreage exists across multiple regions, the transmission infrastructure to serve gigawatt-scale loads does not.

CenterPoint Energy in Texas reported a 700% increase in large load interconnection requests between late 2023 and late 2024, growing from 1 GW to 8 GW. Similar patterns appear across every major grid region. According to McKinsey research, U.S. data center power demand is projected to exceed 80 GW by 2030, up from 25 GW in 2024. Utilities are no longer treating grid connections as administrative formalities. Approvals increasingly require infrastructure that must be designed, financed, and built before operations can begin.
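The growth figures cited in this article can be checked with a few lines of arithmetic. The sketch below is illustrative: the helper names are assumptions, and the year counts (1, 6, and 11) are read off the date ranges quoted in the text rather than stated by the sources.

```python
def percent_increase(start: float, end: float) -> float:
    """Percentage change from start to end."""
    return (end - start) / start * 100.0


def growth_multiple(start: float, end: float) -> float:
    """How many times larger the end value is than the start value."""
    return end / start


def implied_annual_growth(start: float, end: float, years: int) -> float:
    """Constant yearly growth rate that would turn start into end over the period."""
    return (end / start) ** (1.0 / years) - 1.0


# (label, start GW, end GW, years between the two quoted dates)
PROJECTIONS = [
    ("CenterPoint large-load requests, late 2023 to late 2024", 1.0, 8.0, 1),
    ("McKinsey U.S. data center demand, 2024 to 2030", 25.0, 80.0, 6),
    ("Deloitte U.S. AI data center demand, 2024 to 2035", 4.0, 123.0, 11),
]

for label, start_gw, end_gw, years in PROJECTIONS:
    print(
        f"{label}: {growth_multiple(start_gw, end_gw):.2f}x, "
        f"+{percent_increase(start_gw, end_gw):.0f}%, "
        f"{implied_annual_growth(start_gw, end_gw, years):.1%} per year"
    )
```

Moving from 1 GW to 8 GW is indeed a 700% increase, and 4 GW to 123 GW is a multiple of 30.75, consistent with "more than thirtyfold." Annualized, the McKinsey and Deloitte projections imply compound growth of a little over 21% and about 36.5% per year, respectively.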

| Grid Region | Interconnection Queue Status | Typical Wait Time* |
| --- | --- | --- |
| Northern Virginia (PJM) | Severely constrained | 5-7 years |
| ERCOT (Texas) | High demand, limited capacity | 3-5 years |
| MISO (Midwest) | Growing congestion | 4-6 years |
| CAISO (California) | Study backlog | 4-7 years |

*Industry estimates based on current queue conditions

The practical implication is straightforward: organizations seeking to deploy AI-ready infrastructure cannot rely on conventional grid connection timelines. As Engineering News-Record reports, data center projects that once hinged primarily on land availability are now constrained by whether utilities can deliver power without compromising system reliability. By the time traditional utility processes deliver power, competitive windows close. Market positions shift. First-mover advantages evaporate.

How Do Energy Campuses Solve the Power Delivery Problem?

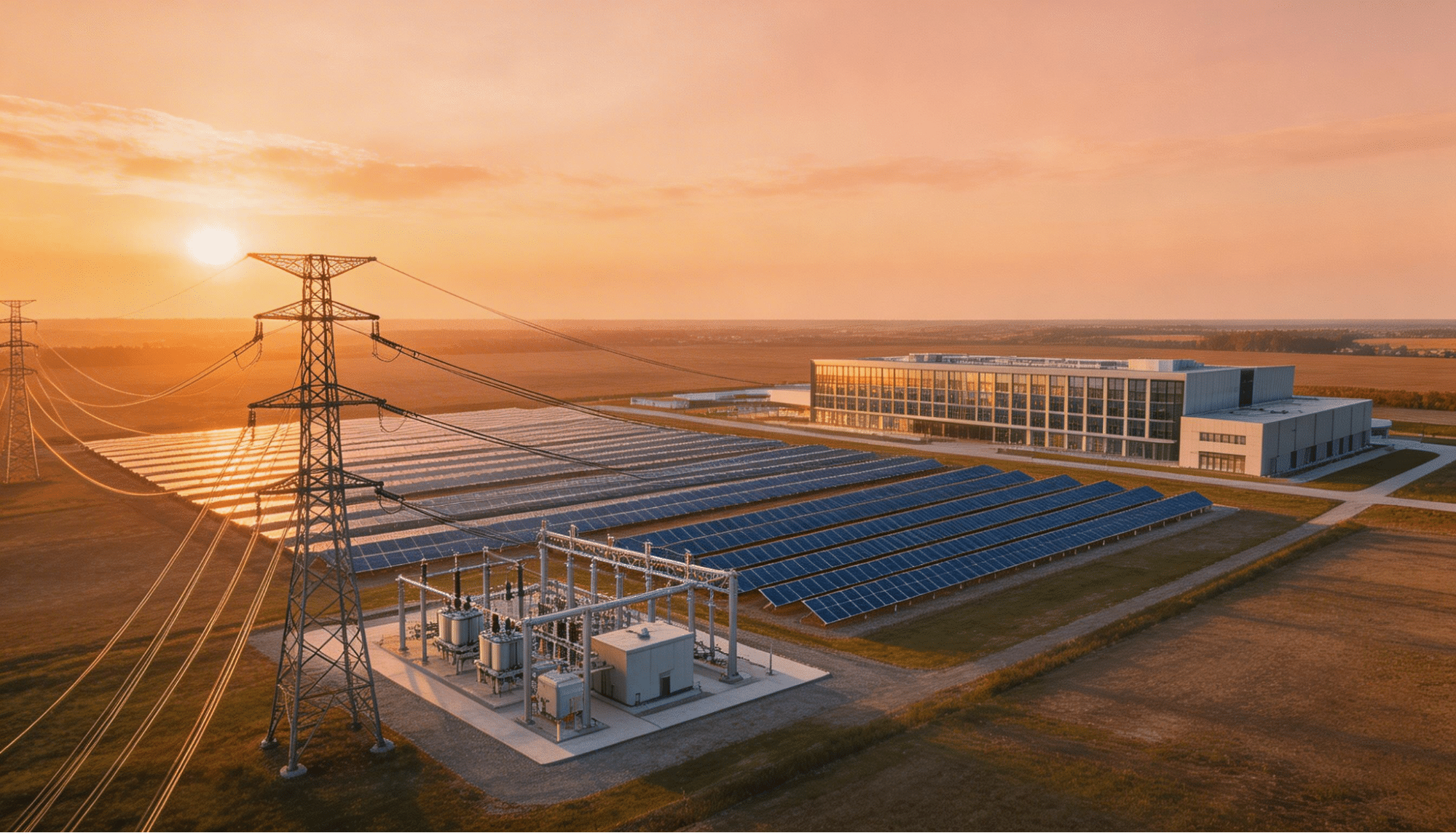

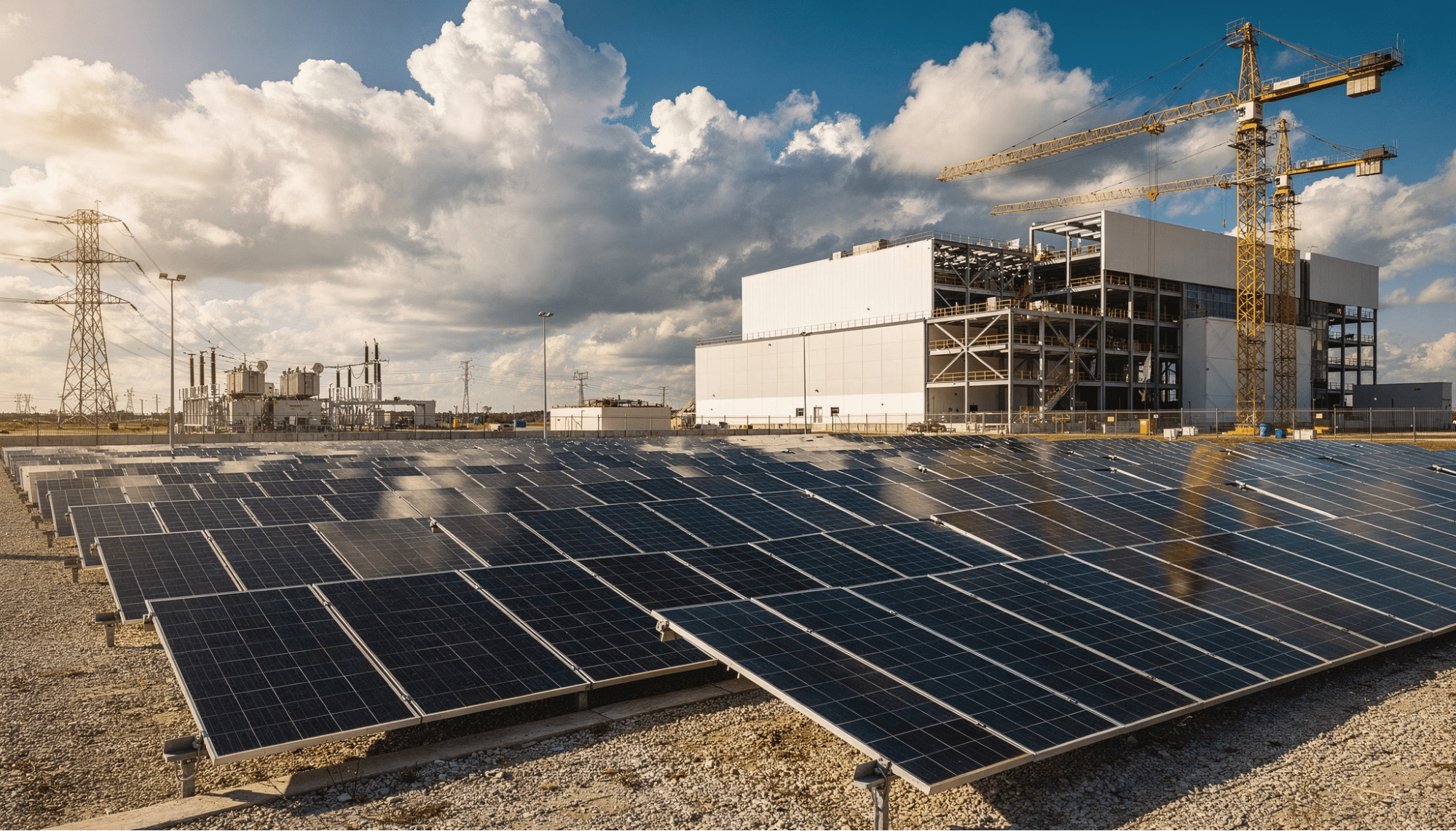

Energy campus development represents a fundamentally different approach to solving the AI data center energy challenge. Rather than acquiring land and then waiting years for grid connections, this model integrates power infrastructure into site development from day one.

The energy campus concept starts with strategic land acquisition in locations where grid proximity, renewable resources, and development feasibility intersect. The development process then adds power infrastructure before facility construction begins. This approach delivers what the market calls “powered land,” sites where energy availability is secured rather than pending.

What Infrastructure Components Define an Energy Campus?

Successful energy campus development requires coordinated execution across multiple infrastructure systems. Each component must align with the others to deliver the energy redundancy and scalability AI operations demand.

Grid interconnection planning begins during initial site selection. Rather than treating utility connections as afterthoughts, energy campus developers assess transmission capacity, substation headroom, and upgrade requirements before land acquisition closes. This front-loaded analysis identifies sites where power delivery timelines align with deployment schedules.

Renewable generation integration addresses both sustainability requirements and practical power delivery challenges. On-site solar installations and wind purchase agreements provide capacity that bypasses congested transmission corridors. Energy storage systems buffer intermittent generation against continuous high-density compute requirements. The combination delivers reliable power without waiting for grid upgrades that may take a decade to complete.

Water infrastructure planning ensures cooling capacity scales alongside power delivery. Modern AI facilities require significant water resources for thermal management, including liquid cooling systems for high-density GPU clusters. Sites lacking adequate water access or wastewater capacity cannot support the cooling loads AI operations generate, regardless of electrical availability.

What Role Does Speed Play in AI Infrastructure Development?

The AI infrastructure market rewards rapid deployment above almost every other consideration. Organizations that secure operational capacity sooner gain access to customers, revenue, and market position that later entrants cannot recapture.

Traditional data center development timelines measured success in quarters. AI infrastructure development measures success in weeks. The difference reflects both the pace of AI market evolution and the capital intensity of deployments. Every month of delay represents both lost revenue opportunity and continued burn of development capital.

Energy campus models compress timelines by addressing power delivery in parallel with facility development. While conventional approaches sequence these activities, with land acquisition followed by utility negotiations followed by interconnection studies followed by construction, energy campus development executes multiple workstreams simultaneously.
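The difference between sequenced and simultaneous workstreams can be sketched as a simple dependency calculation. Every number here is a hypothetical placeholder chosen for illustration, not a figure from this article, and the parallel dependency map is only one assumed way the workstreams might overlap.

```python
# Hypothetical durations in months. These are NOT figures from the article.
DURATIONS = {
    "land_acquisition": 6,
    "utility_negotiations": 12,
    "interconnection_studies": 24,
    "construction": 18,
}

# Conventional approach: each activity waits for the one before it.
SEQUENTIAL_DEPS = {
    "land_acquisition": [],
    "utility_negotiations": ["land_acquisition"],
    "interconnection_studies": ["utility_negotiations"],
    "construction": ["interconnection_studies"],
}

# Assumed parallel plan: utility work starts on day one, and construction
# needs only the land to be secured.
PARALLEL_DEPS = {
    "land_acquisition": [],
    "utility_negotiations": [],
    "interconnection_studies": ["utility_negotiations"],
    "construction": ["land_acquisition"],
}


def finish_month(task: str, deps: dict, durations: dict) -> int:
    """Earliest finish: the latest prerequisite finish plus the task's own duration."""
    start = max((finish_month(d, deps, durations) for d in deps[task]), default=0)
    return start + durations[task]


def project_length(deps: dict, durations: dict) -> int:
    """Total schedule length, set by the slowest chain of dependent tasks."""
    return max(finish_month(task, deps, durations) for task in deps)


print("sequential:", project_length(SEQUENTIAL_DEPS, DURATIONS), "months")
print("parallel:  ", project_length(PARALLEL_DEPS, DURATIONS), "months")
```

With these placeholder inputs the sequenced plan takes the sum of all four durations, while the parallel plan is bounded by its longest chain (utility negotiations followed by interconnection studies). The activity that gates the schedule is still power, but it no longer waits behind everything else.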

| Development Approach | Typical Timeline to Power | AI Market Fit |
| --- | --- | --- |
| Traditional Grid Connection | 5-8 years | Poor |
| Behind-the-Meter Generation | 2-3 years | Moderate |
| Energy Campus (Powered Land) | Accelerated delivery | Strong |

The timeline advantages compound across the development cycle. Sites with secured power attract hyperscaler interest that raw land cannot. This interest accelerates lease negotiations, tenant improvements, and operational readiness. Each phase moves faster because the fundamental power question is already resolved.

How Does Renewable Energy Accelerate AI Data Center Energy Delivery?

Renewable energy has evolved from an environmental preference to a practical necessity for AI infrastructure. Solar and battery storage systems deploy faster than grid upgrades. They operate independently of congested transmission corridors. And they meet the sustainability commitments that major technology companies have made central to their infrastructure strategies.

Microsoft, Google, Amazon, and Meta have all committed to aggressive decarbonization targets. These commitments translate directly into procurement requirements. Hyperscalers increasingly require renewable energy availability as a condition of site selection, not as an optional amenity.

The practical advantages extend beyond sustainability positioning. Energy companies powering AI infrastructure report that solar and battery deployments can proceed while grid interconnection processes continue. This parallel development path allows facilities to begin operations sooner, even if full utility service arrives later.

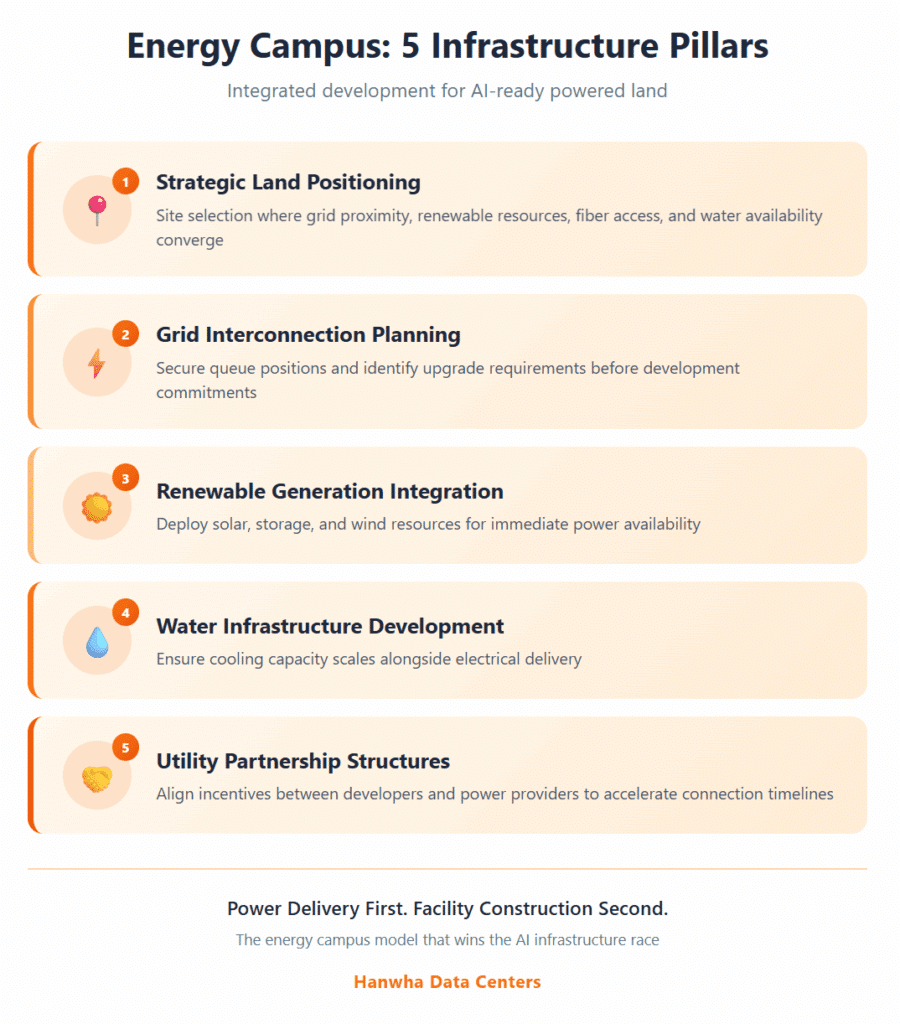

Five Critical Components of Energy Campus Infrastructure

Energy campus development succeeds when it addresses the full spectrum of infrastructure requirements AI operations demand:

- Strategic land positioning selects sites where grid proximity, renewable resources, fiber access, and water availability converge

- Grid interconnection planning secures queue positions and identifies upgrade requirements before development commitments

- Renewable generation integration deploys solar, storage, and potentially wind resources to provide immediate power availability

- Water infrastructure development ensures cooling capacity scales alongside electrical delivery

- Utility partnership structures align incentives between developers and power providers to accelerate connection timelines

Each component reinforces the others. Sites with strong renewable resources reduce grid dependency. Locations with available transmission capacity accelerate interconnection timelines. Properties with adequate water access support the thermal management systems high-density AI workloads require.

What Does Energy Redundancy Mean for AI Operations?

AI workloads cannot tolerate power interruptions. Training runs that execute for weeks require continuous availability. Inference operations that serve real-time applications demand immediate failover when primary sources falter. The stakes of downtime extend beyond revenue loss to competitive positioning and customer trust.

Energy campus architecture addresses redundancy through multiple layers of protection. Grid connections provide baseline capacity with utility-scale reliability. On-site generation offers independence from transmission disruptions. Battery storage bridges gaps between source transitions. The combination delivers uptime guarantees that single-source configurations cannot match.

This multi-source approach also provides operational flexibility that hyperscale data center operations increasingly require. Facilities can shift between power sources based on cost, availability, or carbon intensity. Critical inference operations maintain priority access to the most reliable sources regardless of conditions.
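One way to picture this kind of source switching is a simple priority allocation: critical load is served from the most reliable sources first, and flexible load from whatever is cheapest once an optional carbon price is added. The sketch below is a toy model under stated assumptions. The `PowerSource` fields, the example numbers, and the greedy rule are all hypothetical, and it treats storage as a plain megawatt figure without modeling how long a battery can sustain it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PowerSource:
    name: str
    available_mw: float
    cost_per_mwh: float       # hypothetical price
    carbon_kg_per_mwh: float  # hypothetical carbon intensity
    reliability: float        # hypothetical 0-to-1 score


def allocate(load_mw: float, ordered: list, remaining: dict) -> tuple:
    """Fill a load from sources in the given order; return (plan, unserved MW)."""
    plan = {}
    for source in ordered:
        if load_mw <= 0:
            break
        take = min(load_mw, remaining[source.name])
        if take > 0:
            plan[source.name] = take
            remaining[source.name] -= take
            load_mw -= take
    return plan, load_mw


def dispatch(sources: list, critical_mw: float, flexible_mw: float,
             carbon_price: float = 0.0) -> dict:
    """Serve critical load by reliability, then flexible load by effective cost."""
    remaining = {s.name: s.available_mw for s in sources}
    by_reliability = sorted(sources, key=lambda s: s.reliability, reverse=True)
    by_cost = sorted(
        sources, key=lambda s: s.cost_per_mwh + carbon_price * s.carbon_kg_per_mwh
    )
    critical, short_critical = allocate(critical_mw, by_reliability, remaining)
    flexible, short_flexible = allocate(flexible_mw, by_cost, remaining)
    return {
        "critical": critical,
        "flexible": flexible,
        "unserved_mw": short_critical + short_flexible,
    }


# Illustrative values only.
SOURCES = [
    PowerSource("grid", 100.0, 60.0, 400.0, 0.999),
    PowerSource("solar", 50.0, 30.0, 0.0, 0.90),
    PowerSource("battery", 20.0, 90.0, 0.0, 0.99),
]

print(dispatch(SOURCES, critical_mw=40.0, flexible_mw=100.0))
print(dispatch(SOURCES, critical_mw=40.0, flexible_mw=100.0, carbon_price=0.1))
```

Raising `carbon_price` changes only the ordering for flexible load, so critical inference capacity keeps first claim on the most reliable source whatever the cost or carbon conditions.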

How Should Organizations Evaluate Energy Campus Opportunities?

Decision-makers evaluating AI infrastructure options face a fundamental choice: pursue conventional development paths with predictable delays, or embrace energy campus models that front-load power delivery.

The evaluation process should prioritize sites where power availability is demonstrated rather than projected. Locations with secured grid capacity, permitted renewable installations, and operational utility relationships present lower execution risk than sites requiring years of interconnection work.

Financial analysis must account for time value in AI markets. Facilities that achieve operational status sooner capture revenue streams that delayed projects cannot.

Due diligence should examine the full infrastructure stack. Power availability matters only when accompanied by adequate cooling capacity, fiber connectivity, and proper zoning.
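The evaluation criteria above can be expressed as a simple screening checklist. This is a minimal sketch, assuming a hypothetical `Site` record with one field per criterion. A real diligence process would weigh evidence behind each item rather than reduce it to a yes or no.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Site:
    name: str
    secured_grid_mw: float             # capacity already secured, not projected
    renewables_permitted: bool
    utility_relationship_active: bool
    cooling_adequate: bool
    fiber_available: bool
    zoning_approved: bool


def screening_gaps(site: Site, required_mw: float) -> list:
    """Return the criteria a site fails; an empty list means it passes the screen."""
    gaps = []
    if site.secured_grid_mw < required_mw:
        gaps.append("secured grid capacity below requirement")
    if not site.renewables_permitted:
        gaps.append("renewable installations not permitted")
    if not site.utility_relationship_active:
        gaps.append("no operational utility relationship")
    if not site.cooling_adequate:
        gaps.append("inadequate cooling capacity")
    if not site.fiber_available:
        gaps.append("no fiber connectivity")
    if not site.zoning_approved:
        gaps.append("zoning not in place")
    return gaps


# Hypothetical example sites, for illustration only.
powered = Site("Site A", 300.0, True, True, True, True, True)
raw_land = Site("Site B", 0.0, False, False, True, True, False)

for site in (powered, raw_land):
    gaps = screening_gaps(site, required_mw=200.0)
    print(site.name, "passes" if not gaps else f"fails: {', '.join(gaps)}")
```

Treating each item as a gate rather than a weighted score reflects the point made above: power availability does not compensate for missing cooling, fiber, or zoning.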

What Trends Will Shape This Market Through 2030?

The infrastructure patterns emerging today will define competitive positioning for the remainder of the decade. Organizations that secure energy campus positions now establish foundations that cannot be easily replicated by later entrants.

Grid constraints will intensify before they ease. Transmission development timelines extend far longer than AI market cycles. The sites offering favorable grid access today represent scarce assets whose value increases as competing demand grows.

Renewable energy integration will shift from differentiator to requirement. Hyperscaler procurement standards increasingly mandate renewable power for AI facilities. Sites lacking renewable availability will face shrinking pools of potential tenants.

Technology evolution will continue increasing power density requirements. Current GPU generations already stress infrastructure designed for traditional workloads, and future processor roadmaps project continued density increases.

Frequently Asked Questions

What is an energy campus for AI data centers?

An energy campus is an integrated development approach that combines strategic land acquisition, grid interconnection, renewable energy generation, water infrastructure, and utility planning into a unified site designed for AI workloads. Unlike traditional data center development that treats power as an afterthought, energy campuses deliver “powered land” where energy availability is secured before facility construction begins.

Why are grid interconnection delays affecting AI infrastructure deployment?

Grid operators across the United States face unprecedented demand for large load connections from AI data centers. Interconnection queues in major markets now extend 5-7 years as utilities study upgrade requirements and schedule construction. These delays reflect both surging demand and decades of underinvestment in transmission infrastructure.

How does renewable energy enable faster AI data center power delivery?

Solar installations and battery storage systems can deploy significantly faster than grid transmission upgrades. They operate independently of congested utility corridors and provide immediate capacity while traditional interconnection processes continue. This parallel development path allows AI facilities to begin operations sooner than grid-dependent approaches permit.

What power density do modern AI workloads require?

Current AI GPU racks typically draw 40-80 kilowatts per rack, compared to 5-15 kilowatts for traditional server configurations. Next-generation systems like NVIDIA’s GB200 push densities to 130 kilowatts or higher, with future roadmaps projecting rack densities of 250 kilowatts or more within the next few years.

Start Building Your AI Energy Infrastructure Strategy

The AI data center energy transformation is not a future challenge but a present reality. Organizations waiting for traditional infrastructure to catch up with AI demand will find themselves perpetually behind market leaders who prioritized power delivery from the start.

Energy campus development offers a path forward that aligns infrastructure timelines with business requirements. By integrating powered land, renewable generation, and strategic grid positioning, this approach delivers the foundation AI operations require.

Hanwha Data Centers specializes in energy campus development that accelerates AI infrastructure deployment. With the backing of a Fortune Global 500 company and deep expertise in renewable energy integration, we deliver powered land solutions designed for the demands of high-density compute. Contact our team to explore how energy campus infrastructure can support your AI ambitions.