Key Takeaways

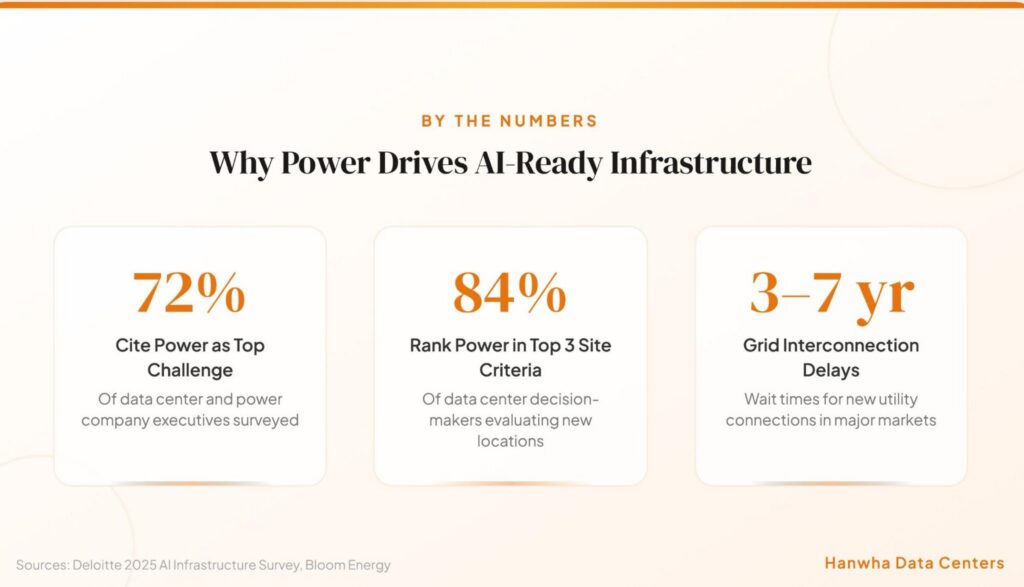

- Power availability has replaced location as the primary constraint for AI-ready infrastructure, with 72% of surveyed executives describing power and grid capacity constraints as very or extremely challenging.

- AI data center power demand could grow more than thirtyfold by 2035, making early power planning the single most important decision in any buildout.

- Grid interconnection delays of three to seven years in major markets are forcing CTOs to rethink traditional procurement and pursue alternative energy strategies.

- Integrated energy campus development, combining land, power generation, and grid access from day one, offers the fastest and most reliable path to operational AI facilities.

- Organizations that secure power infrastructure now will define competitive positioning in the AI economy; those that wait face increasingly constrained options.

If you’re a CTO planning AI capacity, your first hire isn’t an engineer. It’s an energy strategist.

Power used to be an afterthought in data center planning. You picked your location based on fiber connectivity and real estate costs, filed a utility request, and moved on. That era is over. A recent industry survey of 120 U.S. data center and power company executives found that 72% now consider power and grid capacity constraints “very or extremely challenging” for AI-ready infrastructure buildouts. AI workloads are consuming power at rates the traditional grid was never designed to handle.

For CTOs evaluating infrastructure for AI investments, power planning has become a core competency. The decisions you make around energy sourcing, site selection, and grid access will determine whether your AI capabilities launch on schedule or sit in a queue for years.

Why Does AI-Ready Infrastructure Demand a New Power Strategy?

Traditional data centers operated on a simple power model. You needed 10 to 15 kilowatts per rack, your local utility could provision it within a reasonable timeframe, and capacity planning was incremental. AI workloads have shattered every assumption underlying that model.

A single AI training rack can demand 50 to 150 kilowatts, with some next-generation GPU configurations pushing well beyond that. Multiply across hundreds or thousands of racks in a hyperscale facility, and you’re looking at power requirements rivaling small cities. Several hyperscalers have announced campus plans targeting 2 gigawatts or more of capacity, quadruple the size of the largest facilities currently operational.
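A rough way to see how rack density scales into facility demand is to multiply rack count by per-rack load, then by PUE (power usage effectiveness, the ratio of total facility power to IT power). The Python sketch below is illustrative only. The 2,000-rack hall and the PUE of 1.3 are assumptions, not figures from this article, and `facility_power_mw` and `racks_for_budget` are hypothetical helper names.

```python
def facility_power_mw(racks: int, kw_per_rack: float, pue: float = 1.3) -> float:
    """Estimate total facility demand in megawatts.

    IT load = racks x kW per rack; facility load = IT load x PUE.
    The default PUE of 1.3 is an illustrative assumption.
    """
    if racks < 0 or kw_per_rack < 0:
        raise ValueError("racks and kw_per_rack must be non-negative")
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    it_load_kw = racks * kw_per_rack
    return it_load_kw * pue / 1000.0  # convert kW to MW


def racks_for_budget(budget_mw: float, kw_per_rack: float, pue: float = 1.3) -> int:
    """Invert the estimate: how many racks fit in a facility power budget (rounded down)."""
    return int(budget_mw * 1000.0 / (kw_per_rack * pue))


if __name__ == "__main__":
    # Same hypothetical 2,000-rack hall at traditional vs AI training densities.
    print(f"traditional: {facility_power_mw(2000, 12.5):.1f} MW")
    print(f"AI training: {facility_power_mw(2000, 100.0):.1f} MW")
    # How many 100 kW racks a 2 GW campus could host under these assumptions.
    print(racks_for_budget(2000.0, 100.0))
```

Under those assumptions, the same 2,000-rack footprint goes from about 32.5 MW at traditional density to about 260 MW at AI training density, and a 2 GW campus works out to roughly 15,000 racks at 100 kW each.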

What Makes AI Power Demands Different from Traditional Computing?

The distinction goes beyond raw wattage. AI workloads create continuous, high-density power demand that runs around the clock. Unlike traditional enterprise computing with fluctuating daily usage, model training and large-scale inference maintain near-peak consumption for weeks or months. This constant draw creates unique challenges for grid stability and energy procurement.
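One way to quantify the difference in load profile is the load factor, the ratio of average demand to peak demand. The sketch below compares the annual energy of a hypothetical 100 MW facility at two assumed load factors: 0.5 for a cyclical enterprise profile and 0.9 for near-continuous AI training. Both values are illustrative, not measured, and `annual_energy_gwh` is a hypothetical helper.

```python
HOURS_PER_YEAR = 8760  # non-leap year


def annual_energy_gwh(peak_mw: float, load_factor: float) -> float:
    """Annual consumption in GWh for a load with the given average-to-peak ratio.

    energy = peak MW x load factor x 8,760 h, converted from MWh to GWh.
    """
    if not 0.0 <= load_factor <= 1.0:
        raise ValueError("load_factor must be between 0 and 1")
    return peak_mw * load_factor * HOURS_PER_YEAR / 1000.0


if __name__ == "__main__":
    peak = 100.0  # hypothetical 100 MW facility
    # Assumed load factors: cyclical enterprise load vs near-constant AI training.
    for label, lf in [("cyclical enterprise", 0.5), ("continuous AI", 0.9)]:
        print(f"{label}: {annual_energy_gwh(peak, lf):.1f} GWh/yr")
```

At the same peak rating, the continuous profile consumes about 80% more energy per year (roughly 788 GWh versus 438 GWh), and it draws that energy around the clock rather than in daytime peaks.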

The power density issue compounds the total demand problem. When you pack GPU clusters drawing 80 kW or more per rack into a facility, standard power distribution architectures designed for 10 kW environments simply cannot keep up. You need electrical systems capable of handling startup surges, sustained loads, and rapid fluctuations without disrupting operations.

| Factor | Traditional Data Center | AI-Optimized Facility | Grid Impact | Energy Procurement |
|---|---|---|---|---|
| Power per rack | 10–15 kW | 50–150+ kW | Manageable with existing infrastructure | Standard utility contracts |
| Load profile | Variable, cyclical | Continuous, near-peak 24/7 | Requires dedicated upgrades | Hybrid strategies needed |
| Facility scale | 10–50 MW typical | 200 MW–2 GW planned | Major transmission work | On-site generation + grid |

How Is Grid Congestion Reshaping the AI Infrastructure Landscape?

Grid interconnection has become the single biggest bottleneck for AI-ready infrastructure deployment. In major data center markets, the wait time for new utility connections now stretches three to seven years. In power-constrained regions like Northern Virginia, some operators face even longer timelines when transmission upgrades are required.

The math is straightforward. A physical data center can be constructed in one to two years. But if the grid connection takes five to seven years, your facility sits idle while competitors with secured power capacity capture market share. This mismatch between construction speed and power delivery has fundamentally rewritten the site selection playbook.
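The mismatch can be expressed as a simple subtraction. This sketch assumes the building and the interconnection request start on the same day (a team that files for power only after breaking ground waits even longer), and it uses the ranges quoted above: one to two years to build and five to seven years to connect. `idle_months` is a hypothetical helper.

```python
def idle_months(construction_months: int, grid_months: int) -> int:
    """Months a completed building waits for power.

    Assumes construction and the interconnection request start together,
    so the wait is the amount by which the grid timeline exceeds the build.
    """
    return max(0, grid_months - construction_months)


if __name__ == "__main__":
    # Ranges from the text: build in 1-2 years, grid connection in 5-7 years.
    for build in (12, 24):
        for grid in (60, 84):
            print(f"build {build} mo, grid {grid} mo -> idle {idle_months(build, grid)} mo")
```

Even the most favorable pairing, a two-year build against a five-year connection, leaves the finished building without power for three years.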

What Should CTOs Prioritize When Planning Power for AI Data Centers?

Power planning for AI data centers requires a fundamentally different approach than traditional IT infrastructure procurement. CTOs need to think about energy the way they think about compute: as a strategic asset that determines competitive positioning.

Why Is Site Selection Now a Power Decision First?

The traditional site selection hierarchy prioritized proximity to population centers, fiber networks, and affordable real estate. Those factors still matter, but they’ve been subordinated to one overriding question: can you actually get power to this location, and how fast?

According to industry analysis, 84% of data center decision-makers now rank power availability among their top three site selection criteria. Powered land transactions in the 250 to 500 MW range have become the standard deal structure. The critical variable is no longer acreage or location but confirmed power availability and delivery timeline.

This shift has opened up secondary and tertiary markets that were previously overlooked. Regions in Texas, Ohio, and parts of the Southeast are attracting significant investment specifically because they offer faster paths to power. The CTO who fixates on a Tier 1 metro location while ignoring power timelines may end up years behind a competitor who chose a less prestigious site with reliable, fast energy access.
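Treating power as a gate rather than one weighted factor among many can be sketched as a filter followed by a sort. In this illustrative Python example, the `Site` fields, the 0-1 connectivity score, and every candidate name and number are hypothetical; a real evaluation would draw on the utility's interconnection study results.

```python
from dataclasses import dataclass


@dataclass
class Site:
    name: str
    confirmed_mw: float   # capacity the utility or developer has confirmed
    months_to_power: int  # realistic delivery timeline, including upgrades
    connectivity: float   # hypothetical 0-1 score for fiber and latency


def shortlist(sites: list[Site], required_mw: float, deadline_months: int) -> list[Site]:
    """Power acts as a gate; secondary factors only rank the sites that pass it."""
    viable = [
        s for s in sites
        if s.confirmed_mw >= required_mw and s.months_to_power <= deadline_months
    ]
    # Earliest power first; break ties with better connectivity.
    return sorted(viable, key=lambda s: (s.months_to_power, -s.connectivity))


if __name__ == "__main__":
    candidates = [
        Site("tier-1 metro", confirmed_mw=300, months_to_power=72, connectivity=0.95),
        Site("secondary market A", confirmed_mw=400, months_to_power=24, connectivity=0.70),
        Site("secondary market B", confirmed_mw=150, months_to_power=18, connectivity=0.80),
    ]
    for site in shortlist(candidates, required_mw=250, deadline_months=36):
        print(site.name)
```

Only the secondary-market site with 400 MW confirmed within 24 months passes the 250 MW, 36-month gate; the metro site's strong connectivity never enters the comparison because its power arrives too late.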

What Energy Mix Strategies Support AI Workloads?

No single power source meets every requirement of a large-scale AI deployment. The most effective strategies for AI-ready infrastructure combine multiple energy sources to balance reliability, scalability, and cost predictability.

Grid interconnection remains the foundation for most deployments, providing baseline capacity and the reliability that AI workloads demand. But relying exclusively on the grid exposes organizations to queue delays, rate volatility, and capacity constraints.

On-site resources such as solar generation, natural gas generation, and battery storage provide supplemental capacity that can bridge grid delays and improve energy security. Renewable sources offer long-term cost predictability through power purchase agreements spanning 15 to 25 years, protecting against fossil fuel price volatility.

Battery energy storage systems play an increasingly critical role, storing excess energy during low-demand periods and discharging during peaks or grid outages. These systems respond to load fluctuations within milliseconds, making them well suited for high-density workloads that experience rapid power demand changes.
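Sizing storage for peak shaving or outage ride-through comes down to power (MW) times duration (hours), inflated for the share of the battery the operator is willing to cycle and for discharge losses. The sketch below uses assumed defaults of 90% usable capacity and 95% one-way discharge efficiency; these are illustrative, not vendor specifications. The millisecond response time mentioned above is a property of the power electronics that this arithmetic does not model, and `bess_nameplate` is a hypothetical helper.

```python
def bess_nameplate(load_mw: float, hours: float,
                   usable_fraction: float = 0.9,
                   discharge_efficiency: float = 0.95) -> tuple[float, float]:
    """Return (power_mw, energy_mwh) of battery nameplate needed to carry a load.

    usable_fraction: share of nameplate energy the operator allows to be cycled.
    discharge_efficiency: one-way losses between the battery and the load.
    Both defaults are illustrative assumptions, not vendor figures.
    """
    if load_mw < 0 or hours < 0:
        raise ValueError("load_mw and hours must be non-negative")
    if not (0 < usable_fraction <= 1 and 0 < discharge_efficiency <= 1):
        raise ValueError("fractions must be in (0, 1]")
    power_mw = load_mw / discharge_efficiency  # battery-side power rating
    energy_mwh = load_mw * hours / (usable_fraction * discharge_efficiency)
    return power_mw, energy_mwh


if __name__ == "__main__":
    # Hypothetical: carry a 100 MW AI hall through 4 hours of peak or outage.
    power, energy = bess_nameplate(100.0, 4.0)
    print(f"{power:.1f} MW / {energy:.0f} MWh")
```

For a hypothetical 100 MW hall carried for four hours, this gives roughly 105 MW of battery-side power and about 468 MWh of nameplate energy.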

The key is matching the right energy approach to the specific requirements of each project. Some deployments benefit most from traditional grid interconnection, while others are better served by integrated energy campuses with on-site generation. The best strategy depends on location, timeline, capacity requirements, and long-term operational goals.
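How on-site generation, storage, and grid power combine can be illustrated with an hourly dispatch model. The sketch below uses a simple greedy order (on-site solar first, then the battery, then the grid as backstop) chosen for illustration. It ignores storage losses, tariffs, and gas generation, and the profiles and ratings in the example are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DispatchTotals:
    solar_mwh: float = 0.0
    battery_mwh: float = 0.0
    grid_mwh: float = 0.0
    curtailed_mwh: float = 0.0


def dispatch(load_mw: list[float], solar_mw: list[float],
             battery_mwh: float, battery_mw: float,
             initial_soc_mwh: float = 0.0) -> DispatchTotals:
    """Greedy hourly dispatch: solar first, then battery, then grid as backstop.

    Each step is one hour, so MW and MWh are numerically equal per step.
    Storage losses are ignored to keep the sketch short.
    """
    if len(load_mw) != len(solar_mw):
        raise ValueError("load and solar profiles must have the same length")
    if not 0.0 <= initial_soc_mwh <= battery_mwh:
        raise ValueError("initial state of charge must be within battery capacity")
    soc = initial_soc_mwh
    totals = DispatchTotals()
    for load, solar in zip(load_mw, solar_mw):
        served_by_solar = min(load, solar)
        surplus = solar - served_by_solar
        # Store surplus solar, limited by the power rating and remaining room.
        charge = min(surplus, battery_mw, battery_mwh - soc)
        soc += charge
        deficit = load - served_by_solar
        # Discharge to cover the shortfall, limited by rating and stored energy.
        discharge = min(deficit, battery_mw, soc)
        soc -= discharge
        totals.solar_mwh += served_by_solar
        totals.battery_mwh += discharge
        totals.grid_mwh += deficit - discharge
        totals.curtailed_mwh += surplus - charge
    return totals


if __name__ == "__main__":
    # Hypothetical 4-hour profile for a flat 100 MW load.
    print(dispatch([100, 100, 100, 100], [0, 150, 150, 0], battery_mwh=50, battery_mw=50))
```

In the example, solar covers 200 MWh, the battery shifts 50 MWh of midday surplus into the final hour, the grid supplies the remaining 150 MWh, and 50 MWh of solar is curtailed because the battery is already full. Changing `battery_mwh` or `battery_mw` shows how storage sizing trades grid draw against curtailment.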

How Are Leading Organizations Solving the Power Challenge?

The organizations succeeding in AI data center deployment share a common trait: they treat power as a first-order strategic concern, not a downstream operational detail.

What Is the Energy Campus Model?

The energy campus concept represents a fundamental rethinking of how infrastructure for AI gets built. Rather than developing a data center and then trying to connect it to power, the campus model integrates land acquisition, power generation, energy storage, and grid interconnection into a single, coordinated development from day one.

This integrated approach addresses the core bottleneck by ensuring power infrastructure develops in parallel with computing infrastructure. Energy campuses typically co-locate renewable generation, battery storage, and grid connections on a single site, creating redundancy and flexibility that grid-only approaches cannot match. They also provide the scalability that AI operators need, because adding generation capacity on a campus you already control is significantly faster than negotiating new utility agreements or waiting in interconnection queues.
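The scheduling advantage of the campus model can be made concrete with a small critical-path calculation, in which each task starts only when its prerequisites finish. The task names and durations below are hypothetical, chosen only to contrast a build-then-connect sequence with a campus where power work starts alongside construction. `finish_month` is a hypothetical helper and assumes the dependency graph has no cycles.

```python
from typing import Optional, Sequence


def finish_month(durations: dict[str, int],
                 deps: dict[str, list[str]],
                 targets: Optional[Sequence[str]] = None) -> int:
    """Critical-path finish time: a task starts when all its prerequisites finish.

    Assumes the dependency graph is acyclic; returns the latest finish among targets.
    """
    finish: dict[str, int] = {}

    def finish_time(task: str) -> int:
        if task not in finish:
            start = max((finish_time(d) for d in deps.get(task, [])), default=0)
            finish[task] = start + durations[task]
        return finish[task]

    wanted = targets if targets is not None else list(durations)
    return max(finish_time(t) for t in wanted)


# Hypothetical durations in months; none of these come from the article.
DURATIONS = {"land": 6, "data_hall": 18, "onsite_gen": 24, "grid": 60}

# Build first, then pursue power: the interconnection waits on the building.
SEQUENTIAL = {"data_hall": ["land"], "grid": ["data_hall"]}
# Campus model: power work starts as soon as the land is secured.
CAMPUS = {"data_hall": ["land"], "onsite_gen": ["land"], "grid": ["land"]}

if __name__ == "__main__":
    print("sequential, grid power:", finish_month(DURATIONS, SEQUENTIAL, ["data_hall", "grid"]))
    print("campus, grid power:", finish_month(DURATIONS, CAMPUS, ["data_hall", "grid"]))
    print("campus, on-site power:", finish_month(DURATIONS, CAMPUS, ["data_hall", "onsite_gen"]))
```

Under these invented durations, full grid power arrives at month 84 on the sequential path and month 66 on the campus, while on-site generation could energize a first phase around month 30.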

Five Critical Power Planning Steps for CTOs

CTOs building infrastructure to support high-density workloads need a structured approach to power planning that accounts for the unique demands and constraints of AI computing. The following steps provide a framework for making informed energy decisions.

- Audit your actual power requirements. Model your workload projections for the next five to ten years, accounting for growth well beyond current needs. AI power demand scales non-linearly, and underestimating future requirements is the most expensive mistake you can make.

- Evaluate grid capacity before committing to a site. Request detailed interconnection studies from local utilities before signing any real estate agreements. Understand the realistic timeline for power delivery, including any required transmission upgrades.

- Develop a hybrid energy strategy. Plan for a combination of grid power, on-site renewable generation, and energy storage from the start. This approach provides redundancy, faster deployment, and long-term cost predictability.

- Engage energy infrastructure partners early. The most successful deployments involve energy development partners from the earliest planning stages. Partners with land acquisition, permitting, and power development expertise can identify opportunities and accelerate timelines that pure IT organizations would miss.

- Build for flexibility. Design power infrastructure that can accommodate evolving AI hardware requirements. Next-generation chips may change power delivery characteristics, and your infrastructure needs the flexibility to adapt without major reconstruction.
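The first step, projecting demand five to ten years out, can start from a compound-growth model, which is one simple way to capture non-linear growth. In the sketch below, the 50 MW starting load, the 25% annual growth rate, and the 2.5x capacity buffer are all assumptions for illustration, and the helper names are hypothetical; a real forecast would be built from specific workload and hardware roadmaps.

```python
from typing import Optional


def project_demand_mw(current_mw: float, annual_growth: float, years: int) -> list[float]:
    """Compound-growth projection: demand in year y = current x (1 + growth)^y."""
    return [current_mw * (1 + annual_growth) ** y for y in range(years + 1)]


def first_shortfall_year(current_mw: float, annual_growth: float,
                         planned_mw: float, years: int) -> Optional[int]:
    """First year projected demand exceeds planned capacity, or None within the horizon."""
    for year, demand in enumerate(project_demand_mw(current_mw, annual_growth, years)):
        if demand > planned_mw:
            return year
    return None


if __name__ == "__main__":
    current = 50.0            # hypothetical current demand, MW
    planned = 2.5 * current   # assumed capacity buffer of 2.5x today's load
    growth = 0.25             # assumed 25% annual growth
    print(first_shortfall_year(current, growth, planned, years=10))
```

With these inputs, demand first exceeds the planned 125 MW in year 5, well inside the five-to-ten-year horizon the step recommends.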

What Are the Biggest Risks of Getting Power Planning Wrong?

The consequences of inadequate power planning extend far beyond project delays. They can fundamentally compromise an organization’s AI strategy.

How Do Delays Impact Competitive Positioning?

In AI, speed to deployment correlates directly with market advantage. Every month your infrastructure sits waiting for power is a month your competitors are training models, serving customers, and capturing data that improves their products. Early movers accumulate compounding advantages that become increasingly difficult to overcome.

| Risk Factor | Impact | Mitigation Strategy |
|---|---|---|
| Grid interconnection delays | 3–7 year timeline extensions | Evaluate alternative power strategies and secondary markets |
| Power capacity shortfall | Inability to scale AI operations | Plan for 2–3x current demand in capacity projections |
| Single-source energy dependency | Vulnerability to outages and rate increases | Develop hybrid energy mix with on-site generation |
| Regulatory changes | New compliance requirements and costs | Partner with experienced energy developers who track policy |
| Stranded infrastructure | Facilities built without adequate power | Conduct power feasibility assessments before site commitment |

Why Can’t You Afford to Wait?

The power availability landscape is tightening. As more organizations pursue AI capabilities, competition for available grid capacity intensifies. Utility queues grow longer. Favorable sites with fast power access get claimed. The federal government has recognized that electricity access has become a strategic constraint on national AI competitiveness, launching initiatives specifically aimed at accelerating power delivery for large-scale computing.

Organizations that secure reliable power infrastructure today position themselves to scale AI operations rapidly as opportunities emerge. Grid-connected capacity is expanding too slowly to match AI demand, making customer-sited energy resources and hybrid strategies essential for organizations that cannot afford to wait.

CTOs who move decisively on energy infrastructure planning will build the foundation for sustained competitive advantage. Those who delay will spend years trying to catch up.

FAQ

How much power does an AI data center need compared to a traditional facility?

AI data centers typically require 50 to 150 kilowatts per rack, compared to 10 to 15 kilowatts for traditional computing. At the facility level, large-scale AI deployments can demand 200 MW to 2 GW of total capacity, compared with the 10 to 50 MW typical of conventional enterprise facilities.

Why are grid interconnection delays so long for AI data centers?

Utility transmission infrastructure was designed for gradual load growth, not sudden massive demand increases. Interconnection studies involve extensive engineering analysis, and many regions require significant transmission upgrades before new large-scale loads can connect. These factors combine to create three to seven year wait times in many markets.

What is a powered land transaction?

A powered land transaction involves acquiring land with confirmed power availability and a defined delivery timeline. Rather than purchasing raw land and separately pursuing utility connections, powered land bundles site acquisition with energy infrastructure, significantly reducing time to operation.

Can renewable energy reliably power AI workloads that run 24/7?

Yes, when properly designed. Hybrid renewable systems combining solar, wind, and battery storage can provide continuous clean power. These are often supplemented by grid connections and natural gas generation to provide firm backup capacity. The key is designing the energy mix to match the specific capacity and uptime requirements of each deployment.

Build Your AI-Ready Infrastructure on a Solid Power Foundation

At its core, building AI data centers is a power planning challenge. The compute, the networking, the software stack: all of it depends on having reliable, scalable, and cost-effective energy delivered where and when you need it. CTOs who recognize this reality and plan accordingly will lead the next wave of AI innovation.

Hanwha Data Centers specializes in developing the foundational energy infrastructure that makes AI-ready facilities possible, from strategic land acquisition and site development to power generation and grid interconnection. If you’re planning infrastructure for AI that needs to be operational fast, start a conversation with our team about building your power strategy from the ground up.