Key Takeaways

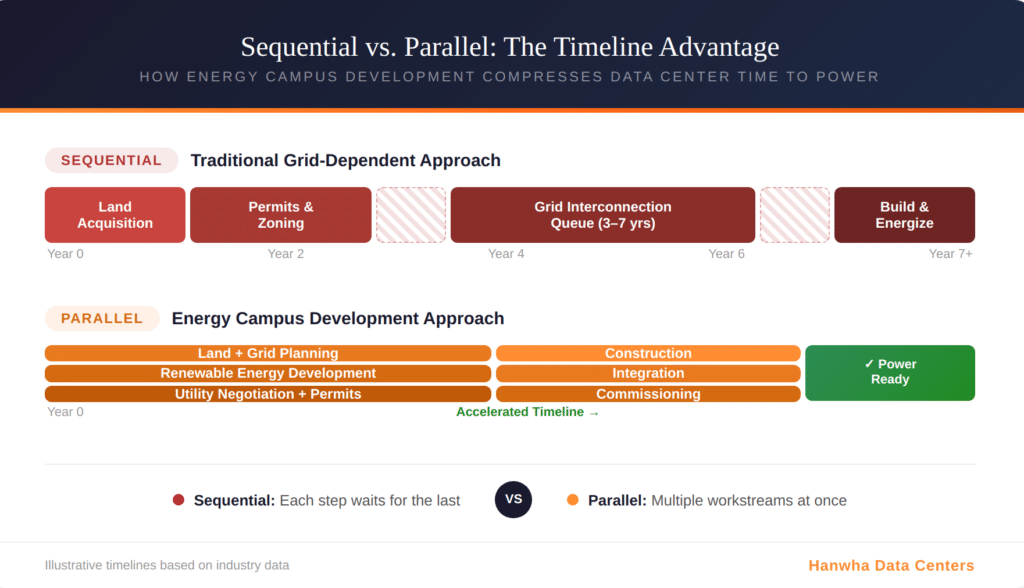

Energy campuses are cutting data center time to power from years to a fraction of the traditional timeline, giving early movers a decisive edge in the AI infrastructure race.

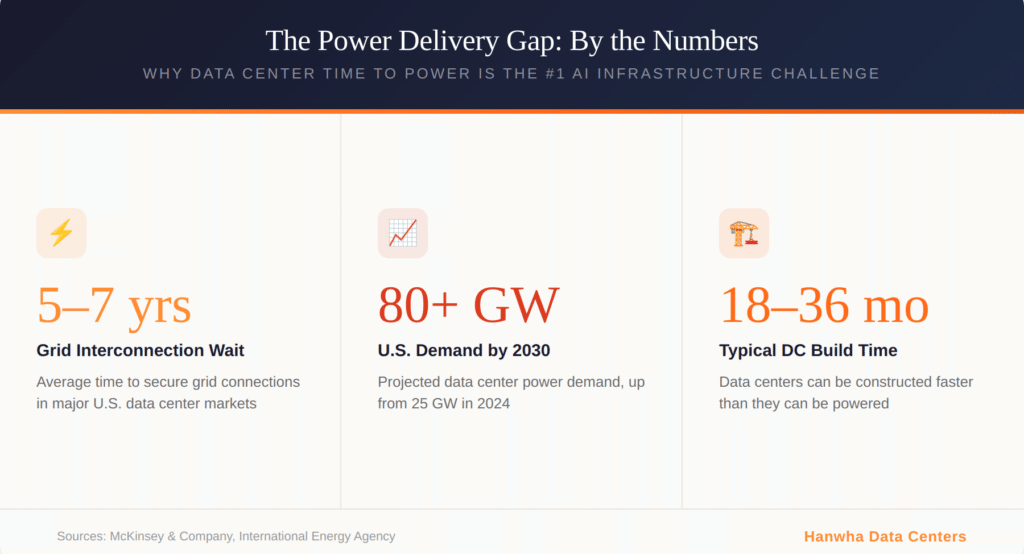

- Grid interconnection queues now stretch five to seven years in major markets, creating an urgent need for alternative power delivery strategies.

- Behind-the-meter generation and on-site renewables are enabling faster power readiness for AI-scale facilities.

- U.S. data center power demand is projected to grow from 25 GW in 2024 to over 80 GW by 2030, making speed a competitive necessity.

Organizations that treat power infrastructure as a first-move priority will define the next decade of AI competitive positioning.

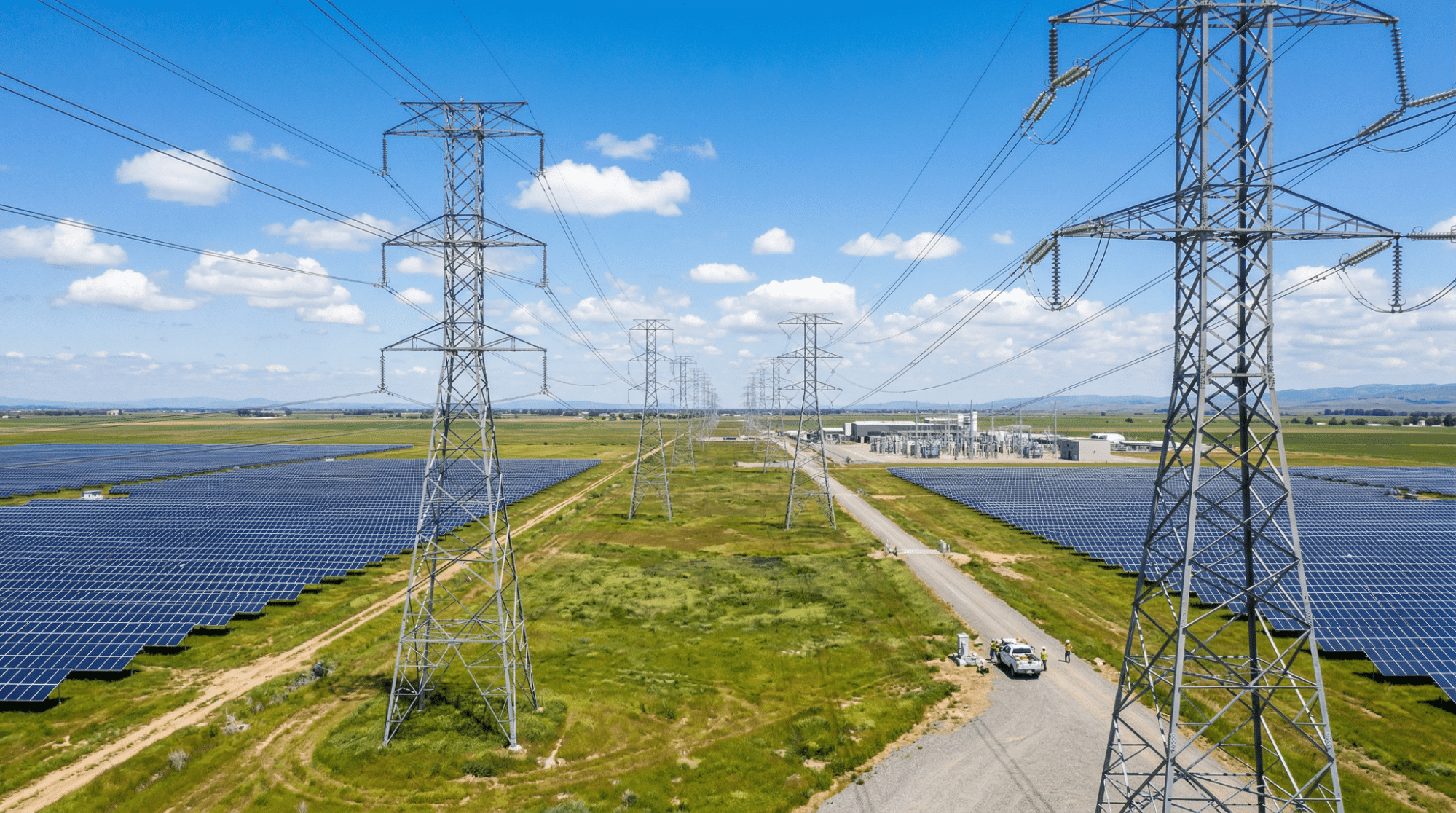

Every major AI initiative eventually hits the same wall: power. The compute hardware is available. The software frameworks are mature. The talent exists. But none of it matters without electricity delivered at scale, reliably, and on a timeline that matches business reality. According to McKinsey’s energy demand analysis, U.S. data center power demand is projected to surge from 25 GW in 2024 to more than 80 GW by 2030, more than tripling in six years. The existing grid cannot respond at that pace. The result? Data center time to power has become the single most important variable in AI infrastructure planning.
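As a rough check on the scale of that projection, the sketch below computes the annual growth rate implied by moving from 25 GW to 80 GW. It assumes smooth compounding over the six years from 2024 to 2030, which is a simplification, and the function name is illustrative rather than taken from the cited analysis.

```python
def implied_cagr(start_gw: float, end_gw: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    return (end_gw / start_gw) ** (1 / years) - 1

# Endpoints come from the projection above; smooth compounding is an assumption.
growth = implied_cagr(start_gw=25, end_gw=80, years=2030 - 2024)
print(f"Implied growth: {growth:.1%} per year")
```

Under that assumption, demand would need to grow by roughly 21% every year, well beyond the gradual load growth utilities have historically planned for.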

Traditional grid interconnection processes were designed for gradual, predictable load growth. They were never built to accommodate dozens of gigawatt-scale requests arriving simultaneously. The gap between when a facility is physically constructed and when it actually receives power has widened into a chasm that threatens AI deployment timelines across the industry.

Why Is Data Center Time to Power the Biggest Bottleneck in AI?

Power scarcity has moved from a secondary concern to the primary constraint limiting hyperscale growth. A few years ago, site selection centered on fiber connectivity, proximity to end users, and real estate costs. Today, the question that dominates every development conversation is simple: how fast can you get power to the site?

How Long Do Grid Interconnection Delays Actually Take?

In Northern Virginia, new facilities now face wait times of up to seven years for adequate grid connections. That’s up from a previous average of roughly four years. The problem isn’t unique to Virginia. Across every major ISO in the United States, interconnection approval timelines are stretching to five to seven years. CenterPoint Energy in Texas reported a 700% increase in large load requests between late 2023 and late 2024, jumping from 1 GW to 8 GW.

Data centers typically need to be built and operational within 18 to 36 months to meet market needs. At five to seven years, grid interconnection takes roughly two to five times as long. This mismatch between construction speed and power delivery speed is the defining infrastructure challenge of the AI era.
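The size of the mismatch depends on which ends of the two ranges are compared. The short sketch below works through the arithmetic using only the figures quoted in this section; the variable names are illustrative.

```python
# Ranges quoted in this section, converted to months.
build_window_months = (18, 36)               # build-to-operation window
interconnection_months = (5 * 12, 7 * 12)    # five to seven years

# Compare the extremes: shortest wait vs. longest build, then the reverse.
lowest_ratio = interconnection_months[0] / build_window_months[1]
highest_ratio = interconnection_months[1] / build_window_months[0]

print(f"Interconnection takes {lowest_ratio:.1f}x to {highest_ratio:.1f}x the build window")
```

Even in the most favorable pairing, the wait for power outlasts the construction schedule by well over a year.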

What Makes AI Power Demand Different?

Traditional data centers might draw 30 megawatts of continuous power. Modern AI training facilities require 200 megawatts or more, with individual GPU racks demanding 80 to 150 kilowatts compared to 15 to 20 kilowatts in conventional environments. The International Energy Agency projects that global data center electricity consumption will more than double to approximately 945 terawatt-hours by 2030, growing around 15% per year. AI workloads are the primary driver, requiring sustained high-density power delivery that legacy grid infrastructure was never designed to support.
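To put the rack densities in perspective, the sketch below estimates how many racks each facility type could feed. It deliberately assumes every kilowatt reaches the racks, ignoring cooling and other overhead, so the counts are upper bounds rather than design figures. The helper name is illustrative.

```python
def racks_supported(facility_mw: float, rack_kw: float) -> int:
    """Whole racks a facility could feed if every kilowatt reached the racks."""
    return int(facility_mw * 1000 // rack_kw)

# Figures quoted above: facility size in MW, rack density range in kW.
scenarios = {
    "conventional, 30 MW": (30, (15, 20)),
    "AI training, 200 MW": (200, (80, 150)),
}

for label, (facility_mw, (low_kw, high_kw)) in scenarios.items():
    most = racks_supported(facility_mw, low_kw)
    fewest = racks_supported(facility_mw, high_kw)
    print(f"{label}: about {fewest:,} to {most:,} racks")
```

Under these assumptions the two facility types feed a broadly similar number of racks. The difference lies in how much power each rack draws, which is why AI demand scales so much faster than floor space.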

How Does the Energy Campus Model Reduce Time to Power?

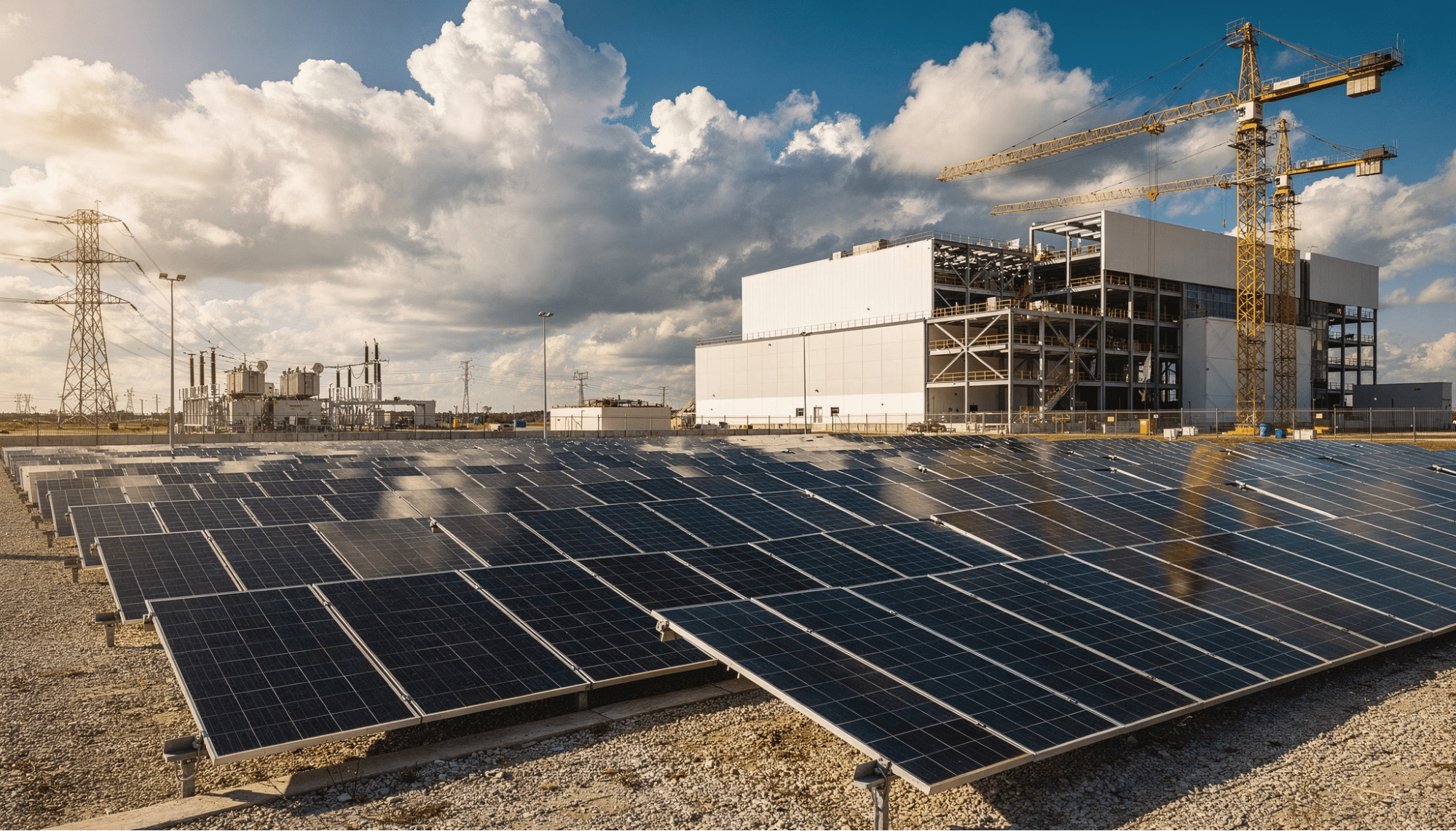

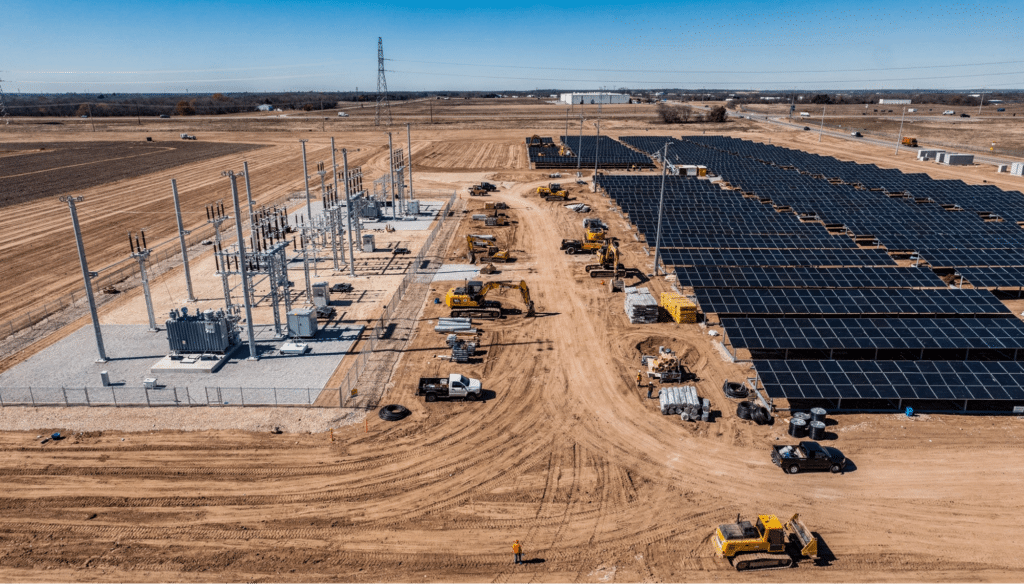

The energy campus model represents a fundamentally different approach to solving the power delivery challenge. Instead of acquiring land and then entering a years-long queue for grid access, this approach integrates power infrastructure into site development from the very beginning. The result is accelerated power readiness through parallel workflows that compress what used to be sequential processes. Both grid interconnection and energy campus strategies play important roles depending on market conditions, timeline requirements, and project scale.

Matching Power Delivery Strategy to Project Requirements

| Project Factor | Grid Interconnection Strengths | Energy Campus Strengths |
| --- | --- | --- |
| Timeline Sensitivity | Best when queue capacity is available in the target market | Best when deployment speed is the top priority |
| Power Scale | Ideal for projects that benefit from deep grid capacity at scale | Ideal for phased buildouts that scale with demand |
| Location Flexibility | Suited to markets with established utility infrastructure | Opens secondary and emerging markets with renewable resources |
| Energy Strategy | Leverages existing generation and transmission assets | Integrates on-site renewables with grid connections |
| Optimal Use Case | Regions with available capacity and efficient utility processes | Markets with constrained queues or where parallel workflows accelerate delivery |

What Does Parallel Development Look Like?

Traditional development follows a rigid, sequential process. You acquire land. You negotiate with the utility. You enter the interconnection queue. You wait. Then you build. Each step depends on the one before it, and a delay anywhere pushes the entire project back.

Energy campus development flips this by executing multiple workstreams simultaneously. Grid interconnection planning begins during initial site selection, not after it. Renewable generation capacity develops in parallel with facility design. Utility relationships are established before land acquisition closes, ensuring power delivery timelines are understood from day one. This parallel approach doesn’t eliminate grid timelines entirely, but it dramatically reduces the gap between breaking ground and receiving power.
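The difference between the two approaches can be sketched as a scheduling problem. In the sequential model, total time is the sum of every step. In the parallel model, it is the longest chain of dependent steps. All durations and dependencies below are hypothetical placeholders chosen for illustration, not figures from any real project.

```python
from functools import lru_cache

# Hypothetical durations in months; illustrative only.
DURATION = {
    "land_acquisition": 6,
    "utility_negotiation": 6,
    "interconnection": 48,
    "construction": 24,
}

# Sequential model: each step waits for the one before it.
sequential_months = sum(DURATION.values())

# Parallel model: each task lists only the tasks that must finish first.
DEPENDS_ON = {
    "land_acquisition": [],
    "utility_negotiation": [],          # starts during site selection
    "interconnection": ["utility_negotiation"],
    "construction": ["land_acquisition"],
}

@lru_cache(maxsize=None)
def finish_month(task: str) -> int:
    """Earliest month a task can finish, given its prerequisites."""
    start = max((finish_month(dep) for dep in DEPENDS_ON[task]), default=0)
    return start + DURATION[task]

# The project is done when its slowest chain of tasks is done.
parallel_months = max(finish_month(task) for task in DURATION)

print(f"Sequential: {sequential_months} months")
print(f"Parallel:   {parallel_months} months")
```

With these placeholder numbers the interconnection chain still sets the finish date, which matches the point above: parallel work does not remove the grid timeline, but it stops every other step from adding to it.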

What Strategies Are Driving Fast Infrastructure Deployment?

Several converging strategies enable organizations to move from site acquisition to operational power more quickly. Each addresses a different dimension of the power delivery challenge.

Behind-the-Meter Generation

Behind-the-meter (BTM) generation allows operators to produce power on-site, reducing or eliminating dependence on congested grid infrastructure. BTM systems, whether solar, fuel cells, or battery storage, can begin delivering power while longer-term grid interconnection processes move forward in the background. This hybrid approach addresses the fundamental mismatch between data center construction speed and grid delivery speed.

Strategic Site Selection for Power Readiness

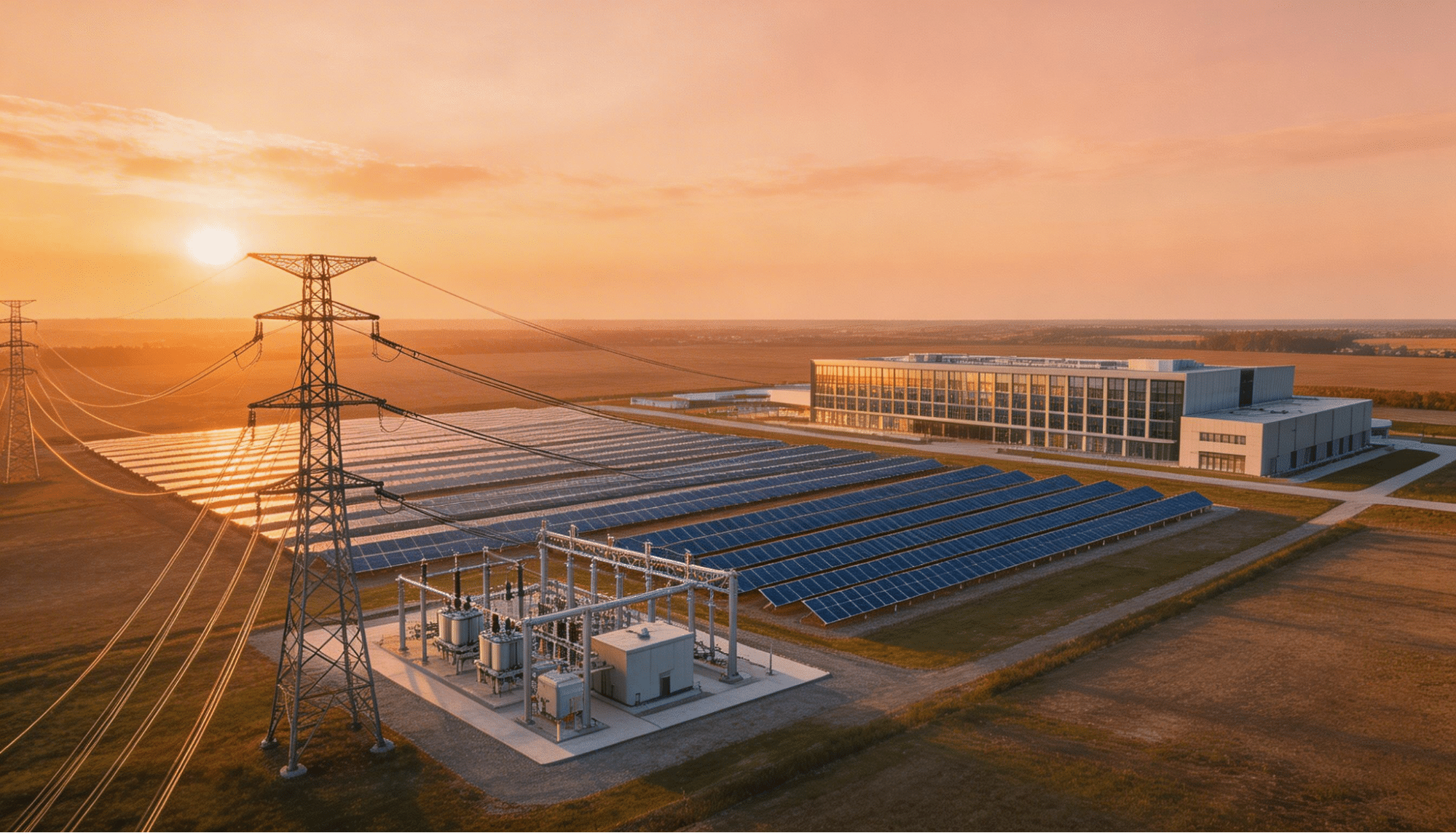

The most important decision in reducing time to power happens before a single shovel hits the ground. Power availability now drives site selection more than any other factor. Sites are evaluated based on existing transmission capacity, substation headroom, renewable resource availability, and the speed at which local utilities can process requests. Powered land, where energy infrastructure is already developed, commands significant premiums precisely because it compresses the path to operations.

The World Resources Institute’s analysis of data center electricity demand confirms that regional variations in grid capacity create dramatically different power delivery timelines. Developers who understand these dynamics can select sites where timelines align with their deployment schedules.

5 Key Elements of an AI Data Center Buildout Designed for Speed

Successfully compressing power delivery timelines requires coordinated execution across several infrastructure domains.

1. Pre-Secured Grid Interconnection Agreements

Sites with existing or in-progress interconnection agreements eliminate the most time-consuming variable in the development process. Developers who begin grid negotiations during land acquisition can align construction schedules with utility timelines rather than adapting reactively.

2. On-Site Renewable Energy Generation

Solar arrays and battery storage systems can be deployed faster than grid upgrades in many markets. When integrated into the campus design from the outset, renewable energy provides both interim and long-term power capacity, addressing sustainability goals while improving deployment timelines.

3. Modular, Phased Capacity Planning

Rather than building for peak capacity from day one, effective strategies deploy power infrastructure in phases. Initial capacity supports first-wave operations, with subsequent phases scaling alongside demand. This avoids the capital overcommitment and delays associated with delivering maximum capacity at launch.
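A minimal sketch of phased planning follows, assuming a hypothetical demand forecast and a fixed phase size. Both are invented for illustration. It compares the capacity installed each year under a phased plan with the idle capacity that would result from building everything at launch.

```python
import math

# Hypothetical demand forecast (MW) and phase size; illustrative only.
forecast_mw = {2026: 40, 2027: 90, 2028: 160, 2029: 200}
PHASE_MW = 50

def phased_capacity(demand_mw: float, phase_mw: float) -> int:
    """Smallest whole number of phases that covers demand, in MW."""
    return math.ceil(demand_mw / phase_mw) * phase_mw

installed = {year: phased_capacity(mw, PHASE_MW) for year, mw in forecast_mw.items()}
peak_mw = max(installed.values())

for year, demand in forecast_mw.items():
    idle_phased = installed[year] - demand
    idle_upfront = peak_mw - demand
    print(f"{year}: installed {installed[year]} MW, "
          f"idle {idle_phased} MW (vs. {idle_upfront} MW if built upfront)")
```

In this example the phased plan never carries more than one phase of idle capacity, while an upfront build would leave most of the capacity unused in the first year.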

4. Utility Relationship Development

The speed at which a local utility processes interconnection requests varies enormously by region. Developers with established partnerships and transparent load forecasts can navigate interconnection processes more efficiently than new entrants requesting unprecedented capacity.

5. Integrated Water, Fiber, and Utility Planning

Power is the dominant constraint, but fast infrastructure deployment requires that water supply, fiber connectivity, and zoning approvals move in parallel with energy development. Any single infrastructure gap can delay an otherwise power-ready site.

How Do Regional Grid Conditions Affect Power Delivery Speed?

Regional grid conditions, utility processes, and existing infrastructure create vastly different timelines for powering a new data center. Understanding these regional dynamics is essential for any organization pursuing AI workloads.

Regional Grid Interconnection Conditions for Data Centers

| Region | Queue Backlog | Key Challenge | Opportunity |
| --- | --- | --- | --- |
| Northern Virginia (PJM) | Severe; up to 7 years | Grid saturation, transmission limits | Brownfield retrofits, BTM power |
| Texas (ERCOT) | 137+ GW pending requests | Volume overwhelms processing | Strong renewables, retail energy |
| Southeast U.S. | Moderate but growing | Limited DC infrastructure | Abundant solar, available land |
| Midwest | Moderate | Transmission gaps | Strong wind, lower land costs |

Each region presents a different equation for power delivery speed. Markets with severe backlogs push operators toward on-site generation and campus-based energy strategies that reduce dependence on traditional grid timelines.

What Role Does Renewable Energy Play in Reducing Data Center Time to Power?

Renewable energy has evolved from an environmental preference to a practical deployment accelerator. Solar and battery storage systems can often be permitted, constructed, and energized faster than the transmission upgrades required for traditional grid connections. This speed advantage is transforming how developers think about renewable integration, shifting it from a sustainability checkbox to a core component of their deployment strategy.

On-site solar paired with battery energy storage provides multiple advantages. It delivers interim power capacity while grid connections are finalized. It reduces the total load that must be sourced from the grid, potentially simplifying interconnection requirements. And it provides long-term price stability that protects against wholesale energy cost volatility. Industry project data shows that solar and storage installations can typically reach commercial operation within 18 to 24 months, compared to five or more years for grid infrastructure upgrades. Hybrid power models combining grid connections with on-site generation are becoming the standard for large-scale AI facilities.

Frequently Asked Questions

What is data center time to power?

Data center time to power refers to the duration between when a site is identified or construction begins and when the facility actually receives operational electricity. This includes grid interconnection approval, transmission upgrades, substation construction, and final energization. In many U.S. markets, this process now takes three to seven years through traditional grid pathways.

Why are grid interconnection queues so long?

The surge in AI-driven demand has created unprecedented volumes of large-load interconnection requests. Many grids lack the transmission capacity to support dozens of gigawatt-scale facilities. Equipment supply chains for transformers and switchgear face multi-year backlogs. And engineering studies have grown more complex as grids become more congested.

How does energy campus development reduce time to power?

The campus-based approach compresses timelines by executing land acquisition, power infrastructure, utility negotiations, and renewable deployment in parallel rather than sequentially. By integrating on-site generation and securing interconnection agreements early, these campuses achieve operational readiness significantly faster than traditional approaches.

Can renewable energy alone power an AI data center?

Most large-scale AI facilities use hybrid power models combining renewable generation with grid connections and energy storage. Solar and battery systems provide substantial capacity and deploy more quickly than grid upgrades, but 24/7 reliability for mission-critical workloads typically requires a diversified energy portfolio.

Start Building Your Power Advantage Today

The organizations that will lead the AI economy over the next decade are making power infrastructure decisions right now. Every month spent in a grid interconnection queue is a month that competitors are deploying capacity and capturing market share. The shift toward campus-based development, powered land strategies, and parallel infrastructure workflows represents a permanent change in how AI data center buildout gets done.

Hanwha Data Centers specializes in developing energy campus infrastructure that delivers powered land solutions designed for the unique demands of AI deployments. To explore how integrated energy infrastructure can accelerate your path to power, connect with the team today.