Key Takeaways

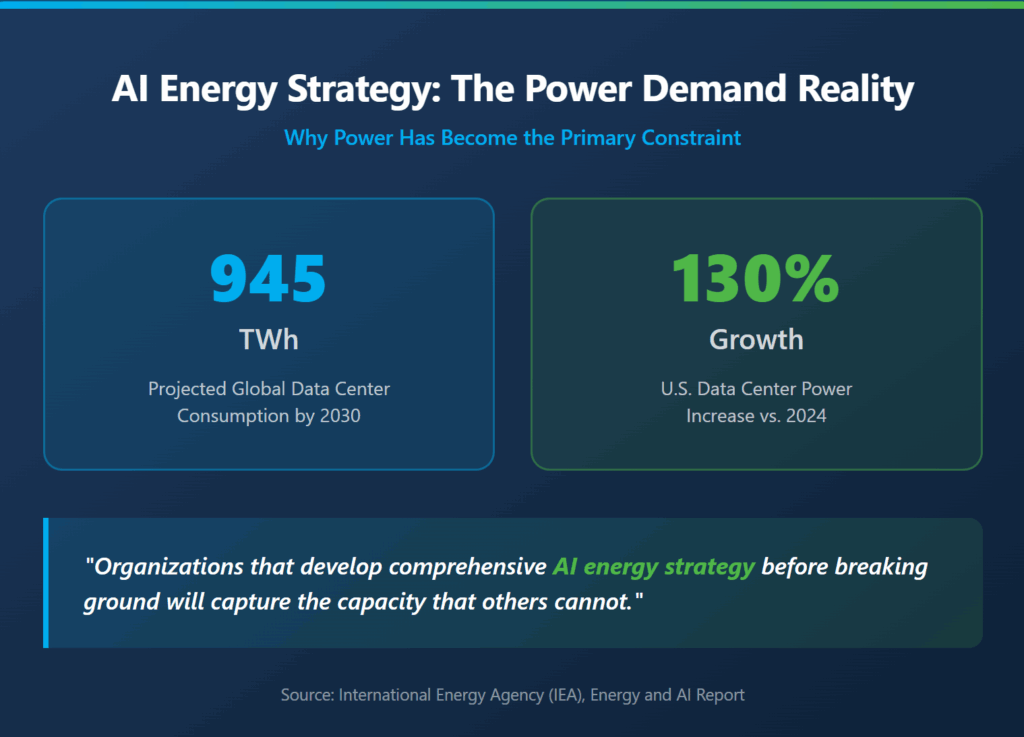

Power availability now determines AI deployment timelines more than any other factor.

- Global data center electricity consumption is projected to more than double to around 945 TWh by 2030, with AI workloads accounting for nearly half of all net new demand

- Interconnection delays of five or more years are derailing projects that fail to secure power access during site selection

- Geographic diversification and renewable energy integration enable faster deployment by bypassing traditional grid bottlenecks

- Operators who treat energy infrastructure as foundational rather than operational gain significant competitive advantages

Organizations that develop a comprehensive AI energy strategy before breaking ground will capture the capacity that others cannot.

Why Has AI Energy Strategy Become the Primary Success Factor?

The constraint that matters most for hyperscale AI deployment has shifted. Land is available. Capital is flowing. Chips are shipping. Power, however, remains stubbornly difficult to secure at the scale modern AI operations demand.

According to the International Energy Agency, data center electricity consumption grew at 12% annually over the past five years, with AI accelerating this trajectory dramatically. The agency projects global consumption will reach 945 TWh by 2030, representing nearly 3% of total worldwide electricity demand. In the United States alone, consumption is expected to increase by 130% compared to 2024 levels.
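These growth figures compound quickly. As a rough illustration, the sketch below applies the standard compound-growth formula to the 12% annual rate and the 130% U.S. increase cited above. The function names are illustrative, and treating 2024 to 2030 as a six-year window is an assumption.

```python
def compound_multiplier(annual_rate: float, years: int) -> float:
    """Total growth multiple after compounding annual_rate for the given years."""
    return (1.0 + annual_rate) ** years


def implied_annual_rate(total_multiple: float, years: int) -> float:
    """Constant annual growth rate that produces total_multiple over the given years."""
    return total_multiple ** (1.0 / years) - 1.0


# 12% a year sustained for five years (figure cited above)
five_year_multiple = compound_multiplier(0.12, 5)

# A 130% increase means 2.3x the 2024 level; the 6-year window is an assumption
us_implied_rate = implied_annual_rate(2.30, 6)

print(f"12%/yr over 5 years: {five_year_multiple:.2f}x")
print(f"Implied U.S. annual growth: {us_implied_rate:.1%}")
```

Under these assumptions, five years at 12% is roughly a 1.76x increase, and a 130% rise over six years works out to about 15% per year.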

This fundamental shift explains why AI energy strategy has moved from an operational consideration to a foundational requirement. Operators who approach power as something to figure out after site selection routinely discover that their timelines extend by years and their budgets balloon by hundreds of millions of dollars.

The hyperscalers leading AI deployment have already recognized this reality. Their strategies now treat power sourcing, grid interconnection, and energy campus development as prerequisites rather than afterthoughts. For operators seeking to compete in this environment, the hyperscalers' approach offers a roadmap worth studying.

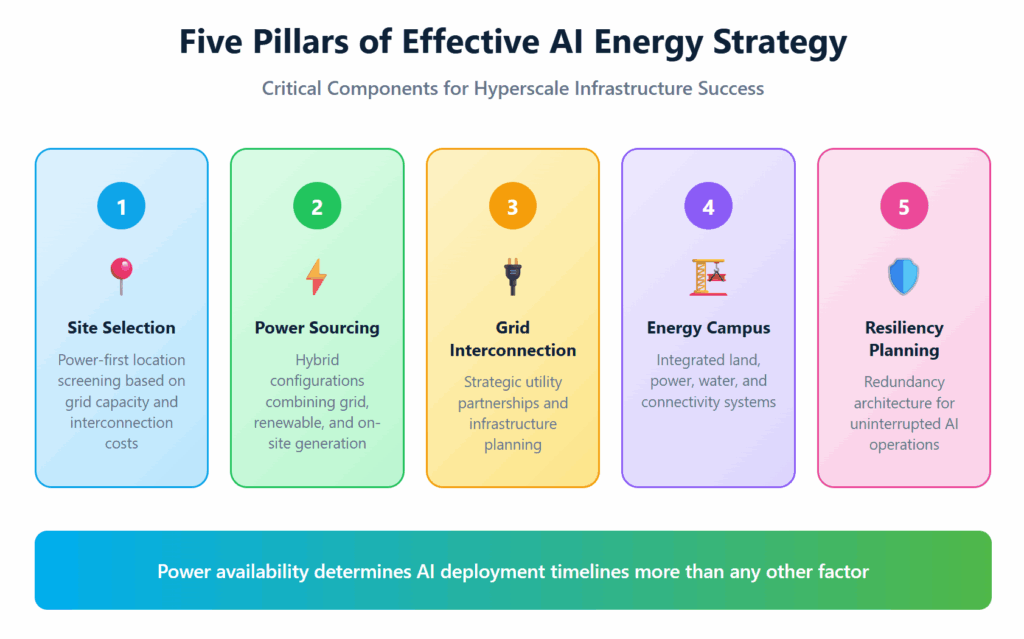

What Does an Effective AI Energy Strategy Actually Include?

An effective AI energy strategy addresses the complete chain of decisions from site identification through sustained operations. Each element influences the others, which is why piecemeal approaches consistently underperform integrated frameworks.

The foundation begins with power-first site selection. Rather than identifying attractive land and then investigating power availability, leading operators now screen locations based on grid capacity, interconnection timelines, and upgrade costs before evaluating any other factors. This inversion of traditional real estate development logic reflects the new reality that a site without accessible power holds no value regardless of its other attributes.
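One way to picture power-first screening is as a two-stage filter: power criteria act as hard gates, and only the surviving sites are ranked on other attributes. The sketch below is a minimal illustration. The `CandidateSite` fields, thresholds, and sample sites are all hypothetical and do not represent any operator's actual process.

```python
from dataclasses import dataclass


@dataclass
class CandidateSite:
    name: str
    available_grid_mw: float      # capacity the utility can serve
    interconnection_years: float  # estimated time to energization
    upgrade_cost_musd: float      # quoted grid upgrade cost, in $M
    land_score: float             # 0-1 score for all non-power attributes


def power_first_screen(sites, required_mw, max_years, max_upgrade_musd):
    """Drop sites that fail any power criterion, then rank the rest by land_score."""
    viable = [
        s for s in sites
        if s.available_grid_mw >= required_mw
        and s.interconnection_years <= max_years
        and s.upgrade_cost_musd <= max_upgrade_musd
    ]
    return sorted(viable, key=lambda s: s.land_score, reverse=True)


# Hypothetical candidates for illustration only
sites = [
    CandidateSite("Site A", 300, 6.0, 120, 0.9),  # best land, slow interconnection
    CandidateSite("Site B", 250, 2.5, 80, 0.6),
    CandidateSite("Site C", 90, 1.5, 20, 0.8),    # too little grid capacity
    CandidateSite("Site D", 400, 3.0, 150, 0.7),
]
shortlist = power_first_screen(sites, required_mw=200, max_years=3.0, max_upgrade_musd=200)
print([s.name for s in shortlist])
```

Site A has the highest land score in this example yet never reaches the ranking step, which is the inversion described above.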

Beyond initial availability, a complete strategy must address long-term power sourcing, renewable energy integration, resiliency planning, and regulatory compliance. Each component requires specialized expertise and carries its own risk profile. Organizations attempting to manage these elements independently often discover costly gaps only after significant capital deployment.

| Strategic Component | Key Considerations | Timeline Impact |
| --- | --- | --- |
| Site Selection | Grid capacity, upgrade costs, interconnection queue position | Determines project viability |
| Power Sourcing | Mix of grid, renewable, and behind-the-meter generation | Affects deployment speed |
| Grid Interconnection | Utility relationships, infrastructure requirements, permitting | Often the longest lead time |
| Energy Campus Planning | Land preparation, utility infrastructure, scalability | Enables phased deployment |
| Resiliency Planning | Redundancy architecture, backup systems, load management | Protects operational continuity |

How Are Grid Constraints Reshaping AI Data Center Design?

The traditional model of connecting data centers to existing grid infrastructure assumed that utilities could accommodate new large loads within reasonable timeframes. That assumption no longer holds for AI-scale operations.

Grid interconnection delays have extended to five years or more in many regions. According to analysis from McKinsey, U.S. data center power demand alone is expected to grow at 23% annually through 2030. Research indicates that speculative interconnection requests may exceed actual planned development by a factor of five to ten as operators compete for limited grid access. Utilities evaluating worst-case scenarios for their grids often quote infrastructure upgrade costs that fundamentally alter project economics.

These constraints are driving several adaptations in AI data center design and site strategy. Co-location arrangements, where data centers are built directly adjacent to existing generation sources, have emerged as one solution. This approach allows operators to utilize existing grid connection points while avoiding lengthy interconnection queues for new service.

Behind-the-meter generation represents another response. By developing on-site power generation capacity, operators can reduce their dependence on grid availability while still maintaining grid connection for backup or supplemental power. Solar arrays paired with battery storage systems increasingly feature in these configurations, providing both environmental benefits and deployment flexibility.

Geographic diversification has also accelerated. Rather than concentrating capacity in traditional data center markets like Northern Virginia, operators are expanding into secondary markets where power availability remains stronger and interconnection timelines shorter. This shift demands more sophisticated site selection capabilities but rewards operators with faster paths to operational capacity.

What Power Sourcing Approaches Work Best for AI Workloads?

AI workloads present unique power demands that influence optimal sourcing strategies. Training operations can run continuously for weeks or months, requiring sustained high-power delivery without interruption. Inference workloads cycle more dynamically but still demand consistent availability and rapid response to load changes.

These characteristics favor power sourcing approaches that emphasize reliability and predictability over pure cost optimization. While traditional data center operations might tolerate brief interruptions or demand response participation, AI training runs that lose power mid-cycle often must restart entirely, wasting significant computational investment.

The most effective approaches to AI energy strategy typically combine multiple sourcing methods into hybrid configurations. Grid power provides baseline capacity and serves as backup for on-site generation. Renewable sources like solar provide cost-effective power during generation windows while supporting sustainability commitments that hyperscalers increasingly require. Battery storage systems bridge gaps between generation and consumption while providing resilience against grid disruptions.
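The interplay between these sources can be sketched as a simple hourly dispatch: solar serves the load first, surplus solar charges the battery, the battery covers shortfalls up to its power rating, and the grid supplies whatever remains. This is a deliberately simplified model (one-hour steps, a lossless battery that starts empty), and every figure in the example is hypothetical.

```python
def dispatch_hybrid(load_mw, solar_mw, battery_mwh, battery_power_mw):
    """Hourly dispatch: solar first, then battery, then grid for the remainder.

    Returns one (solar_used, battery_discharge, grid_import) tuple per hour.
    Simplifications: 1-hour steps, lossless battery, battery starts empty.
    """
    stored = 0.0
    schedule = []
    for load, solar in zip(load_mw, solar_mw):
        solar_used = min(load, solar)
        surplus = solar - solar_used
        # Charge from surplus solar, limited by power rating and free capacity
        charge = min(surplus, battery_power_mw, battery_mwh - stored)
        stored += charge
        shortfall = load - solar_used
        # Discharge to cover the shortfall, limited by power rating and stored energy
        discharge = min(shortfall, battery_power_mw, stored)
        stored -= discharge
        grid = shortfall - discharge
        schedule.append((solar_used, discharge, grid))
    return schedule


# Hypothetical six-hour window: flat 100 MW load, midday solar peak
load = [100, 100, 100, 100, 100, 100]
solar = [0, 150, 200, 150, 0, 0]
schedule = dispatch_hybrid(load, solar, battery_mwh=120, battery_power_mw=50)
grid_energy_mwh = sum(grid for _, _, grid in schedule)
print(f"Grid energy over the window: {grid_energy_mwh:.0f} MWh")
```

A real design study would add round-trip losses, reserve margins, and tariff structure, but even this toy version shows how storage shifts midday solar into hours when the grid would otherwise carry the full load.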

Power purchase agreements have become essential tools for securing long-term supply at predictable costs. These arrangements lock in pricing while providing utilities or independent generators with the demand certainty needed to justify new generation investments. Leading hyperscalers have signed agreements totaling multiple gigawatts of renewable capacity, fundamentally reshaping how clean energy projects get financed and built.

Five Critical Elements of Energy Campus Development

The energy campus model has emerged as the dominant framework for AI-scale deployments. Rather than developing individual facilities in isolation, this approach plans entire complexes designed to deliver power, water, connectivity, and scalability as integrated systems.

- Strategic Land Acquisition and Entitlement: The foundation of any energy campus begins with securing land appropriate for data center development. This means not simply available acreage, but sites that can be entitled for the intended use, connected to necessary infrastructure, and scaled as demand grows. Zoning, environmental review, and community engagement processes can extend timelines significantly if not addressed proactively.

- Utility Infrastructure Development: Beyond the data center footprint itself, energy campuses require substantial utility infrastructure. This includes not only electrical interconnection but also water supply and wastewater management, telecommunications connectivity, and access roads capable of handling heavy equipment during construction and maintenance.

- Scalable Power Architecture: Effective energy campuses anticipate growth from initial deployment through full buildout. This requires power architecture designed for modular expansion, with backbone infrastructure sized for ultimate capacity even when initial phases utilize only a fraction of that potential. Retrofitting undersized infrastructure later proves far more expensive than building with scale in mind from the start.

- Renewable Integration Planning: Incorporating renewable energy into an energy campus requires dedicated land allocation for solar arrays or wind installations, interconnection infrastructure to bring that generation to computing loads, and storage systems to manage the intermittency inherent in renewable sources. Planning these elements from the outset rather than adding them later significantly reduces costs and complexity.

- Water and Environmental Systems: AI data centers require water for cooling systems, and responsible development demands sustainable water sourcing and treatment. Energy campus planning must address water availability, establish appropriate treatment and recycling systems, and ensure compliance with environmental regulations that govern discharge and consumption.
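The scalable power architecture element lends itself to a quick check: given a backbone sized for ultimate capacity, how much of it does each deployment phase actually use? The sketch below is one hypothetical way to express that. The function name and the 600 MW phasing plan are invented for illustration.

```python
def phase_utilization(ultimate_mw, phase_additions_mw):
    """Cumulative load and share of backbone capacity used after each phase."""
    results = []
    cumulative = 0.0
    for phase, added in enumerate(phase_additions_mw, start=1):
        cumulative += added
        if cumulative > ultimate_mw:
            # The backbone was undersized for this plan: the costly retrofit case
            raise ValueError(
                f"Phase {phase} exceeds backbone capacity of {ultimate_mw} MW"
            )
        results.append((phase, cumulative, cumulative / ultimate_mw))
    return results


# Hypothetical campus: 600 MW backbone, built out in three phases
plan = phase_utilization(ultimate_mw=600, phase_additions_mw=[100, 200, 300])
for phase, cumulative, share in plan:
    print(f"Phase {phase}: {cumulative:.0f} MW ({share:.0%} of backbone)")
```

In this example the first phase uses only about a sixth of the backbone, which is the trade-off described above: early overcapacity in exchange for avoiding a later retrofit.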

| Development Phase | Primary Focus Areas | Critical Success Factors |
| --- | --- | --- |
| Site Selection | Power access, land availability, regulatory environment | Grid capacity analysis, interconnection cost assessment |
| Planning and Entitlement | Zoning approval, environmental review, community relations | Early engagement, comprehensive impact assessment |
| Infrastructure Development | Utility connections, access roads, backbone systems | Utility partnerships, long-lead equipment procurement |
| Construction | Building systems, power systems, connectivity | Phased delivery, quality control, timeline management |
| Operations | Power management, maintenance, scaling | Automated monitoring, preventive maintenance programs |

Why Does Resiliency Planning Matter More for AI Operations?

Resiliency planning has always mattered for data centers, but AI operations elevate its importance substantially. The power requirements for AI data centers create concentrated loads that demand more robust backup systems and redundancy architecture than traditional computing environments.

Consider the difference between a general-purpose data center losing power briefly and an AI training cluster experiencing the same interruption. The former might lose a few transactions or experience temporary latency; the latter might invalidate weeks of computational work worth millions of dollars. This asymmetry in consequences justifies substantially higher investment in resiliency systems.
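To make the asymmetry concrete, a back-of-the-envelope estimate of the compute spend at risk is simply accelerators multiplied by elapsed hours and hourly cost. The cluster size, run length, and cost per accelerator-hour below are hypothetical placeholders, not figures from any real deployment, and the estimate assumes the run must restart from the beginning.

```python
def compute_at_risk_usd(accelerators, hours_elapsed, cost_per_accelerator_hour):
    """Compute spend lost if a run must restart from scratch (no saved progress)."""
    return accelerators * hours_elapsed * cost_per_accelerator_hour


# Hypothetical: 10,000 accelerators, two weeks into a run, $2 per accelerator-hour
two_weeks_hours = 14 * 24
loss = compute_at_risk_usd(10_000, two_weeks_hours, 2.0)
print(f"Compute at risk: ${loss:,.0f}")
```

With these placeholder inputs the exposure is several million dollars, which is the order of magnitude that justifies heavier resiliency spending.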

Effective resiliency planning for AI operations addresses multiple failure modes. Grid outages require backup generation capable of carrying full AI loads, not just critical systems. Equipment failures demand redundancy at the component level and rapid replacement capabilities. Network disruptions need diverse connectivity paths and failover mechanisms that preserve data integrity during transitions.
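The difference between backing up critical systems and backing up the full AI load shows up directly in generator counts. The sketch below assumes an N+1 sizing convention (enough units to carry the load plus one spare), which is a common practice but not something the requirements above prescribe. The load and unit ratings are hypothetical.

```python
import math


def generators_required(full_load_mw, unit_rating_mw, spares=1):
    """Units needed to carry the load, plus spare units (N + spares)."""
    if full_load_mw <= 0 or unit_rating_mw <= 0:
        raise ValueError("load and unit rating must be positive")
    base_units = math.ceil(full_load_mw / unit_rating_mw)
    return base_units + spares


# Hypothetical 3 MW generator units
critical_only = generators_required(full_load_mw=20, unit_rating_mw=3)
full_ai_load = generators_required(full_load_mw=100, unit_rating_mw=3)
print(f"Critical systems only: {critical_only} units")
print(f"Full AI load: {full_ai_load} units")
```

Sizing for the full load rather than critical systems alone multiplies the backup fleet several times over in this example, which is why it needs to be planned into the campus footprint from the start.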

The geographic concentration of data centers in certain regions introduces additional resiliency concerns. When a significant portion of national computing capacity resides in one metropolitan area, regional events can have outsized impacts. Diversifying deployment across multiple energy campuses in different geographic regions provides portfolio-level resiliency that single-site redundancy cannot match.

How Should Operators Approach Long-Term Planning?

Building an AI energy strategy for the long term requires looking beyond immediate deployment needs to anticipate how power requirements, technology, and markets will evolve over facility lifetimes measured in decades.

Energy solutions for AI data centers must account for continued growth in computational intensity. The chips powering AI workloads today consume significantly more power per unit than their predecessors, and this trajectory shows no sign of reversing. Facilities designed for current power densities may prove inadequate within years rather than decades.

Regulatory evolution represents another long-term consideration. Environmental requirements, grid participation mandates, and sustainability reporting obligations continue to expand. Strategies that optimize solely for today’s regulatory environment risk costly retrofits or compliance gaps as requirements evolve.

Finally, market dynamics around power sourcing continue to shift. The competition for renewable energy capacity, the emergence of new generation technologies, and the restructuring of utility markets all influence what power sourcing approaches will prove most advantageous over time. Operators who build flexibility into their strategies can adapt to these changes more readily than those locked into rigid configurations.

FAQ

What makes AI energy strategy different from traditional data center power planning?

AI workloads demand higher power densities, sustained uninterrupted operation for training runs, and greater resilience against disruptions. Traditional data center planning often assumed more distributed loads and greater tolerance for brief interruptions. A sound AI energy strategy must address these unique characteristics while still meeting conventional requirements for reliability and cost management.

How long does grid interconnection typically take for AI-scale data centers?

Interconnection timelines vary significantly by region and grid conditions, but five years or more has become common for large projects in constrained markets. Some operators face even longer delays when significant grid infrastructure upgrades are required. This reality is driving increased interest in behind-the-meter generation and co-location arrangements that can bypass conventional interconnection queues.

What role do renewable energy sources play in powering AI infrastructure?

Renewable energy enables faster deployment by bypassing some grid constraints, supports sustainability commitments that hyperscalers increasingly require, and provides long-term cost predictability through power purchase agreements. Leading operators integrate renewables as a core component of their overall strategy rather than treating them as an optional add-on.

How do energy campus developments differ from traditional data center sites?

Energy campuses plan power, water, connectivity, and scalability as integrated systems from the outset, rather than addressing each element independently. This approach enables more efficient infrastructure utilization, smoother scaling as demand grows, and better coordination between facility operations and energy management.

Chart Your Course to AI Infrastructure Success

The organizations capturing AI computing capacity are the ones that have recognized power as the foundational constraint and built their deployment strategies accordingly. Site selection, grid interconnection, energy campus development, and resiliency planning all contribute to outcomes that either enable rapid scaling or create delays measured in years.

For hyperscalers and enterprises seeking to deploy AI infrastructure without the timelines and costs that plague conventional approaches, partnering with experienced infrastructure developers offers a path forward. Hanwha Data Centers brings deep expertise in powered land development, renewable energy integration, and rapid deployment to organizations ready to move at the speed AI demands. Connect with Hanwha Data Centers to explore how the right infrastructure partner can accelerate your AI ambitions.