Key Takeaways

Power constraints have replaced compute as the primary bottleneck for AI deployment, making an enterprise energy campus strategy essential to any serious infrastructure investment.

- McKinsey projects global data center capacity demand could reach 219 GW by 2030, with roughly 70% driven by AI workloads.

- Grid interconnection delays are forcing CIOs to rethink traditional approaches to energy planning and site selection.

- Energy campuses that co-locate power generation, storage, and utility infrastructure on a single site offer accelerated deployment and greater cost predictability.

- CIO energy planning now requires cross-functional coordination between IT, facilities, procurement, finance, and sustainability teams.

If your AI roadmap doesn’t include a power strategy, it’s incomplete.

Every enterprise CIO has a cloud strategy. Most have an AI strategy. Very few have an energy strategy. That gap is about to cost them. McKinsey projects that companies across the compute value chain will need to invest $5.2 trillion into data centers by 2030 to meet global AI demand alone. But capital alone won’t solve the problem. The real constraint is power: where it comes from, how fast you can get it, and whether your enterprise energy campus strategy can deliver megawatts on a timeline that matches your AI roadmap.

For CIOs evaluating large-scale infrastructure investments in the AI era, the conversation has shifted from “how many GPUs” to “how many megawatts.” The executives who understand the energy infrastructure landscape are making faster, more resilient decisions about where and how to deploy AI at scale.
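Translating a GPU count into megawatts is simple arithmetic once a few inputs are fixed. The sketch below is a rough estimate, not a sizing method. The function name `facility_megawatts`, the per-GPU wattage, the server overhead multiplier, and the use of power usage effectiveness (PUE, total facility power divided by IT power) are all illustrative assumptions. Replace them with real fleet and facility data.

```python
def facility_megawatts(gpu_count: int, watts_per_gpu: float,
                       server_overhead: float, pue: float) -> float:
    """Rough facility power estimate in megawatts (hypothetical model).

    gpu_count:       number of accelerators planned
    watts_per_gpu:   assumed average draw per GPU, in watts
    server_overhead: multiplier for CPUs, memory, and networking per server
                     (1.5 means non-GPU IT equipment adds 50% on top)
    pue:             power usage effectiveness, total facility power / IT power
    """
    it_watts = gpu_count * watts_per_gpu * server_overhead
    # Scale IT load up for cooling, power distribution, and other facility overhead.
    facility_watts = it_watts * pue
    return facility_watts / 1_000_000


if __name__ == "__main__":
    # Hypothetical inputs; substitute your own hardware and facility figures.
    mw = facility_megawatts(gpu_count=10_000, watts_per_gpu=700,
                            server_overhead=1.5, pue=1.3)
    print(f"{mw:.2f} MW")
```

With these hypothetical inputs (10,000 GPUs at 700 W, 1.5x server overhead, PUE of 1.3), the estimate comes to 13.65 MW of facility power. Because the model is linear, doubling the GPU count doubles the megawatts.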

Why Is an Enterprise Energy Campus Strategy Now a C-Suite Priority?

For decades, power was a background line item. CIOs chose cloud providers, colocation facilities, or on-prem data centers based on compute capacity, latency, and price. Energy was someone else’s problem. AI changed that equation entirely.

According to Bain & Company’s 2025 forecast, global data center capacity demand is expected to reach 163 GW by 2030, roughly double current levels. In the U.S. alone, the pace of data center investment could push data center electricity demand to 409 terawatt-hours (TWh) by that same year. The sheer scale of that growth means any CIO energy planning conversation that starts and ends with the cloud provider is fundamentally incomplete.
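Energy figures (TWh per year) and capacity figures (GW) use different units, which makes forecasts like these hard to compare directly. A rough conversion divides annual energy by the 8,760 hours in a year to get an average continuous load:

$$
P_{\text{avg}} = \frac{E_{\text{annual}}}{8760\ \text{h}} = \frac{409\ \text{TWh}}{8760\ \text{h}} \approx 0.0467\ \text{TW} \approx 46.7\ \text{GW}
$$

So 409 TWh per year corresponds to an average draw of roughly 47 GW. This is an average, not a peak. Capacity planning and interconnection requests have to cover peak demand, which is higher.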

What makes this urgent is the compounding effect of grid constraints. As CIO.com recently reported, power, cooling, and physical infrastructure have not historically been discussed within CIO organizations as potential constraints, but they should be. Interconnection queues in key U.S. markets now stretch for years. Some utilities bill minimum commitments for reserved power whether or not it is used. And building new transmission lines can take a decade or more. The bottleneck to deploying AI at scale isn’t silicon anymore. It’s megawatts.

What Questions Should CIOs Be Asking About Power?

Every CIO evaluating AI infrastructure should be having direct conversations with cloud and colocation partners about regional power constraints, sourcing options, and expansion timelines. These are questions that used to sit exclusively with facilities teams. Now they belong in the IT strategy discussion.

Key areas of inquiry include whether current hosting environments were designed for high-density GPU workloads, what happens when power demand exceeds local grid capacity, and what the provider’s plan is for securing additional energy supply in constrained markets. CIOs who don’t ask these questions risk concentrating workloads in environments that may not scale when they need to most.

What Is an Energy Campus and Why Does It Matter for Data Center Investment?

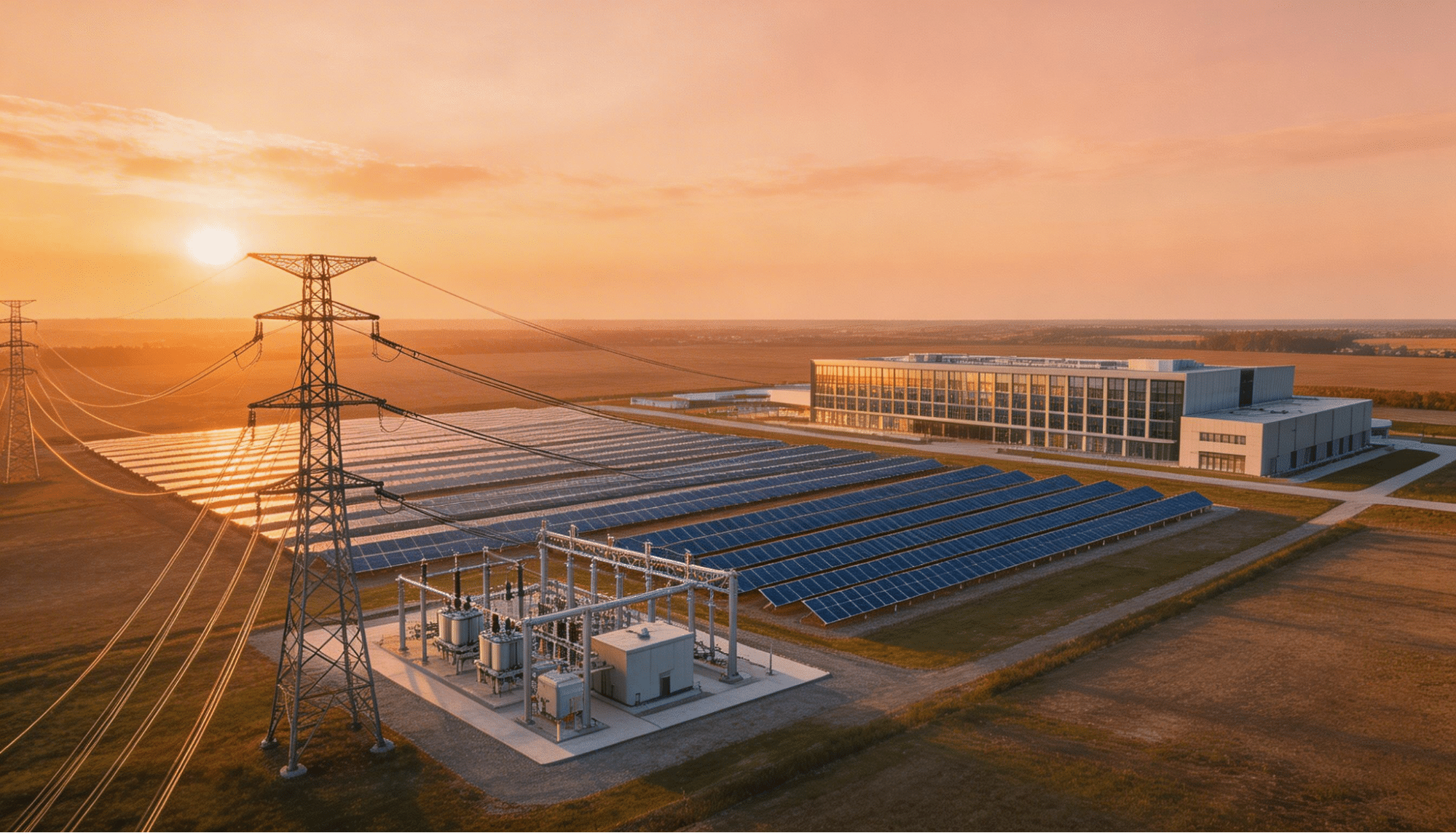

An energy campus is an integrated development site where power generation, energy storage, utility connections, and data center infrastructure are co-located and planned together from day one. Instead of plugging into an oversubscribed grid, a well-executed enterprise energy campus strategy ensures power certainty is built directly into the site.

Think of it this way: traditional data center investment starts with finding a building and then figuring out power. The energy campus model flips that sequence. Power comes first. Land is selected and entitled based on energy availability. Grid interconnections are planned and secured early. On-site generation through solar, battery storage, or other sources provides redundancy and faster time-to-power. Then the data center goes on top of that foundation.

This approach matters because it directly addresses what enterprise leaders care most about: speed and predictability. When power is engineered into the site from the start, the project no longer depends entirely on the multi-year grid queues that stall even the best-funded projects.

How Does the Energy Campus Model Compare to Traditional Approaches?

The differences between a traditional grid-dependent site and an integrated energy campus become clear when you compare the development timeline, risk profile, and long-term cost structure.

| Factor | Traditional Grid-Dependent Site | Integrated Energy Campus |
| --- | --- | --- |
| Power Availability | Subject to grid queue (years-long waits) | Engineered into site from inception |
| Deployment Speed | Dependent on utility upgrade timelines | Accelerated through on-site generation |
| Cost Predictability | Exposed to utility rate fluctuations | Greater stability through power purchase agreements (PPAs) and on-site sources |
| Scalability | Constrained by local grid headroom | Designed for phased expansion |
| Risk Profile | Single point of failure at substation | Multiple energy sources and redundancy layers |
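The risk-profile row can be made concrete with a simple availability model. This is a hypothetical sketch built on two strong assumptions, also stated in the code: any single source can carry the full critical load, and source failures are independent. Real grid and on-site failures can be correlated (a regional storm, for example), so treat the result as optimistic rather than as a design figure. The function name and the availability values are illustrative.

```python
from math import prod


def combined_availability(availabilities: list[float]) -> float:
    """Availability of a site that stays powered while ANY source is up.

    Simplifying assumptions (a hypothetical model, not an industry standard):
    each source alone can carry the full critical load, and source failures
    are statistically independent.
    """
    prob_all_down = prod(1.0 - a for a in availabilities)
    return 1.0 - prob_all_down


HOURS_PER_YEAR = 8760

if __name__ == "__main__":
    # Hypothetical availabilities: grid feed 99.9%, on-site source 99%.
    for label, sources in [("grid only", [0.999]),
                           ("grid + on-site", [0.999, 0.99])]:
        a = combined_availability(sources)
        outage_hours = (1.0 - a) * HOURS_PER_YEAR
        print(f"{label:>15}: availability {a:.5f}, "
              f"expected outage {outage_hours:.2f} h/yr")
```

With these hypothetical figures, a 99.9%-available grid connection alone implies about 8.76 hours of expected outage per year. Adding an independent 99%-available on-site source cuts the chance of losing both to 0.001%, or roughly five minutes per year.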

Both approaches have their place. Some projects are well-served by traditional grid interconnection, particularly in regions with robust available capacity. The right approach depends on the project’s specific requirements, timeline, and strategic goals. The energy campus model becomes especially compelling when grid constraints are severe and deployment speed is critical.

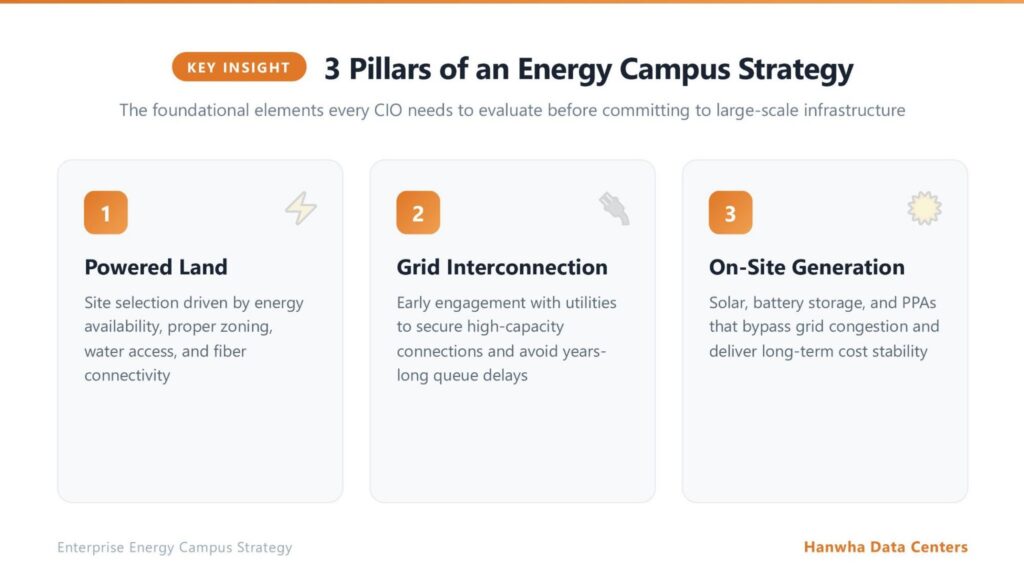

What Does a Strong Enterprise Energy Campus Strategy Include?

Building a viable energy strategy for a campus-scale deployment involves more than choosing a power source. It requires aligning land selection, utility planning, regulatory compliance, and long-term demand forecasting into a cohesive plan.

How Does Site Selection Drive Everything Else?

The foundation of any campus-level energy strategy starts with the land. A viable site requires more than acreage: it needs proper zoning, access to water and wastewater systems, fiber connectivity, and a clear path to power. Powered land development means assessing, entitling, and preparing a site so it can receive and distribute energy at the scale AI workloads demand.

McKinsey estimates that global data center capacity demand could rise 19 to 22% annually through 2030, reaching up to 219 GW. That growth requires enormous amounts of new prepared land with secured power. CIOs who aren’t thinking about the land layer of their infrastructure strategy are leaving a critical variable to chance.
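Those growth rates compound. As a worked illustration, assume a seven-year horizon (for example, 2023 to 2030; the base year is an assumption, not a figure stated here):

$$
D_{2030} = D_{\text{base}}\,(1+g)^{n}, \qquad (1.19)^{7} \approx 3.38, \qquad (1.22)^{7} \approx 4.02
$$

Under that assumption, 19 to 22% annual growth means demand roughly triples to quadruples. Reaching 219 GW at the top rate would imply a base of about 219 / 4.02 ≈ 54 GW. These are derived illustrations, not published McKinsey figures.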

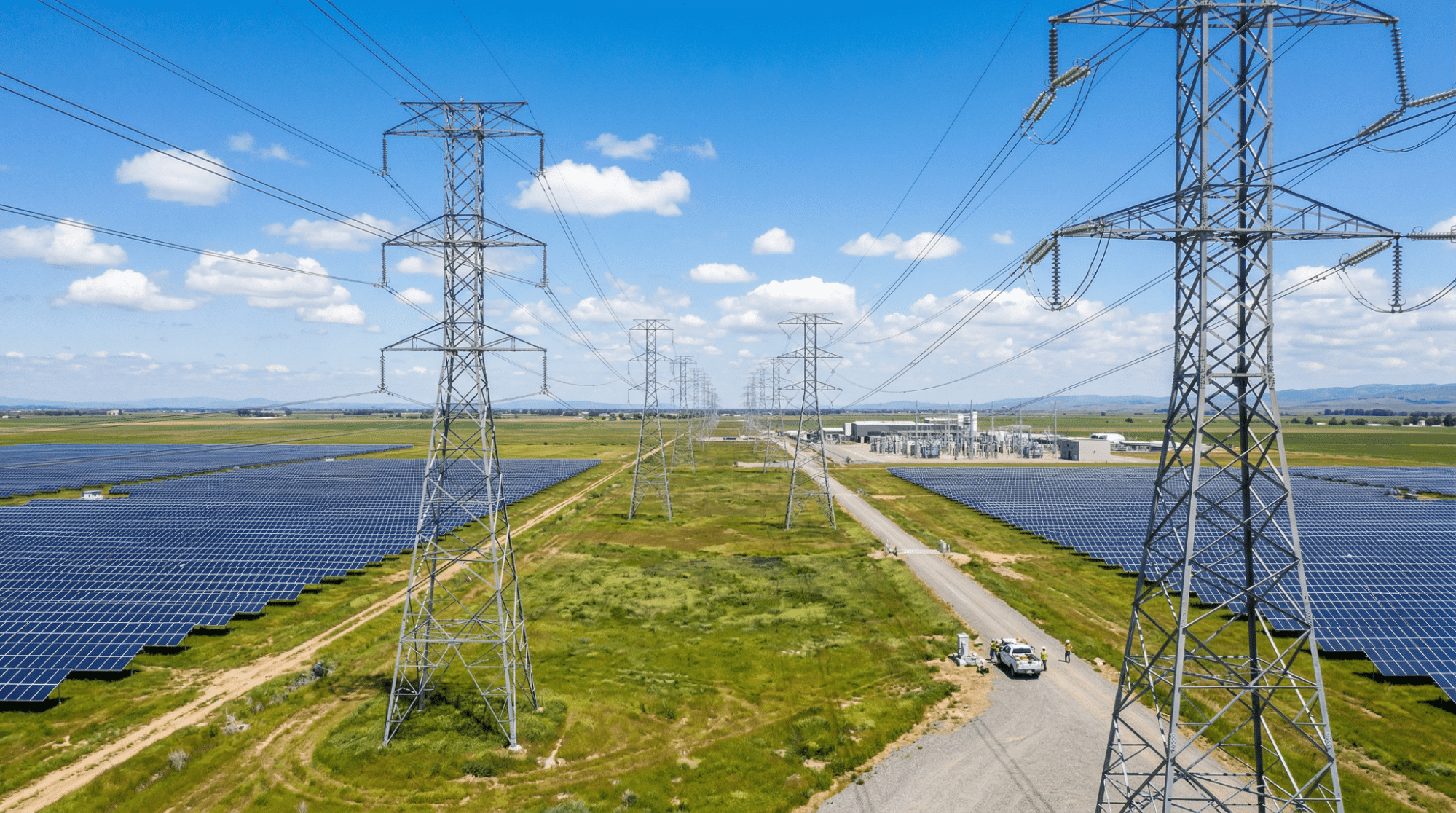

What Role Does Grid Interconnection Play?

Grid interconnection planning is often the most time-consuming piece of the puzzle. Securing a high-capacity connection requires detailed engineering studies, agreements with utility providers, and often significant infrastructure upgrades. Grid interconnection strategies that start early in the site development process can compress the project timeline dramatically.

For enterprises pursuing large-scale AI deployments, the grid connection is the critical path. Projects that wait until the data center design is complete to begin grid planning routinely face delays that derail business timelines and escalate costs.

Where Does Renewable Energy Fit In?

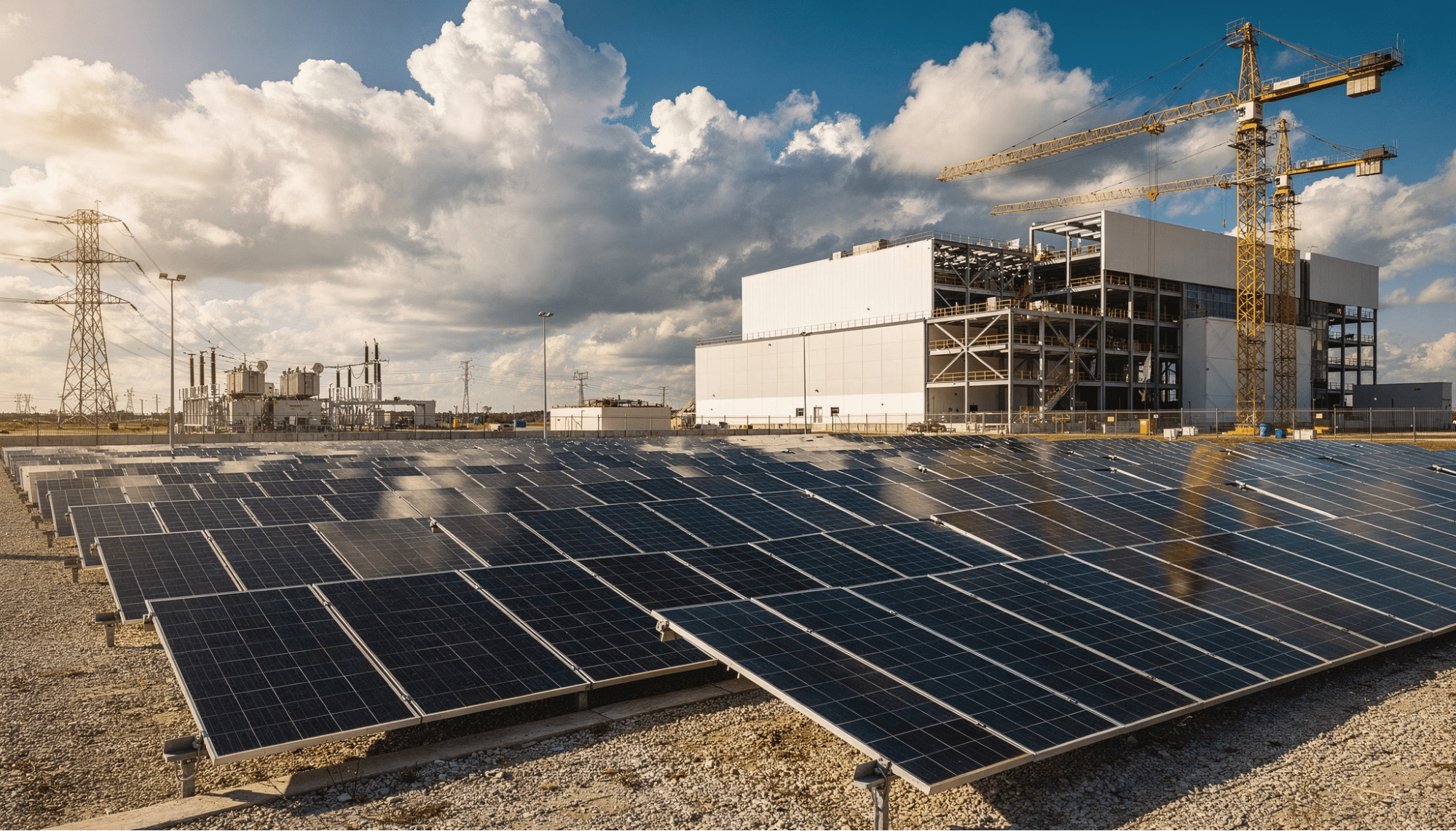

Renewable energy is increasingly a practical enabler of faster deployment rather than a purely environmental play. On-site solar, battery storage, and other on-site energy resources provide behind-the-meter power that reduces dependence on congested grid capacity. Paired with power purchase agreements, these solutions also deliver cost stability that traditional utility contracts often cannot.
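The cost-stability point can be illustrated by comparing how much annual spend swings across market price scenarios with and without a fixed-price PPA covering part of the load. The function `annual_cost_range`, the 100,000 MWh annual consumption, the $75/MWh PPA price, and the three market price scenarios are all hypothetical. Real PPAs often include escalators and other terms that this sketch ignores.

```python
def annual_cost_range(annual_mwh: float, ppa_share: float, ppa_price: float,
                      market_prices: list[float]) -> tuple[float, float]:
    """Return (lowest, highest) annual energy cost across price scenarios.

    annual_mwh:    energy consumed per year, in MWh
    ppa_share:     fraction of that energy bought at a fixed PPA price (0 to 1)
    ppa_price:     fixed PPA price, in $/MWh
    market_prices: hypothetical market or utility prices, in $/MWh
    """
    fixed_cost = annual_mwh * ppa_share * ppa_price
    # Only the uncontracted energy is exposed to market price movement.
    exposed_mwh = annual_mwh * (1.0 - ppa_share)
    costs = [fixed_cost + exposed_mwh * price for price in market_prices]
    return min(costs), max(costs)


if __name__ == "__main__":
    scenarios = [60.0, 90.0, 140.0]  # hypothetical $/MWh outcomes
    for share in (0.0, 0.7):
        low, high = annual_cost_range(100_000, share, 75.0, scenarios)
        print(f"PPA share {share:.0%}: ${low:,.0f} to ${high:,.0f} "
              f"(spread ${high - low:,.0f})")
```

With these inputs, the spread between the cheapest and most expensive scenario falls from $8,000,000 with no PPA to $2,400,000 when 70% of consumption is contracted, because only the uncontracted 30% moves with market prices.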

A BDO industry analysis found that roughly 62% of data center operators are already exploring behind-the-meter renewable solutions, including on-site generation and battery storage systems. Behind-the-meter power is fast becoming a mainstream option for operators who need both speed and resilience.

What Are the Top Priorities for CIO Energy Planning in the AI Era?

Effective energy planning at the enterprise level for large-scale infrastructure isn’t a solo act. It requires pulling in stakeholders from facilities, procurement, finance, sustainability, and executive leadership. Here are the priorities that matter most.

Five Essential Elements of Enterprise-Grade Energy Planning

- Power demand forecasting aligned to AI workload growth. Model three-, five-, and ten-year demand scenarios based on your AI adoption roadmap, accounting for both training and inference workloads.

- Multi-source energy diversification. Relying on a single grid connection creates unacceptable concentration risk. A strong energy strategy includes grid power, on-site generation, battery storage, and contractual mechanisms like PPAs.

- Early-stage grid interconnection engagement. Start utility and grid discussions at the same time as site selection. This single shift in sequencing can eliminate years of delay.

- Cross-functional governance. CIOs should involve facilities, procurement, finance, and sustainability teams in energy decisions that directly impact AI success. Power is now in the critical path of IT strategy.

- Power-first location strategy. Evaluate potential sites through an energy-availability lens first. A site with land and fiber but no clear path to power is a slide-deck project, not a real one.
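The first element, modeling three-, five-, and ten-year scenarios that separate training from inference, can be prototyped in a few lines. This is a minimal sketch assuming simple compound growth. The starting loads (8 MW training, 4 MW inference), the scenario names, and the growth rates are hypothetical placeholders, not benchmarks.

```python
def forecast_mw(base_training_mw: float, base_inference_mw: float,
                training_growth: float, inference_growth: float,
                years: int) -> float:
    """Project total AI power demand in MW after `years` of compound growth.

    Training and inference are grown separately because their growth
    rates can differ (all rates here are hypothetical inputs).
    """
    training = base_training_mw * (1 + training_growth) ** years
    inference = base_inference_mw * (1 + inference_growth) ** years
    return training + inference


# Hypothetical annual growth rates: (training, inference).
SCENARIOS = {
    "conservative": (0.10, 0.20),
    "aggressive": (0.25, 0.45),
}
HORIZONS = (3, 5, 10)  # planning horizons, in years

if __name__ == "__main__":
    base_training, base_inference = 8.0, 4.0  # hypothetical starting MW
    for name, (g_train, g_inf) in SCENARIOS.items():
        cells = [
            f"{y}y: {forecast_mw(base_training, base_inference, g_train, g_inf, y):.1f} MW"
            for y in HORIZONS
        ]
        print(f"{name:>12}  " + "  ".join(cells))
```

Separating the two workload types matters because they can grow at different rates. In the hypothetical aggressive scenario, the inference load starts at half the training load but overtakes it by year five.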

How Energy Planning Maps to Business Outcomes

When energy planning is embedded into IT strategy, the downstream business impacts are tangible.

| Energy Planning Action | IT/Business Outcome | Risk Mitigated |
| --- | --- | --- |
| Power demand forecasting | Right-sized infrastructure spend | Overprovisioning or capacity shortfalls |
| Multi-source diversification | Higher uptime and resilience | Single-point-of-failure outages |
| Early grid engagement | Accelerated deployment timelines | Multi-year interconnection delays |
| Cross-functional governance | Aligned capital allocation | Siloed, reactive decisions |
| Power-first site selection | Faster time to operational AI | Stranded assets on unpowered land |

How Are Leading Enterprises Approaching Large-Scale Infrastructure Investment?

The market is signaling clearly where data center investment is headed. According to McKinsey analysis, hyperscalers alone expect to spend $300 billion in capital expenditures during 2025. That spending is overwhelmingly concentrated on sites with secured, scalable power.

What’s Changing About Where Data Centers Get Built?

Historically, data centers clustered in Northern Virginia, Dallas, Phoenix, and a handful of other metros. That’s still happening, but the growth edges are moving. Developers are looking at secondary and tertiary markets where power is more accessible. The deciding factor in these new locations isn’t proximity to a major metro. It’s proximity to power.

This shift has real implications for enterprise CIOs. The best site for your next AI deployment might not be closest to your headquarters or existing cloud region. It might be where a developer has already secured power, prepared the land, and engineered the grid connection.

Why Does Speed to Power Matter Most?

In a market where grid interconnection queues stretch for years, the ability to deliver powered, AI-ready energy infrastructure faster than traditional timelines is the single greatest competitive advantage a site can offer. Every month of delay translates to lost revenue from AI products that can’t launch and workloads that can’t scale.

This is why the energy campus model has gained traction so rapidly. By combining site preparation, power development, and grid planning into a parallel workflow rather than a sequential one, experienced developers can dramatically compress the timeline from land acquisition to operational capacity.
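The difference between sequential and parallel workflows comes down to critical-path arithmetic. A sequential plan adds every phase end to end, while a parallel plan finishes when its longest dependency chain does. The phase names and month durations below are hypothetical, chosen only to illustrate the mechanics, and the model ignores resource limits and partial overlaps.

```python
def project_duration(durations: dict[str, int],
                     deps: dict[str, list[str]]) -> int:
    """Return the earliest finish (in months) of the whole project.

    Each task starts when all of its prerequisites have finished, so the
    project length equals the longest dependency chain (the critical path).
    Assumes the dependency graph has no cycles.
    """
    finish: dict[str, int] = {}

    def earliest_finish(task: str) -> int:
        # Memoized: compute each task's finish time only once.
        if task not in finish:
            start = max((earliest_finish(p) for p in deps.get(task, [])),
                        default=0)
            finish[task] = start + durations[task]
        return finish[task]

    return max(earliest_finish(task) for task in durations)


# Hypothetical phase durations in months; real values vary widely by market.
DURATIONS = {
    "land_acquisition": 6,
    "site_preparation": 12,
    "power_development": 24,
    "grid_interconnection": 36,
    "data_center_build": 18,
}

# Sequential: each phase waits for the one before it.
SEQUENTIAL = {
    "site_preparation": ["land_acquisition"],
    "power_development": ["site_preparation"],
    "grid_interconnection": ["power_development"],
    "data_center_build": ["grid_interconnection"],
}

# Parallel: site, power, and grid work all start once the land is secured.
PARALLEL = {
    "site_preparation": ["land_acquisition"],
    "power_development": ["land_acquisition"],
    "grid_interconnection": ["land_acquisition"],
    "data_center_build": ["site_preparation", "power_development",
                          "grid_interconnection"],
}

if __name__ == "__main__":
    print("sequential:", project_duration(DURATIONS, SEQUENTIAL), "months")
    print("parallel:  ", project_duration(DURATIONS, PARALLEL), "months")
```

With these placeholder durations, the sequential plan takes 96 months and the parallel plan 60. In the parallel plan, the chain through grid interconnection still sets the finish date, which is why starting it early matters most.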

Frequently Asked Questions

What is an energy campus?

An energy campus is a development site where power generation, energy storage, grid interconnection, and data center infrastructure are integrated from the start. Rather than treating energy as a secondary consideration, this model positions power availability as the primary driver of site selection, design, and deployment timelines.

Why are CIOs getting involved in energy planning?

AI workloads have fundamentally changed the economics of enterprise computing. Power and cooling costs are now material to the total cost of AI initiatives, and grid constraints directly impact how fast organizations can scale. Energy availability has become a limiting factor in executing AI strategy, making it an IT leadership concern.

How does an energy campus differ from a traditional data center site?

A traditional site typically depends on existing grid infrastructure, which can mean long waits for interconnection and limited scalability. An energy campus co-locates power generation, storage, and utility infrastructure alongside the data center on a single prepared site, enabling faster deployment, greater resilience, and more predictable long-term costs.

What role does renewable energy play in energy campus development?

Renewable sources like solar, paired with battery storage, serve as practical enablers of faster deployment. By generating and storing power on-site, they provide behind-the-meter energy that reduces reliance on congested grid queues and oversubscribed utility infrastructure. They also offer long-term cost stability through mechanisms like power purchase agreements.

Ready to Build Your Enterprise Energy Campus Strategy?

The CIOs who will lead in the AI era are the ones who recognized early that power is the foundation everything else depends on. A comprehensive enterprise energy campus strategy that addresses site selection, grid interconnection, on-site generation, and long-term demand planning is the prerequisite for deploying AI at scale.

Hanwha Data Centers develops powered land and integrated energy campuses at the gigawatt scale, helping enterprises and hyperscalers move from planning to operational AI capacity faster than traditional approaches allow. To explore how an energy-first approach can accelerate your infrastructure roadmap, connect with the Hanwha Data Centers team.