Key Takeaways

AI compute demand is forcing a fundamental rethinking of how data center energy infrastructure gets built, sourced, and delivered.

- Grid interconnection bottlenecks are pushing operators toward on-site generation, energy storage, and hybrid power models.

- Renewable energy paired with natural gas and battery storage is emerging as the fastest path to powering AI workloads at scale.

- Powered land development and site-level energy planning are becoming the critical first step in any new data center project.

- Organizations that control their energy supply chain will have a decisive speed advantage over those waiting in utility queues.

If your AI growth strategy does not start with an energy strategy, it is already behind.

The race to deploy AI at scale has exposed a reality that most enterprise leaders did not anticipate: the biggest constraint is not compute, talent, or capital. It is power. AI energy infrastructure has become the central bottleneck shaping where, when, and how fast organizations can bring new data center capacity online. According to the International Energy Agency's Energy and AI report, global data center electricity demand is projected to more than double by 2030, to around 945 TWh, with AI as the largest driver of that growth. This is a structural shift, and it requires new approaches at every stage of energy planning, from site selection to grid interconnection to on-site power generation.

This blog explores the emerging power models that are redefining data center energy strategy: on-site generation, battery storage, load balancing, and the integrated energy campus approach that is rapidly gaining traction across the industry.

What Is Driving the AI Energy Infrastructure Crisis?

The demand surge is staggering. U.S. data center grid power demand is expected to rise by 22% in 2025 alone and nearly triple by 2030, according to S&P Global's 2025 forecast. But raw demand is only part of the issue. The grid was designed for predictable, incremental load growth. AI data centers require massive, concentrated power draws that arrive in clusters and on compressed timelines that existing utility infrastructure simply cannot match.

Why Are AI Workloads So Power-Hungry?

Power-hungry AI models require specialized hardware, particularly GPUs and custom accelerators, that consume far more electricity per rack than traditional servers. Industry estimates suggest a single large AI training cluster can draw tens of megawatts of sustained power. Inference workloads are growing even faster as enterprises deploy AI applications across customer-facing products. The IEA projects that electricity consumption in accelerated servers will grow by 30% annually through the end of the decade, making AI compute power the dominant driver of new data center energy demand.
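Compound growth rates are easy to underestimate. The minimal Python sketch below shows what a 30% annual rate adds up to. The five- and six-year horizons are assumptions, because the IEA figure is stated only as running "through the end of the decade". The tripling case assumes a 2024 baseline for the S&P Global forecast.

```python
def compound_growth(annual_rate: float, years: int) -> float:
    """Multiplier reached after compounding annual_rate for the given years."""
    return (1.0 + annual_rate) ** years


def implied_annual_rate(multiplier: float, years: int) -> float:
    """Constant annual growth rate that produces multiplier over the given years."""
    return multiplier ** (1.0 / years) - 1.0


# Horizons are assumptions: the IEA figure runs "through the end of the decade".
for years in (5, 6):
    print(f"30%/yr for {years} years -> {compound_growth(0.30, years):.2f}x")

# "Nearly triple by 2030", assuming a 2024 baseline (six years of growth).
print(f"3x over 6 years -> {implied_annual_rate(3.0, 6):.1%} per year")
```

Under these assumptions, 30% a year compounds to roughly 3.7x in five years and 4.8x in six. A tripling over six years implies about 20% growth per year.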

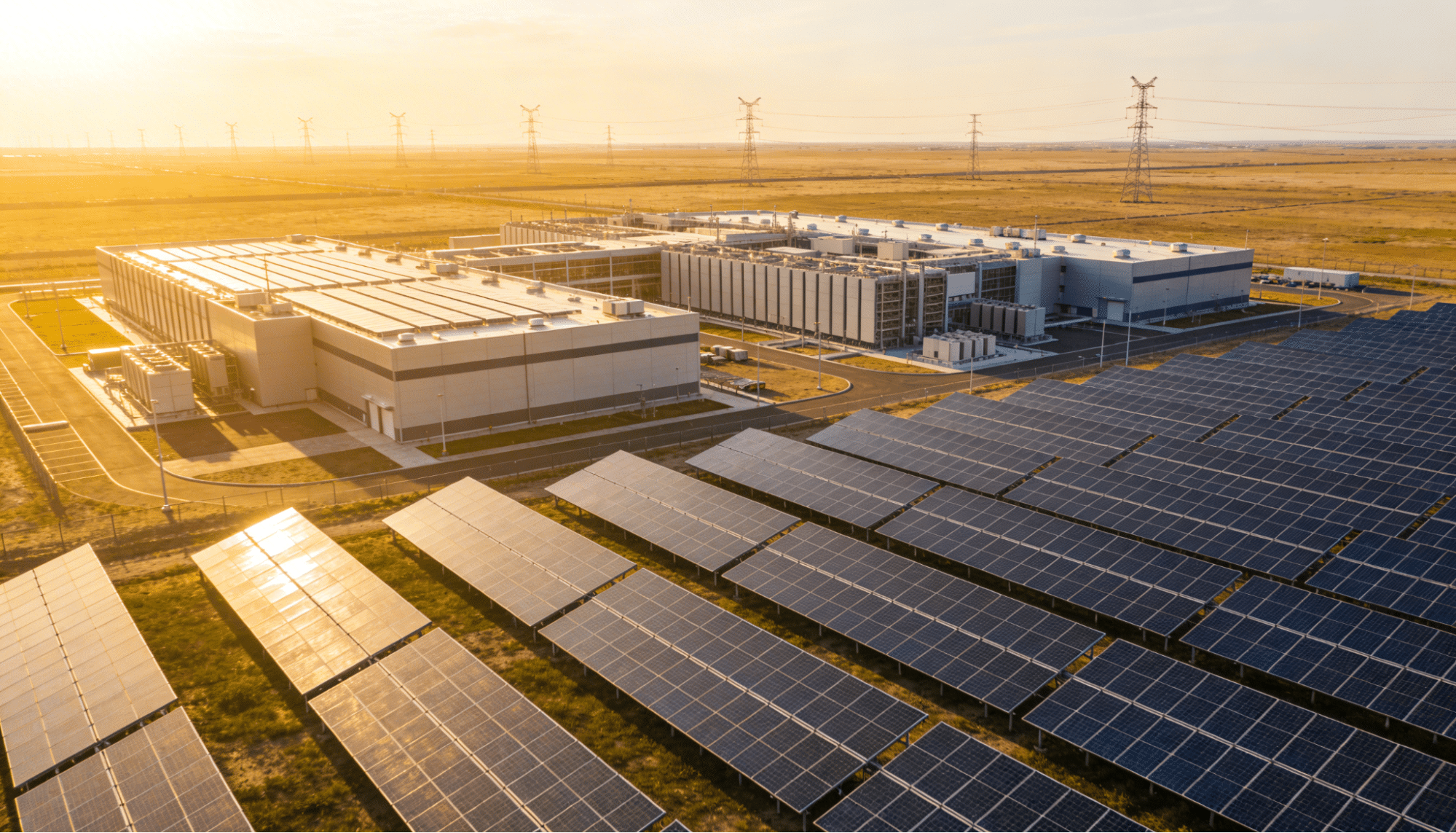

Where Is the Grid Falling Short?

Interconnection queues have become the primary bottleneck. In many U.S. markets, the wait to connect a new large load to the grid stretches beyond five years. Utilities must upgrade transmission lines, substations, and distribution infrastructure before they can deliver the multi-hundred-megawatt loads that AI campuses require. Even when a site has available land and fiber, it cannot come online without reliable power. This mismatch between demand timelines (months) and grid upgrade timelines (years) is the core problem facing every hyperscaler and colocation operator today.

AI Energy Infrastructure: Key Demand Drivers

| Factor | Impact | Timeline |
| --- | --- | --- |
| GPU/accelerator adoption | 30% annual growth in accelerated-server electricity use | Through 2030 |
| Hyperscaler CapEx surge | $200B+ annually in data center buildout | 2025-2027 |
| Grid interconnection delays | 5+ year queues in major markets | Ongoing |
| State regulatory shifts | New tariffs, minimum usage requirements | 2025-2026 |
| Enterprise AI scaling | Shift from pilots to production workloads | Accelerating |

Sources: IEA Energy and AI Report; S&P Global

How Is On-Site Power Generation Changing AI Energy Infrastructure?

On-site power generation has shifted from a backup contingency to a core strategy. Industry analysts estimate that roughly 30% of planned U.S. data center capacity is now expected to rely on behind-the-meter resources. The logic is straightforward: if the grid cannot deliver power fast enough, operators will generate it themselves.

What On-Site Generation Options Are Gaining Traction?

Natural gas turbines are currently the most widely deployed solution for rapid on-site power delivery. These units can be installed and running far sooner than utility grid upgrades can be completed. In parallel, operators are adding renewable sources such as co-located solar arrays, along with fuel cell deployments that provide lower-emission baseload power. The trend is clear: data centers are evolving from passive energy consumers into active energy producers and planners. This transition is creating an entirely new category of AI energy infrastructure, in which the power source is integrated directly into the campus design from day one.

Why Does Speed Matter More Than Cost?

In a market where AI leadership depends on getting compute capacity online fast, time-to-power has become the decisive competitive metric. Every month a data center waits for a grid connection is a month of lost revenue, missed training runs, and delayed product launches. For many operators, a higher cost per megawatt-hour from on-site generation is easier to absorb than years of idle capital. On-site generation compresses the timeline dramatically. Combined with strategic site selection and powered land development, it lets operators reach full operation on schedules that grid-dependent approaches cannot match.
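The cost of waiting can be framed as simple arithmetic: months saved × capacity × revenue per megawatt-month. The sketch below uses this framing. Every input is a hypothetical placeholder, not market data. The 60-month grid wait loosely reflects the "beyond five years" queues described earlier. The 24-month on-site timeline and the revenue rate are pure assumptions.

```python
def revenue_at_stake(months_saved: int, capacity_mw: float, revenue_per_mw_month: float) -> float:
    """Revenue the capacity could earn during months otherwise spent waiting for power."""
    return months_saved * capacity_mw * revenue_per_mw_month


# Hypothetical inputs only, not market data.
grid_wait_months = 60   # hypothetical; the source cites queues beyond five years
on_site_months = 24     # assumed time to stand up on-site generation
months_saved = max(0, grid_wait_months - on_site_months)

at_stake = revenue_at_stake(months_saved, capacity_mw=100, revenue_per_mw_month=150_000)
print(f"{months_saved} months earlier, ${at_stake / 1e6:,.0f}M of revenue at stake")
```

With these placeholder numbers, a 100 MW facility powered 36 months sooner has $540M of revenue at stake. Substituting real capacity, pricing, and schedule estimates is what turns this into a site-specific comparison.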

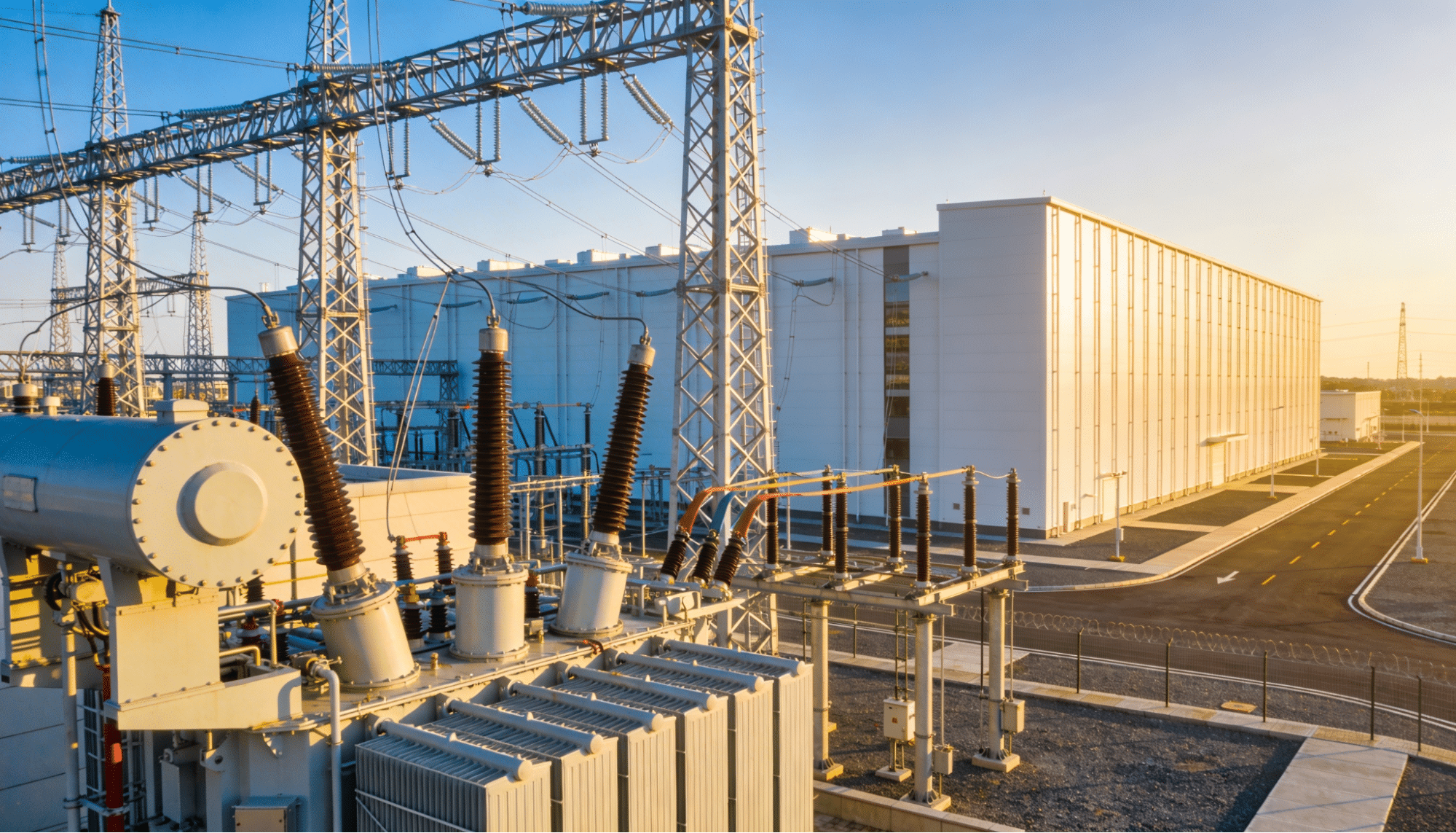

What Role Does Energy Storage Play in Data Center Power?

Battery energy storage systems (BESS) have moved from the periphery to the center of data center energy planning. Storage solves several problems simultaneously: it smooths peak demand to reduce grid strain, provides backup during generation transitions, enables deeper integration of intermittent renewable sources like solar, and supports load balancing across multiple power inputs.

How Are Batteries Becoming Core Infrastructure?

The shift is operational, not theoretical. Grid-scale battery deployments at data center campuses are growing rapidly. Storage allows operators to absorb solar generation during peak production hours and dispatch it during evening demand spikes. It also creates a buffer that lets facilities ride through momentary grid instability without interrupting AI workloads. For operators managing energy for AI at gigawatt scale, storage is the technology that makes hybrid power architectures viable and reliable.
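The time-shifting role of storage can be illustrated with a minimal greedy dispatch loop. In this sketch, solar serves the load first, surplus charges the battery, and the battery discharges into deficits. It assumes hourly steps, so MW over one hour equals MWh. It also assumes a battery that starts empty and a single efficiency factor applied on charging. The solar and load profiles are hypothetical. Real battery controls also handle grid charging, degradation, and reserve margins.

```python
def dispatch(solar_mw, load_mw, capacity_mwh, power_mw, efficiency=0.9):
    """Greedy hourly dispatch of a battery alongside on-site solar.

    Solar serves the load first; any surplus charges the battery, and the
    battery discharges to cover deficits. Returns the energy (MWh) that
    must come from the grid or another firm source in each hour.
    """
    soc = 0.0  # battery state of charge, MWh (starts empty)
    imports = []
    for solar, load in zip(solar_mw, load_mw):
        net = solar - load  # > 0 means surplus, < 0 means deficit
        if net > 0:
            # Charge from the surplus, limited by the power rating and by the
            # remaining headroom; all losses are counted on the way in.
            charge = min(net, power_mw, (capacity_mwh - soc) / efficiency)
            soc += charge * efficiency
            imports.append(0.0)  # surplus beyond the battery is curtailed
        else:
            deficit = -net
            # Discharge to cover the deficit, limited by rating and stored energy.
            discharge = min(deficit, power_mw, soc)
            soc -= discharge
            imports.append(float(deficit - discharge))
    return imports


solar = [0, 50, 80, 80, 30, 0]  # hypothetical hourly solar output, MW
load = [40] * 6                 # hypothetical flat campus load, MW

with_battery = dispatch(solar, load, capacity_mwh=60, power_mw=40)
without_battery = dispatch(solar, load, capacity_mwh=0, power_mw=0)
print(sum(without_battery), sum(with_battery))
```

In this toy profile, the battery cuts the energy needed from other sources from 90 MWh to 40 MWh. Only the pre-dawn first hour still draws on the grid.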

What Does the Integrated Energy Campus Model Look Like?

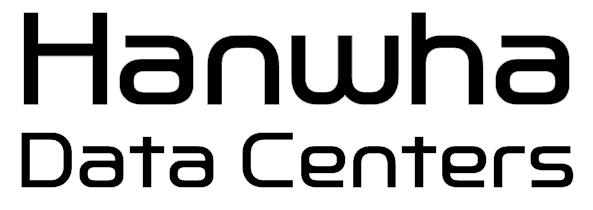

The most forward-thinking approach to powering AI at scale is the integrated energy campus: a purpose-built development that co-locates power generation, energy storage, utility connections, and data center-ready land within a single site. Rather than treating energy as something you procure after construction, the energy campus model starts with power as the foundational layer.

What Makes This Approach Different?

Traditional data center development follows a sequential process: acquire land, design the facility, then figure out power. The energy campus flips this sequence. Energy planning, land entitlement, utility coordination, and renewable integration happen simultaneously. The result is a site that arrives pre-equipped with the power capacity and grid connections that AI workloads demand. This model is gaining adoption because it directly addresses the two biggest challenges in the market: speed to deployment and certainty of power supply.
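The speed gain from running workstreams in parallel is a critical-path effect. Project length is set by the longest chain of dependent tasks, not by the sum of all tasks. The sketch below computes that chain for two dependency layouts. The task names, the four-task breakdown, and the month durations are hypothetical placeholders, not industry benchmarks. The sketch also assumes the dependencies contain no cycles.

```python
from functools import lru_cache


def project_duration(durations: dict[str, int], deps: dict[str, tuple[str, ...]]) -> int:
    """Length of the critical path: the longest chain of dependent tasks, in months."""

    @lru_cache(maxsize=None)
    def finish(task: str) -> int:
        # A task starts once all of its prerequisites have finished.
        start = max((finish(d) for d in deps.get(task, ())), default=0)
        return start + durations[task]

    return max(finish(task) for task in durations)


# Hypothetical durations in months; placeholders, not industry benchmarks.
durations = {"land": 12, "design": 9, "power": 36, "construction": 18}

# Traditional sequence: land, then design, then power, then construction.
sequential = {"design": ("land",), "power": ("design",), "construction": ("power",)}

# Power-first campus: power work starts on day one, alongside land and design.
power_first = {"design": ("land",), "construction": ("design", "power")}

print(project_duration(durations, sequential))   # 12 + 9 + 36 + 18
print(project_duration(durations, power_first))  # max(12 + 9, 36) + 18
```

With these placeholder durations, the sequential plan takes 75 months and the power-first plan takes 54. Power procurement stops waiting on design and becomes the only item on the critical path before construction.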

5 Emerging Power Models Reshaping AI Energy Infrastructure

The energy strategies powering the next generation of AI data centers are diverse and rapidly evolving. Here are the five models that matter most right now.

1. Behind-the-Meter Natural Gas Generation

Gas turbines installed directly at the data center campus bypass the grid interconnection queue entirely. This model offers the fastest path to operational power and is currently deployed across many of the largest new AI builds in Texas and other energy-rich states. When combined with emissions offsets or paired with renewable generation, it provides a practical bridge while longer-term clean energy sources mature.

2. Solar-Plus-Storage Hybrid Systems

Co-located solar arrays paired with battery storage deliver clean energy with built-in reliability. Solar-plus-storage can be deployed rapidly, especially in regions with high irradiance, and provides predictable long-term energy costs through power purchase agreements.

3. Grid Interconnection With On-Site Backup

Where grid capacity exists, traditional interconnection remains attractive. The difference now is that nearly every new project layers on-site backup generation and storage to hedge against the reality of constrained grids and evolving regulatory requirements.

4. Fuel Cell Deployments

Fuel cells running on natural gas or green hydrogen are attracting significant investment as a reliable baseload source. They generate power electrochemically rather than by combustion, so they emit far less NOx and other local air pollutants than turbines or engines, and essentially only water when running on hydrogen. They also run continuously, without the intermittency challenges of wind or solar.

5. Integrated Energy Campus Development

The most comprehensive model combines multiple generation sources, storage, and grid connections into a single pre-developed site. This approach eliminates the fragmented procurement process and delivers AI-ready energy infrastructure from the ground up.
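A campus with several power sources still has to decide, interval by interval, which source serves the load. One common approach is merit-order dispatch, shown here as a swapped-in illustration rather than any specific operator's method. Available sources are ranked by marginal cost and filled cheapest first. The source list, capacities, and costs below are hypothetical. A production scheduler would also weigh ramp rates, emissions limits, contracts, and reserve requirements.

```python
from typing import NamedTuple


class Source(NamedTuple):
    name: str
    available_mw: float   # capacity available this interval
    cost_per_mwh: float   # hypothetical marginal cost, $/MWh


def merit_order(load_mw: float, sources: list[Source]) -> dict[str, float]:
    """Serve the load from the cheapest available sources first."""
    plan: dict[str, float] = {}
    remaining = load_mw
    for src in sorted(sources, key=lambda s: s.cost_per_mwh):
        if remaining <= 0:
            break
        take = min(remaining, src.available_mw)
        if take > 0:
            plan[src.name] = take
            remaining -= take
    if remaining > 0:
        raise ValueError(f"unserved load: {remaining} MW")
    return plan


# Hypothetical campus portfolio for one interval.
campus = [
    Source("grid import", 100, 70),
    Source("gas turbines", 150, 55),
    Source("solar", 80, 0),
    Source("fuel cells", 50, 40),
]
print(merit_order(250, campus))
```

For a 250 MW load, solar and fuel cells are fully used, gas turbines cover the remaining 120 MW, and the grid connection is held in reserve.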

Power Model Comparison for AI Data Centers

| Power Model | Scalability | Best For |
| --- | --- | --- |
| Behind-the-meter gas | High (GW-class) | Rapid AI deployment |
| Solar + storage | High | Long-term cost stability |
| Grid + on-site backup | Moderate | Established markets |
| Fuel cells | Moderate | Clean baseload needs |
| Integrated energy campus | Very high | Hyperscale AI operations |

How Should Enterprises Rethink Their Energy Strategy for AI?

The old playbook of picking a market, signing a utility contract, and building out capacity over several years no longer works for AI workloads. The organizations that are winning the AI infrastructure race share a common trait: they treat energy as the first design decision, not the last procurement task.

This means evaluating potential sites based on power availability before any other factor. It means building relationships with energy developers who can deliver powered land at scale. And it means embracing hybrid power architectures that combine grid connections with on-site generation and storage to create the reliability and redundancy that AI compute power demands.
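One simple way to test a redundancy claim is an N-1 check: can the campus still carry its load if any single source fails? The worst single loss is the largest source, so the check compares the total minus the largest source against the load. This sketch counts only firm capacity and leaves intermittent solar out. It also ignores ramp time and transfer limits. The capacities are hypothetical.

```python
def survives_single_loss(load_mw: float, firm_sources_mw: dict[str, float]) -> bool:
    """N-1 check: can the remaining firm sources carry the load if any one is lost?"""
    total = sum(firm_sources_mw.values())
    # Losing the largest source is the worst single contingency.
    worst_loss = max(firm_sources_mw.values(), default=0.0)
    return total - worst_loss >= load_mw


# Hypothetical firm capacity (MW); intermittent solar is deliberately excluded.
hybrid = {"grid": 100, "gas turbines": 150, "fuel cells": 50}
grid_only = {"grid": 300}

print(survives_single_loss(140, hybrid))     # 300 - 150 = 150 MW left
print(survives_single_loss(140, grid_only))  # 300 - 300 = 0 MW left
```

Both setups have the same 300 MW total. Only the hybrid mix keeps a 140 MW load served after losing its largest source.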

For operators still relying solely on the grid, the math is simple. Every additional year of interconnection delay is a year of stranded capital and competitive disadvantage. The shift toward building energy for AI around speed, flexibility, and self-sufficiency is not a trend. It is a structural transformation of how data centers get built.

Frequently Asked Questions

What is AI energy infrastructure?

AI energy infrastructure refers to the complete power ecosystem required to support artificial intelligence workloads at data center scale. This includes grid interconnection, on-site generation (such as natural gas turbines, solar, and fuel cells), battery energy storage systems, and the land development and utility planning that enable these systems to deliver reliable data center power.

Why can’t the existing power grid support AI data centers?

The existing grid was designed for slow, predictable load growth. AI data centers require massive, concentrated power in compressed timeframes. Grid interconnection queues in many markets now stretch beyond five years, and utilities must make expensive infrastructure upgrades before delivering multi-hundred-megawatt loads. This mismatch is driving operators toward on-site and hybrid power solutions.

What is an energy campus for data centers?

An energy campus is a purpose-built development that integrates power generation, energy storage, grid connections, and data center-ready land within a single site. Instead of treating energy as a separate procurement step after construction, the energy campus model treats power as the foundational infrastructure layer, enabling faster deployment and greater supply certainty.

How does on-site power generation speed up data center deployment?

On-site generation, particularly natural gas turbines and solar-plus-storage systems, allows data center operators to bypass lengthy grid interconnection queues. By generating power directly at the campus, operators can compress the timeline from site acquisition to full operation, gaining a significant competitive advantage in the race to deliver AI compute power at scale.

Start Building Your AI Energy Infrastructure Today

The convergence of AI demand and power constraints is creating both urgency and opportunity. Organizations that move now to secure powered land, diversify their energy supply, and adopt integrated campus models will be positioned to scale AI workloads faster than competitors still waiting in grid queues. Hanwha Data Centers specializes in delivering the foundational energy infrastructure that makes AI-scale data center development possible, from strategic land acquisition and utility planning to renewable integration and grid interconnection. Connect with the team to explore how a power-first approach can accelerate your next data center project.