Key Takeaways

Redundant data center power has shifted from a best practice to a baseline requirement as AI workloads demand continuous, high-density energy delivery with zero tolerance for interruption.

- Power remains the leading cause of serious data center outages, and more than half of operators report that their most recent major outage cost over $100,000, according to Uptime Institute research.

- By 2030, 27% of data center facilities expect to run entirely on onsite generation, a 27x increase from just 1% reported a year earlier, signaling an industry-wide pivot toward power self-sufficiency.

- AI training workloads synchronize thousands of GPUs simultaneously, meaning a single power disruption can destroy weeks of computational progress and millions in investment.

- Integrated energy campuses that combine grid connections, renewable generation, and battery storage create layered failover energy architectures purpose-built for AI reliability.

Organizations planning AI infrastructure should evaluate energy partners capable of delivering multi-source, redundant power from the ground up rather than retrofitting legacy systems after the fact.

Power availability has become the single greatest bottleneck in AI data center development. According to Bloom Energy’s 2025 Data Center Power Report, 84% of data center decision-makers now rank power availability among their top three site selection priorities, and by 2030, 27% of facilities expect to run entirely on onsite generation. That’s up from just 1% a year earlier, a 27x increase. The message is clear: the grid alone cannot keep pace with AI’s appetite for electricity.

For enterprises deploying GPU clusters that consume 700–1,200 watts per chip across racks exceeding 80 kilowatts each, redundant data center power is no longer a nice-to-have checkbox. It’s the foundation of operational viability. When a single AI training run synchronizes thousands of processors for weeks on end, any interruption can erase millions of dollars in compute progress. The stakes have fundamentally changed, and power infrastructure needs to change with them.

Why Does Redundant Data Center Power Matter More in the AI Era?

Traditional enterprise computing followed predictable patterns: workloads peaked during business hours and dropped overnight. Infrastructure could be sized for average demand with comfortable margins for spikes. AI has rewritten those assumptions entirely. Training large language models and running inference at scale create sustained, high-density power demands that run 24/7 without the cyclical dips operators once relied on for maintenance windows.

What Makes AI Workloads Uniquely Vulnerable to Power Disruption?

GPU clusters training a foundation model must stay synchronized across thousands of processors. If power drops to even a fraction of those chips, the entire training run can fail, requiring a restart from the last checkpoint. Depending on checkpoint frequency and model complexity, that can mean days or weeks of lost compute time. Unlike a web server that reboots in minutes, AI training infrastructure doesn’t recover gracefully from unplanned shutdowns.
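To make the checkpoint trade-off concrete, here is a minimal sketch of how lost compute scales with cluster size and time since the last checkpoint. The function names, the restart overhead, and every number in the example are hypothetical and chosen only for illustration; real losses depend on the training framework, checkpoint strategy, and how the hardware is priced.

```python
def lost_gpu_hours(num_gpus: int, hours_since_checkpoint: float,
                   restart_hours: float = 0.0) -> float:
    """GPU-hours redone or left idle after an unplanned shutdown.

    Work completed since the last checkpoint is repeated, and the whole
    cluster waits while the job is restarted (restart_hours, assumed).
    """
    return num_gpus * (hours_since_checkpoint + restart_hours)


def lost_compute_cost(num_gpus: int, hours_since_checkpoint: float,
                      cost_per_gpu_hour: float,
                      restart_hours: float = 0.0) -> float:
    """Dollar value of the lost GPU-hours at an assumed hourly rate."""
    return lost_gpu_hours(num_gpus, hours_since_checkpoint,
                          restart_hours) * cost_per_gpu_hour


# Hypothetical cluster: 8,000 GPUs, 6 hours since the last checkpoint,
# 2 hours to restart, and an assumed $2.50 per GPU-hour.
print(lost_gpu_hours(8000, 6.0, 2.0))           # 64000.0
print(lost_compute_cost(8000, 6.0, 2.50, 2.0))  # 160000.0
```

Under these assumptions, the loss grows linearly with both cluster size and checkpoint interval, which is why infrequent checkpoints on large clusters raise the stakes of any power event.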

The financial exposure is substantial. According to Uptime Institute’s 2025 Annual Outage Analysis, power remains the leading cause of serious data center outages, responsible for 45% of significant incidents. More than half of operators reported that their most recent major outage cost over $100,000, with one in five exceeding $1 million. For AI-intensive operations running multi-million-dollar training jobs, those figures multiply quickly. A single power event at a large AI facility can translate into eight-figure losses when you factor in lost compute, delayed model releases, and downstream revenue impacts.

How Do AI Power Requirements Compare to Traditional Data Centers?

The gap between traditional and AI-driven power requirements illustrates why legacy redundancy approaches fall short. Here’s a snapshot of the differences infrastructure planners face today:

| Characteristic | Traditional Data Center | AI-Intensive Facility |
| --- | --- | --- |
| Rack Power Density | 10–15 kW per rack | 80–150 kW per rack |
| Workload Pattern | Cyclical peaks and valleys | Sustained 24/7 maximum load |
| Downtime Tolerance | Minutes to hours acceptable | Seconds can destroy training runs |
| Redundancy Standard | N+1 often sufficient | 2N or higher increasingly required |
| Grid Dependency | Primary power source | One layer of a multi-source strategy |

These differences explain why AI facilities require fundamentally different approaches to power architecture. A strategy that worked for 15 kW racks with intermittent workloads simply cannot protect infrastructure running at five to ten times that density around the clock.

What Does True Data Center Redundancy Look Like for AI?

Data center redundancy is measured using a simple but powerful framework: N represents the minimum power capacity needed to run at full load, and everything above N represents your safety net. For traditional enterprise workloads, N+1 configurations (one additional backup component per system) provided adequate protection. AI operations are pushing the industry toward 2N and beyond, where complete duplicate power systems run in parallel to eliminate any single point of failure.
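The N framework can be sketched in a few lines. This is a simplified model that only counts identical units (generators or UPS modules, for example) and ignores path independence, maintenance states, and derating. The function names and the example load and unit sizes are assumptions for illustration.

```python
import math


def units_required(load_kw: float, unit_kw: float) -> int:
    """N: the minimum number of identical units needed to carry full load."""
    return math.ceil(load_kw / unit_kw)


def installed_units(n: int, scheme: str) -> int:
    """Units installed under a given redundancy scheme."""
    schemes = {"N": n, "N+1": n + 1, "2N": 2 * n, "2N+1": 2 * n + 1}
    return schemes[scheme]


def survives(load_kw: float, unit_kw: float,
             installed: int, failed: int) -> bool:
    """True if the remaining units can still carry the full load."""
    return (installed - failed) * unit_kw >= load_kw


# Hypothetical facility: 10,000 kW load served by 2,500 kW units, so N = 4.
n = units_required(10_000, 2_500)
for scheme in ("N", "N+1", "2N", "2N+1"):
    total = installed_units(n, scheme)
    tolerated = total - n  # failures the scheme can absorb in this model
    print(scheme, total, tolerated)
```

In this model N+1 absorbs one unit failure, while 2N absorbs the loss of an entire set of N units, which is the sense in which 2N removes single points of failure.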

What Are the Core Components of a Multi-Layered Power Architecture?

Modern redundant power architecture for AI facilities layers multiple systems to create defense in depth. Each component addresses a specific failure scenario, and together they form a comprehensive resilient power infrastructure capable of handling anything from a momentary voltage sag to a sustained regional grid outage.

Uninterruptible power supply (UPS) systems act as the first line of defense, providing instantaneous battery-backed power that bridges the gap between a utility interruption and generator startup. For AI workloads, modern lithium-ion UPS systems offer faster response times and higher power density than traditional lead-acid alternatives. Behind the UPS, backup generators provide extended runtime during prolonged outages. AI facilities increasingly deploy generators in 2N configurations, maintaining two fully independent generator systems each capable of carrying the entire facility load.
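As a rough sizing illustration, the energy a UPS must deliver is the load multiplied by the time it has to bridge until generators take over. The sketch below assumes a constant load and a single usable-capacity fraction for the batteries. The function name, the 60-second bridge time, and the 80% usable fraction are hypothetical values, not figures from any vendor or standard.

```python
def ups_bridge_energy_kwh(load_kw: float, bridge_seconds: float,
                          usable_fraction: float = 1.0) -> float:
    """Nameplate battery energy needed to carry load_kw for bridge_seconds.

    usable_fraction is the assumed share of nameplate capacity that can
    actually be discharged (0 < usable_fraction <= 1).
    """
    if not 0 < usable_fraction <= 1:
        raise ValueError("usable_fraction must be in (0, 1]")
    delivered_kwh = load_kw * bridge_seconds / 3600  # kW * hours
    return delivered_kwh / usable_fraction


# Hypothetical 7,200 kW IT load bridged for 60 seconds.
print(ups_bridge_energy_kwh(7_200, 60))                   # 120.0
print(round(ups_bridge_energy_kwh(7_200, 60, 0.8), 1))    # 150.0
```

The energy figure is small relative to the power figure, which is why UPS systems are specified mainly by how much power they can deliver instantly rather than by how long they can run.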

Battery energy storage systems (BESS) add another critical layer. Unlike traditional UPS batteries designed for short bridge periods, large-scale BESS installations can sustain operations for extended durations while also smoothing power fluctuations from renewable energy sources. These systems respond to load changes within milliseconds, which is essential for AI workloads that experience rapid power demand shifts as training phases transition.

Why Is Onsite Power Replacing Grid-Only Strategies?

The shift toward onsite power generation represents one of the most significant infrastructure trends in the data center industry’s history. Grid interconnection queues have swelled dramatically, with industry analyses estimating more than 12,000 active projects seeking grid connection across the United States, and utilities in major markets now quote connection timelines that stretch years into the future. For AI operators who need power now, waiting simply isn’t an option.

What Is Driving the Onsite Power Revolution?

The math behind onsite power adoption is straightforward. When grid connections take years and AI infrastructure generates revenue from day one of operation, every month of delay represents enormous opportunity cost. Onsite power generation, whether through solar arrays, battery storage, natural gas, or hybrid configurations, puts operators in control of their own deployment timelines. It also provides a fundamental failover energy advantage: when the grid goes down, onsite generation keeps operations running independently.

According to the 2025 Data Center Power Report mid-year update, 38% of data center facilities expect to use some form of onsite generation for primary power by 2030, up from 13% just one year earlier. Decision-makers are now prioritizing time-to-power and the ability to support fluctuating AI workloads alongside traditional cost and reliability considerations. This isn’t a marginal shift. It’s a fundamental rethinking of how energy infrastructure for AI gets planned and delivered.

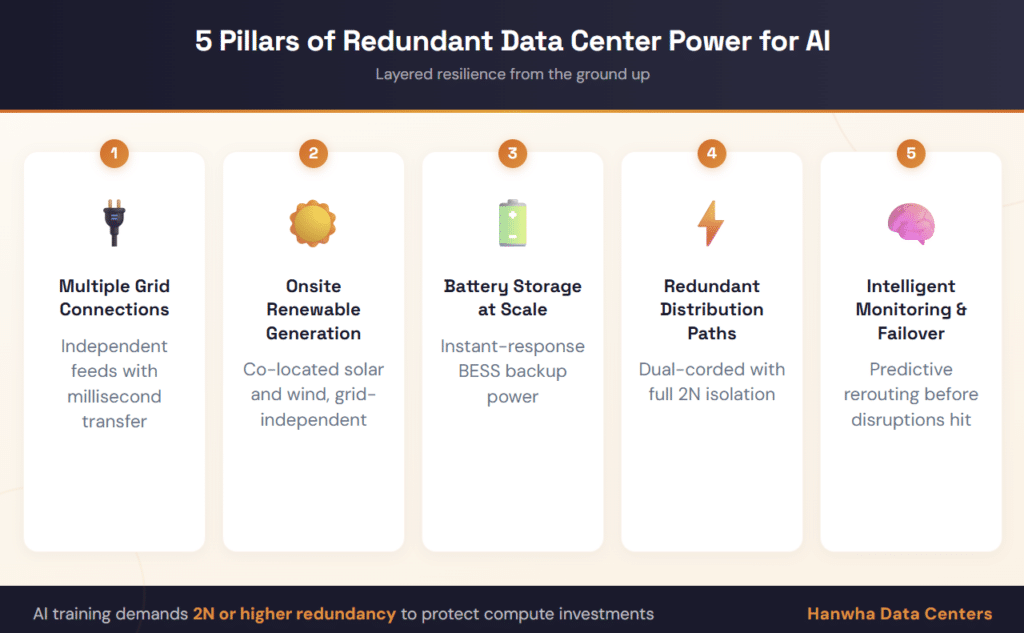

5 Pillars of Redundant Data Center Power for AI Facilities

Building effective AI reliability into power infrastructure requires a layered approach. Here are the five critical elements that define a resilient, AI-ready power architecture:

1. Multiple Independent Grid Connections. Connecting to separate utility substations fed from different transmission sources eliminates single points of failure at the grid level. Automatic transfer systems can switch between connections within milliseconds.

2. Onsite Renewable Generation. Solar arrays and wind installations co-located with data centers provide a generation source that operates independently of grid conditions. When paired with storage, these systems deliver both primary power capacity and failover energy reserves.

3. Battery Energy Storage at Scale. Large-format BESS installations bridge the gap between intermittent generation and continuous demand while providing millisecond-response backup during power transitions. They also smooth voltage and frequency irregularities that can damage sensitive GPU hardware.

4. Redundant Power Distribution Paths. Dual-corded servers and independent power distribution units ensure that no single cable, breaker, or distribution panel failure can interrupt critical AI workloads. 2N distribution provides complete path redundancy.

5. Intelligent Monitoring and Automated Failover. Real-time power monitoring combined with automated switching systems detects anomalies and reroutes power before interruptions reach IT equipment. Predictive analytics can identify potential failures before they occur.
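The automated failover idea in the fifth pillar can be sketched as a priority-ordered selection over monitored feeds. This is an illustrative model only: the `PowerFeed` class, the feed names, and the capacities are hypothetical, and real transfer logic runs in dedicated switchgear and control systems rather than application code.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class PowerFeed:
    name: str
    capacity_kw: float
    healthy: bool  # result of an assumed monitoring check


def select_feed(feeds: Sequence[PowerFeed],
                load_kw: float) -> Optional[PowerFeed]:
    """Return the first healthy feed, in priority order, that can carry
    the load, or None if no feed qualifies."""
    for feed in feeds:
        if feed.healthy and feed.capacity_kw >= load_kw:
            return feed
    return None


# Hypothetical priority order: primary grid, secondary grid, generators.
feeds = [
    PowerFeed("grid_a", 10_000, healthy=False),  # simulated utility fault
    PowerFeed("grid_b", 10_000, healthy=True),
    PowerFeed("generators", 12_000, healthy=True),
]
chosen = select_feed(feeds, load_kw=9_000)
print(chosen.name if chosen else "no feed available")  # grid_b
```

Keeping the priority order explicit makes the failover behavior easy to audit: the list itself documents which source takes over when the one above it fails.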

How Does Integrated Energy Campus Design Strengthen Redundancy?

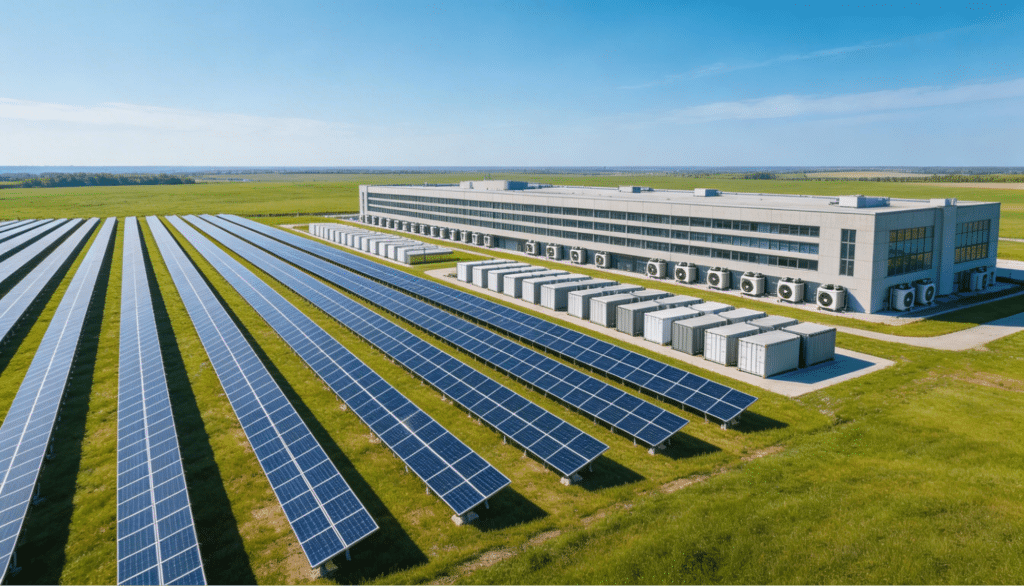

The most effective approach to redundant data center power isn’t bolting backup systems onto existing infrastructure. It’s designing power redundancy into the site from the very first stage of development. That’s why integrated energy campus models are gaining traction across the industry. When land acquisition, utility planning, grid interconnection, and onsite generation are coordinated as a unified development effort, the result is inherently more resilient than piecemeal approaches.

What Advantages Does a Ground-Up Approach Offer?

Site selection based on power availability, renewable energy potential, and grid capacity sets the foundation for everything that follows. A well-chosen site with robust utility access, available land for onsite generation, and favorable interconnection timelines provides natural advantages that no amount of equipment can replicate at a poorly positioned location. It addresses the infrastructure gap before construction ever begins.

This ground-up philosophy extends to how power sources interact. Rather than treating grid power, renewables, and battery storage as separate systems with separate management, integrated campuses orchestrate all sources through unified control platforms. These platforms dynamically balance load across available sources, prioritize the most cost-effective generation at any given moment, and automatically shift to backup sources if any primary source degrades. The result is a multi-layered failover energy architecture that’s more resilient and more efficient than any single approach.
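One simple way to picture that orchestration is a cost-ordered dispatch: fill the load from the cheapest available source first, then move to the next. The sketch below is an assumption about how such balancing could work, not a description of any specific control platform. The `EnergySource` class, the source names, and the prices are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Sequence, Tuple


@dataclass
class EnergySource:
    name: str
    available_kw: float   # 0 if the source is down or degraded
    cost_per_kwh: float   # assumed marginal cost


def dispatch(sources: Sequence[EnergySource],
             load_kw: float) -> Tuple[Dict[str, float], float]:
    """Allocate load to the cheapest sources first.

    Returns (allocation in kW per source, unserved kW).
    """
    allocation: Dict[str, float] = {}
    remaining = float(load_kw)
    for src in sorted(sources, key=lambda s: s.cost_per_kwh):
        if remaining <= 0:
            break
        take = min(src.available_kw, remaining)
        if take > 0:
            allocation[src.name] = take
            remaining -= take
    return allocation, max(remaining, 0.0)


# Hypothetical campus with a 9,000 kW load.
normal = [
    EnergySource("solar", 3_000, 0.03),
    EnergySource("grid", 5_000, 0.08),
    EnergySource("battery", 2_000, 0.12),
]
print(dispatch(normal, 9_000))
# ({'solar': 3000.0, 'grid': 5000.0, 'battery': 1000.0}, 0.0)
```

If the grid entry drops to zero available capacity, the same function shifts load to the battery automatically and reports any shortfall as unserved kW, which is the signal a real platform would use to start additional backup sources.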

What Should AI Operators Consider When Choosing Redundancy Levels?

Choosing the right level of data center redundancy requires balancing uptime requirements against infrastructure investment. Here’s how common redundancy levels, and the uptime targets typically associated with them, map to AI workload suitability:

| Redundancy Level | Uptime Target | Power Architecture | AI Suitability |
| --- | --- | --- | --- |
| N+1 | 99.98% | Single path with backup components | Suitable for inference-only workloads with checkpoint recovery |
| 2N | 99.99% | Fully mirrored systems on independent paths | Recommended minimum for AI training operations |
| 2N+1 | 99.999% | Mirrored systems plus additional standby | Ideal for mission-critical, continuous AI training |
| Multi-Source Campus | 99.999%+ | Grid + onsite generation + storage + renewables | Purpose-built for hyperscale AI with zero downtime tolerance |
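To put the uptime targets in the table into more tangible terms, the short sketch below converts an uptime percentage into allowable downtime per year. It assumes a 365-day year and treats the target as a simple annual average. The function name is illustrative.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes in a 365-day year


def annual_downtime_minutes(uptime_percent: float) -> float:
    """Allowable downtime per year implied by an uptime percentage."""
    return (1 - uptime_percent / 100) * MINUTES_PER_YEAR


for target in (99.98, 99.99, 99.999):
    print(f"{target}% -> {annual_downtime_minutes(target):.1f} min/year")
# 99.98%  -> about 105.1 minutes per year
# 99.99%  -> about 52.6 minutes per year
# 99.999% -> about 5.3 minutes per year
```

Each additional "nine" cuts the annual downtime budget by roughly a factor of ten, so the step from 99.99% to 99.999% leaves only about five minutes a year.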

The trend is unmistakable. As AI workloads grow in scale and economic importance, the redundancy floor keeps rising. What qualified as enterprise-grade five years ago now falls short of minimum requirements for serious AI deployments.

Frequently Asked Questions

What does power redundancy mean in a data center?

Power redundancy in a data center refers to the practice of deploying duplicate or backup power systems, including UPS units, generators, battery storage, and multiple grid connections, so that operations continue seamlessly if any single component or power source fails. Redundancy configurations range from N+1 (one spare component beyond the N required to carry full load) to 2N (complete system duplication) and beyond.

Why is onsite power generation important for AI data centers?

Onsite power generation reduces dependence on congested utility grids, accelerates deployment timelines, and provides an independent failover energy source. For AI operations that cannot tolerate interruptions, onsite generation adds a critical redundancy layer that grid-only strategies cannot match, especially as interconnection queues stretch years into the future.

How does data center redundancy differ for AI versus traditional workloads?

Traditional workloads often tolerate brief interruptions and can recover from standard backups. AI training workloads synchronize thousands of GPUs simultaneously, so even seconds of downtime can halt an entire training run and force a restart from the last checkpoint, wasting compute worth millions of dollars. This demands higher redundancy levels, faster failover times, and more sophisticated power quality management than traditional enterprise computing requires.

What is the difference between N+1 and 2N redundancy?

N+1 provides one additional backup component for each critical system, protecting against a single component failure. 2N fully duplicates the entire power infrastructure with two independent systems, each capable of carrying the full facility load. For AI data centers with zero downtime tolerance, 2N or higher configurations are increasingly becoming the standard.

Build Your AI Infrastructure on a Foundation That Won’t Fail

Redundant data center power is the difference between an AI operation that delivers consistent results and one that’s perpetually recovering from the last disruption. As workloads intensify, rack densities climb, and grid constraints tighten, the organizations that invest in layered, multi-source power infrastructure will be the ones positioned to lead.

The playbook is evolving: from single-source grid reliance to integrated energy campuses where redundancy is engineered from the ground up. Site selection, power procurement, onsite generation, and storage all need to work as a coordinated system rather than disconnected afterthoughts.

Hanwha Data Centers specializes in developing the foundational energy infrastructure that makes AI-ready, resilient data centers possible. From powered land development and grid interconnection to renewable integration and scalable energy campus design, their team builds the power foundation your AI operations demand. Connect with Hanwha Data Centers to start planning your redundant power strategy today.