Key Takeaways

AI-ready digital infrastructure is the foundational energy and site infrastructure that enables data centers to support massive AI computational demands.

- Power constraints are now the primary bottleneck – grid interconnection timelines and power delivery capacity limit AI deployment more than computational resources or algorithms

- Demand is driving investment at unprecedented scale – global data center power consumption is projected to reach 945 TWh by 2030, requiring massive infrastructure investments in power generation and delivery

- Infrastructure readiness gaps create competitive disadvantage – organizations struggle to secure adequate power capacity, with traditional data center approaches unable to meet AI’s continuous high-density demands

- Strategic energy campus partnerships are essential – organizations that secure comprehensive infrastructure solutions integrating site preparation, power delivery, and renewable generation will determine who leads in the AI economy

The artificial intelligence revolution has created an infrastructure crisis that most enterprises never saw coming. While organizations rushed to experiment with generative AI and deploy large language models, a more fundamental challenge emerged: the physical infrastructure supporting these systems simply wasn’t built for AI’s unprecedented power demands.

According to the International Energy Agency, global electricity consumption for data centers is projected to more than double by 2030, reaching approximately 945 terawatt-hours. This growth has made AI-ready digital infrastructure the defining constraint for organizations seeking competitive advantage through artificial intelligence.

What Makes Digital Infrastructure “AI-Ready”?

AI-ready digital infrastructure represents a fundamental departure from traditional data center capabilities. It encompasses the complete physical foundation required to support AI workloads, including power generation and delivery systems, site preparation, grid interconnection, renewable energy integration, and energy storage solutions.

The distinction matters because AI operations consume dramatically different resources than conventional computing. Where traditional enterprise applications might peak and drop throughout the day, AI training sessions and inference operations run continuously, demanding sustained high-power consumption that challenges existing grid infrastructure and conventional facility design.

Modern AI workloads also require far higher power densities than traditional computing. An AI rack draws several times the sustained power of a conventional enterprise server rack, an increase that existing facilities cannot absorb by simply adding capacity. This shift requires new approaches to site selection, power delivery, and energy infrastructure planning focused on gigawatt-scale capacity.

The Core Components of AI-Ready Infrastructure

Power Generation and Delivery Systems

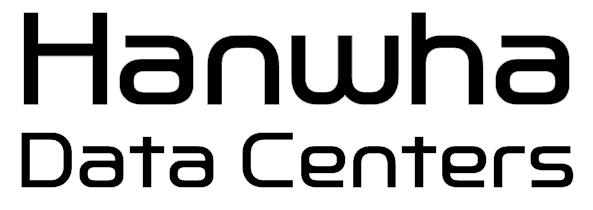

The foundation begins with adequate power generation capacity. Traditional grid connections often cannot meet the massive, continuous power demands of AI operations. Digital infrastructure providers are now developing dedicated generation assets, including large-scale solar installations, wind farms, and energy storage systems designed specifically for data center loads. Modern AI facilities require multiple redundant power sources since a single training session might run for weeks, and any interruption can result in significant losses.

Strategic Site Selection and Preparation

Location determines success in AI infrastructure development. Viable sites offer proximity to existing transmission infrastructure, access to renewable energy resources, and supportive local regulatory environments. Site preparation includes environmental assessments, zoning coordination, utility infrastructure development, and engineering aligned with high-density AI operations.

Grid Interconnection Infrastructure

Grid interconnection has emerged as the primary bottleneck constraining development. Goldman Sachs Research indicates connection timelines often extend 4-8 years, with utilities struggling to upgrade transmission capacity due to permitting delays and capital constraints. This has made behind-the-meter power solutions and direct renewable generation increasingly attractive.

Renewable Energy Integration

Sustainability has evolved from environmental preference to business imperative. Renewable energy for AI data centers requires sophisticated integration of multiple generation sources to ensure 24/7 reliability. Solar production peaks during daylight, wind resources often strengthen at night, and energy storage bridges the gaps. Effective solutions combine these elements with strategic geographic distribution to minimize intermittency.
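The interplay between solar, wind, and storage can be illustrated with a minimal hourly dispatch sketch. Everything in it is an assumption made for illustration: the function name `simulate_day`, the half-sine solar shape, the night-weighted wind shape, and the lossless battery. Real campus design relies on measured resource data and detailed engineering models.

```python
import math


def simulate_day(load_mw, solar_peak_mw, wind_mean_mw, battery_mwh, battery_start_mwh):
    """Return unmet energy (MWh) over 24 hourly steps for a constant load.

    Illustrative only: generation shapes are hypothetical and the battery
    is treated as lossless with no charge or discharge rate limit.
    """
    stored = battery_start_mwh
    unmet = 0.0
    for hour in range(24):
        # Hypothetical solar shape: half-sine between 06:00 and 18:00, zero otherwise.
        solar = solar_peak_mw * max(0.0, math.sin(math.pi * (hour - 6) / 12))
        # Hypothetical wind shape: strongest around midnight, weakest around midday.
        wind = wind_mean_mw * (1.0 + 0.4 * math.cos(2 * math.pi * hour / 24))
        surplus = solar + wind - load_mw
        if surplus >= 0:
            # Charge the battery with surplus generation, up to its capacity.
            stored = min(battery_mwh, stored + surplus)
        else:
            # Discharge to cover the shortfall; whatever remains is unmet load.
            discharge = min(stored, -surplus)
            stored -= discharge
            unmet += -surplus - discharge
    return unmet


if __name__ == "__main__":
    without_storage = simulate_day(100, 150, 60, 0, 0)
    with_storage = simulate_day(100, 150, 60, 400, 0)
    print(f"Unmet energy without storage: {without_storage:.1f} MWh")
    print(f"Unmet energy with 400 MWh storage: {with_storage:.1f} MWh")
```

Running the two cases side by side shows how storage shifts midday surplus into evening hours. Under these assumed shapes it does not help in the early morning, because the battery starts the day empty.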

Why AI Infrastructure Requirements Differ From Traditional Data Centers

The computational demands of artificial intelligence have fundamentally transformed data center energy requirements. Understanding these differences helps explain why traditional infrastructure approaches fail to meet AI’s unique needs and why specialized solutions have become essential.

Continuous High-Power Operations

AI workloads exhibit dramatically different operational patterns than conventional computing. Traditional enterprise applications typically scale up and down with business hours and user demand. Email servers, databases, and web applications experience predictable daily cycles with clear peak and valley periods.

AI operations, particularly model training and real-time inference, maintain sustained high power consumption around the clock. Training large language models requires thousands of graphics processing units operating simultaneously for extended periods, often weeks or months without interruption. This creates power demands that must be sustained continuously, unlike traditional computing that experiences significant load variations throughout the day.
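The difference between cyclical and continuous operation can be made concrete with a short load-factor calculation. The two hourly profiles below are hypothetical and chosen only to share the same 10 MW peak. The helper names `load_factor` and `daily_energy_mwh` are likewise illustrative.

```python
def load_factor(hourly_mw):
    """Average load divided by peak load over the period."""
    return sum(hourly_mw) / (len(hourly_mw) * max(hourly_mw))


def daily_energy_mwh(hourly_mw):
    """Energy over the day, treating each entry as one hour at constant power."""
    return float(sum(hourly_mw))


# Hypothetical enterprise profile: 10 MW from 08:00 to 18:00, 4 MW otherwise.
enterprise = [10.0 if 8 <= h < 18 else 4.0 for h in range(24)]
# Hypothetical AI training profile: flat 10 MW around the clock.
ai_training = [10.0] * 24

if __name__ == "__main__":
    for name, profile in (("enterprise", enterprise), ("ai_training", ai_training)):
        print(name, daily_energy_mwh(profile), round(load_factor(profile), 2))
```

With these assumed numbers, the enterprise profile uses 156 MWh per day at a load factor of 0.65, while the flat AI profile uses 240 MWh at a load factor of 1.0. The peak is identical, but the continuous profile needs more energy and leaves no off-peak slack for the grid.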

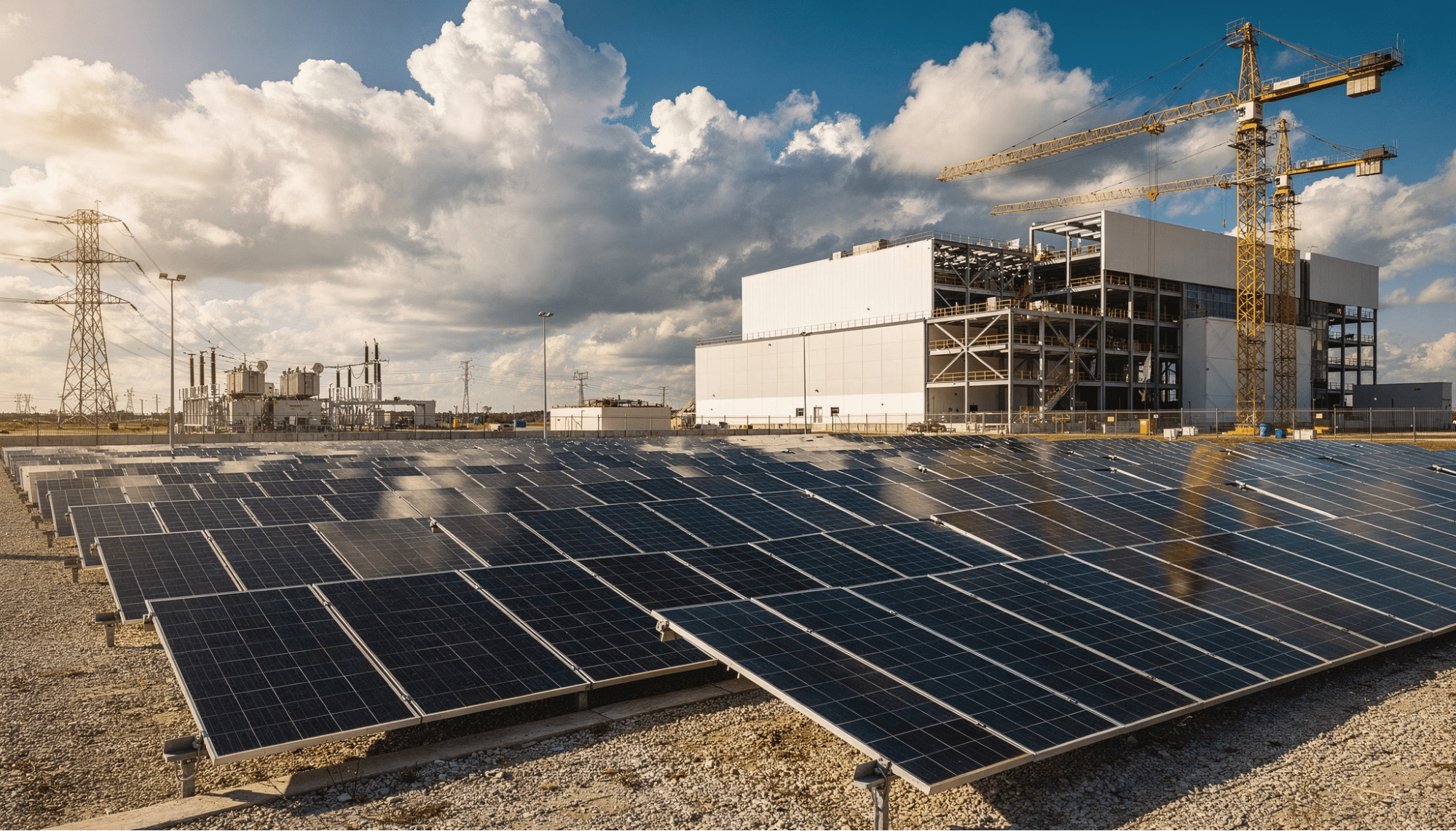

| Infrastructure Characteristic | Traditional Data Centers | AI-Ready Data Centers |
| --- | --- | --- |
| Power Density Per Rack | Lower density | 5-10x higher density |
| Operational Pattern | Variable, cyclical | Continuous, sustained |
| Grid Connection Timeline | Shorter approval cycles | Multi-year approval processes |
| Renewable Integration | Optional | Essential |
| Power Usage Growth | Gradual annual increases | 160% increase by 2030 |

Extreme Power Density Challenges

The shift from CPU-based computing to GPU-intensive AI processing has created power density requirements that challenge every aspect of facility design. Modern AI processors consume significantly more power than traditional server CPUs, and when deployed in dense rack configurations, power consumption reaches levels that strain existing electrical infrastructure.

This concentration of power creates cascading effects throughout the entire energy campus. Electrical distribution systems must handle higher voltages and currents, backup power systems require greater capacity, and facilities must be engineered from the ground up to support these extreme loads. Traditional data center retrofits cannot accommodate these requirements, making purpose-built infrastructure with dedicated power generation and delivery systems essential for AI operations.

Geographic Concentration and Competition

Data center capacity in the United States has historically concentrated in just a few regional areas, creating intense pressure on local electrical grids and transmission infrastructure. This concentration reflects development patterns around connectivity hubs and enterprise demand, but it creates significant challenges for AI infrastructure expansion.

The result is fierce competition for scarce resources. Multiple organizations often propose similar large-scale projects to the same utilities, seeking the quickest access to power. Meeting growing demand requires expanding beyond traditional data center markets to locations where land and power are more readily available. This geographic flexibility has become a competitive advantage, with successful infrastructure developers securing sites that offer both transmission access and renewable energy potential.

How Can Enterprises Assess Their AI Infrastructure Readiness?

Organizations must evaluate current infrastructure capabilities against specific AI deployment demands. This assessment determines whether existing resources can support AI initiatives or whether new infrastructure partnerships are required.

Power Capacity and Scalability Evaluation

Quantify current power consumption and available capacity. Traditional facilities typically operate with 20-30% reserve capacity, but AI workloads quickly consume this buffer. Calculate power requirements for planned deployments, factoring in computational loads and supporting infrastructure. Many organizations discover that greenfield development or partnerships with specialized AI infrastructure providers offer more cost-effective paths than retrofitting legacy facilities.
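A first-pass version of this evaluation can be sketched in a few lines. The sketch assumes that supporting infrastructure (cooling, power conversion, and similar overhead) is captured by a single power usage effectiveness (PUE) multiplier. The `Deployment` class, the `headroom_kw` helper, and all numeric values are hypothetical placeholders rather than figures from any specific facility.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    racks: int
    kw_per_rack: float  # IT load per rack (assumed value)
    pue: float          # power usage effectiveness: facility power / IT power

    def facility_kw(self) -> float:
        """Total facility draw, including supporting infrastructure overhead."""
        return self.racks * self.kw_per_rack * self.pue


def headroom_kw(site_capacity_kw: float, current_load_kw: float, planned: Deployment) -> float:
    """Capacity left after adding the planned deployment; negative means a shortfall."""
    return site_capacity_kw - current_load_kw - planned.facility_kw()


if __name__ == "__main__":
    # Hypothetical site: 10 MW capacity running at 7.5 MW, i.e. 25% reserve.
    planned = Deployment(racks=50, kw_per_rack=40.0, pue=1.3)
    remaining = headroom_kw(10_000.0, 7_500.0, planned)
    print(f"Planned deployment draws {planned.facility_kw():.0f} kW")
    print(f"Headroom after deployment: {remaining:.0f} kW")
```

In this illustrative case, 50 racks at an assumed 40 kW each consume the entire 25% reserve and leave the site about 100 kW short, which is the kind of result that points toward greenfield development or a partnership.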

Grid Connection and Energy Source Analysis

Examine current power procurement and grid interconnection capacity. New connections face multi-year approval processes, requiring immediate action for organizations planning significant AI deployments. Evaluate opportunities for renewable energy integration and behind-the-meter generation. Direct power purchase agreements can provide cost stability and accelerate deployment compared to traditional grid connections.

Timeline and Geographic Flexibility Assessment

AI-ready digital infrastructure development requires significant lead time for site identification, land acquisition, utility coordination, and grid interconnection approvals. Organizations with aggressive timelines must secure existing capacity or consider interim solutions. Geographic flexibility significantly accelerates deployment, as markets with available power capacity, suitable land, and existing transmission infrastructure enable faster execution than congested metropolitan areas. Strategic site selection becomes a core competitive advantage in securing AI infrastructure.

What Are the Long-Term Implications of Infrastructure Constraints?

Infrastructure challenges carry profound implications for competitive positioning and market dynamics that extend far beyond technical hurdles.

Competitive Advantage Through Infrastructure Access

Organizations securing adequate infrastructure today gain first-mover advantages that compound over time. AI capabilities enable improved customer experiences, operational efficiencies, and innovations that create widening gaps between leaders and followers. McKinsey analysis projects capital expenditures on data center infrastructure will exceed $1.7 trillion globally by 2030, reflecting the strategic importance enterprises place on securing comprehensive infrastructure solutions.

The most successful organizations recognize that AI infrastructure extends beyond computing hardware to encompass the complete energy campus: strategic land positions, dedicated power generation, grid interconnection infrastructure, and integrated renewable energy systems. This holistic approach to infrastructure development determines which organizations can scale AI capabilities and which face constraints regardless of their technological expertise.

Market Consolidation and Partnership Models

Infrastructure constraints are driving new partnership models between hyperscale providers, specialized infrastructure developers, and enterprise customers. Traditional build-own-operate models are giving way to flexible arrangements that distribute capital requirements and accelerate deployment. Organizations must evaluate whether building proprietary infrastructure makes strategic sense or whether partnerships with established digital infrastructure providers offer better risk-adjusted returns.

5 Critical Questions Every AI Leader Should Ask About Infrastructure

1. How Will We Secure Adequate Power for Planned AI Deployments?

Power availability determines AI strategy feasibility. Leaders must understand capacity constraints, evaluate grid connection options, and explore alternative power sources including dedicated renewable generation. Organizations planning significant expansion should begin infrastructure discussions 24-36 months before anticipated deployment.
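The 24-36 month lead time can be turned into a concrete planning window by working backward from a target deployment date. The following is a minimal sketch. The function names and the example date are hypothetical, and results are rounded to the first day of the month for simplicity.

```python
from datetime import date


def months_before(target: date, months: int) -> date:
    """First day of the month that falls `months` months before `target`."""
    index = target.year * 12 + (target.month - 1) - months
    return date(index // 12, index % 12 + 1, 1)


def planning_window(deployment: date, earliest_months: int = 36, latest_months: int = 24):
    """Return (earliest, latest) start dates for infrastructure discussions."""
    return months_before(deployment, earliest_months), months_before(deployment, latest_months)


if __name__ == "__main__":
    earliest, latest = planning_window(date(2028, 6, 15))
    print(f"Start discussions between {earliest} and {latest}")
```

For an assumed mid-2028 deployment, the window opens in June 2025 and closes in June 2026.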

2. What Geographic Flexibility Do We Have for AI Infrastructure?

Willingness to consider diverse locations dramatically expands options. Markets outside traditional hubs often offer faster grid connections, lower power costs, and more favorable development conditions. Assess whether AI workloads can be geographically distributed to capitalize on these opportunities.

3. Should We Build, Partner, or Pursue a Hybrid Approach?

Each infrastructure model carries distinct tradeoffs. Building proprietary facilities maximizes control but requires substantial capital and expertise. Partnerships accelerate deployment and reduce risk but may limit customization. Most organizations find that hybrid approaches deliver the best outcomes, combining owned capacity for core operations with partnerships for growth.

4. How Do We Balance Cost, Sustainability, and Reliability?

AI infrastructure decisions involve complex tradeoffs. Renewable energy integration may increase upfront investment but provides long-term cost stability and meets environmental objectives. Leaders must evaluate these factors through comprehensive frameworks considering both financial and strategic implications.

5. What Talent and Expertise Do We Need to Manage AI Infrastructure?

Operating AI-ready digital infrastructure requires specialized expertise many organizations lack. From power engineering to renewable energy procurement to facility operations, skill requirements differ substantially from traditional IT infrastructure management. Organizations must assess whether to build expertise, partner with specialized providers, or pursue hybrid models.

Building the Foundation for AI Success

The AI infrastructure challenge represents one of the most significant strategic decisions organizations will make over the next decade. Companies that secure comprehensive energy campus solutions position themselves to capitalize on AI opportunities, while those that delay infrastructure decisions find themselves constrained regardless of their technological capabilities.

The magnitude of investment required reflects the transformational nature of artificial intelligence. Hyperscalers are collectively investing hundreds of billions of dollars annually, recognizing that infrastructure access will determine competitive positioning in the AI economy. Enterprise organizations face similar imperatives at different scales, needing to secure foundational infrastructure that supports both current initiatives and future expansion.

Success requires moving beyond traditional approaches to infrastructure development. The power densities, operational patterns, and scale requirements of AI workloads demand purpose-built energy campuses that integrate renewable generation, strategic land acquisition, sophisticated power delivery systems, and grid interconnection expertise from the ground up. Organizations must evaluate whether their current infrastructure can support AI ambitions or whether partnerships with specialized infrastructure developers offer faster, more cost-effective paths forward.

The window for securing favorable infrastructure positions is narrowing as competition for resources intensifies. Grid interconnection timelines extend as more organizations pursue similar projects, power capacity in key markets becomes increasingly scarce, suitable sites with transmission access grow harder to find, and development costs rise with demand. Organizations that begin comprehensive infrastructure planning now gain advantages that compound over time.

Frequently Asked Questions

What is the primary difference between AI-ready and traditional data center infrastructure?

AI-ready digital infrastructure delivers significantly higher power densities, supports continuous 24/7 operations rather than variable workloads, and integrates renewable energy sources to ensure sustainable, reliable power delivery at massive scale. Most importantly, it encompasses the complete energy campus including land preparation, power generation, and grid interconnection rather than just facility space.

How long does it take to develop new AI infrastructure from scratch?

Development timelines vary significantly based on site characteristics and grid interconnection approval processes. In many markets, grid connection approvals alone take multiple years. Sites with existing transmission infrastructure, suitable land positions, and renewable energy access can accelerate development considerably, making strategic site selection critical.

Why is renewable energy integration essential for AI data centers?

Renewable energy provides long-term cost stability that traditional grid power cannot match, meets increasingly stringent corporate sustainability commitments, and enables behind-the-meter generation that bypasses the grid interconnection bottlenecks constraining traditional development approaches. Energy campuses integrating solar, wind, and storage systems offer the most reliable path to securing gigawatt-scale power capacity.

What are the biggest bottlenecks facing AI infrastructure development?

Grid interconnection approval processes and transmission capacity represent the primary constraints. Power generation capacity, site availability near existing infrastructure, land acquisition in suitable locations, and specialized talent for energy campus operations also create significant challenges for scaling AI infrastructure. Organizations need comprehensive solutions that address all these elements simultaneously.

How much will AI infrastructure investment total by 2030?

Global capital expenditures on data center infrastructure are projected to exceed $1.7 trillion by 2030. These figures reflect the strategic importance of securing adequate foundational infrastructure including land, power generation, grid connectivity, and renewable energy systems to support AI capabilities and competitive positioning.

Partner With Infrastructure Experts Who Understand AI’s Unique Demands

The complexity of AI infrastructure development makes specialized expertise essential. Successfully deploying gigawatt-scale energy campuses requires deep knowledge across site acquisition, land preparation, power infrastructure engineering, grid interconnection navigation, renewable energy integration, and long-term capacity planning.

Hanwha Data Centers brings comprehensive capabilities in energy campus development, delivering the foundational infrastructure enterprises need to support demanding AI workloads. Our integrated approach addresses the complete infrastructure stack: identifying and acquiring strategic land positions near existing transmission infrastructure, developing dedicated power generation and delivery systems, navigating complex grid interconnection processes, integrating renewable energy solutions at scale, and preparing sites engineered specifically for AI-era computing demands. Contact our team to discuss how we can support your AI infrastructure requirements with proven energy campus solutions.